Achieving Multi-Site Reproducibility in IHC: A Complete Guide to Robust Assay Development


Abstract

This comprehensive guide addresses the critical challenge of ensuring immunohistochemistry (IHC) assay robustness and reproducibility across multiple laboratory sites in research and drug development. We explore the foundational importance of reproducibility in translational science, detail actionable methodological frameworks for standardizing pre-analytical, analytical, and post-analytical variables, provide systematic troubleshooting strategies for common cross-site discrepancies, and review validation protocols and comparative analyses of standardization tools. Designed for researchers, scientists, and drug development professionals, this article synthesizes current best practices and emerging standards to enable reliable, comparable IHC data in multi-center studies.

Why Reproducibility Fails: The Foundational Challenge of Multi-Site IHC

Defining Robustness and Reproducibility in the IHC Context

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What are the most critical pre-analytical variables affecting IHC robustness across multiple sites? A: Pre-analytical variability is the primary source of non-reproducibility. Key variables include:

- Tissue Fixation: Delay, duration, and type of fixative (e.g., 10% NBF) must be standardized.

- Tissue Processing: Time in dehydrating alcohols and clearing agents.

- Embedding: Orientation and paraffin block temperature.

- Sectioning: Section thickness (typically 4-5 µm) and water bath temperature.

- Antigen Retrieval: Method (heat-induced vs. enzymatic), pH of buffer, and retrieval time.

Q2: How can we minimize inter-observer scoring variability in a multi-site study? A: Implement a standardized scoring protocol:

- Use validated, continuous digital image analysis (DIA) where possible.

- If manual scoring is required, employ a semi-quantitative method (e.g., H-score) with defined thresholds.

- Require all scorers from all sites to undergo centralized, rigorous training using a shared set of reference slides.

- Perform regular concordance testing (e.g., Cohen's kappa) between scorers and sites.
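
The concordance test mentioned above can be sketched in a few lines. This is a minimal illustration of unweighted Cohen's kappa for two scorers making categorical calls on the same slides; the function name and the example calls are hypothetical, and a study would normally use a validated statistics package rather than this hand-rolled version.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same slides."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of slides")
    n = len(rater_a)
    # Observed agreement: fraction of slides with identical calls.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal category frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical category throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical positive/negative calls from two sites on ten reference slides.
site_1 = ["pos", "pos", "neg", "neg", "pos", "neg", "pos", "neg", "pos", "neg"]
site_2 = ["pos", "pos", "neg", "neg", "pos", "neg", "neg", "neg", "pos", "neg"]
print(round(cohens_kappa(site_1, site_2), 2))
```

For ordinal categories (0, 1+, 2+, 3+), a weighted kappa is usually more appropriate because it penalizes a 0 vs. 3+ disagreement more than a 1+ vs. 2+ disagreement.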

Q3: Our positive controls are inconsistent. What steps should we take? A: Inconsistent controls indicate a lack of assay robustness. Troubleshoot using this hierarchy:

- Verify reagent integrity: Check antibody and detection kit expiry dates. Use fresh, aliquoted buffers.

- Check equipment calibration: Validate oven/water bath/steamer temperature and pressure cooker pressure.

- Optimize antibody titration: Re-titrate the primary antibody on a known positive control tissue using a checkerboard dilution series.

- Standardize the detection system: Ensure consistent incubation times and temperatures for all detection steps.

Q4: What is the best way to validate an antibody for a multi-site reproducibility study? A: Follow an orthogonal validation framework:

- Confirm specificity: Use siRNA/CRISPR knockout cell lines or genetically modified tissue as a negative control.

- Verify expected localization: Compare staining pattern to published data and molecular databases.

- Assess precision: Perform intra-run, inter-run, inter-operator, and inter-site precision studies.

- Correlate with another method: Where possible, correlate IHC results with an orthogonal method (e.g., mRNA in situ hybridization, Western blot on lysates from the same tissue type).

Troubleshooting Guide: Common IHC Staining Issues

| Problem | Possible Causes | Recommended Action |

|---|---|---|

| Weak or No Staining | Depleted antibody, incorrect retrieval, inactive detection system, over-fixation. | Run a multi-tissue control block. Increase primary antibody concentration; optimize retrieval pH/time. Test detection system with a known robust antibody. |

| High Background | Non-specific binding, over-concentrated antibody, inadequate blocking, drying of sections. | Titrate primary antibody. Extend serum/protein block step. Ensure sections are never allowed to dry post-retrieval. Include relevant isotype control. |

| Non-Specific Nuclear Staining | Often due to over-retrieval or endogenous biotin (in older systems). | Reduce retrieval time. Use a polymer-based, biotin-free detection system. |

| Patchy/Uneven Staining | Inconsistent contact during incubation, bubbles under coverslip, uneven heating during retrieval. | Use automated staining if available. Ensure coverslips are properly applied. Check water bath/steamer for hot spots. |

| Inter-Site Result Discrepancy | Uncalibrated equipment, different reagent lots, subjective scoring. | Implement a Site Qualification Protocol (see below). Centralize critical reagents. Use digital image analysis with a shared algorithm. |

Table 1: Impact of Pre-Analytical Variables on IHC Staining Intensity (H-Score)

| Variable | Standardized Protocol | Non-Standardized Range | % Coefficient of Variation (CV) |

|---|---|---|---|

| Fixation Time (10% NBF) | 18-24 hours | 6 hours - 72 hours | 35-45% |

| Section Thickness | 4 µm | 3 - 6 µm | 22% |

| Antigen Retrieval pH | pH 6.0 | pH 6.0 - pH 9.0 | 50-60% |

| Primary Antibody Incubation | 32 min (automated) | 30 min - overnight (manual) | 25% |

Table 2: Inter-Site Reproducibility Metrics for a Validated PD-L1 IHC Assay

| Performance Metric | Target | Observed Result (n=5 sites) |

|---|---|---|

| Inter-Site Concordance (Positive vs. Negative) | >90% | 98% |

| Intra-Site Precision (CV of H-Score) | <15% | 8% |

| Inter-Site Precision (CV of H-Score) | <20% | 12% |

| Inter-Observer Scoring Agreement (Kappa) | >0.80 | 0.89 |

Experimental Protocols

Protocol 1: Site Qualification for Multi-Center IHC Studies

Purpose: To ensure all participating laboratories achieve equivalent staining results before study initiation. Materials: Centralized kit of validated reagents (antibody, detection kit, buffers), multi-tissue microarray (TMA) control slide. Method:

- Pre-Study Run: All sites process the same TMA slide using the locked, detailed protocol.

- Digital Upload: Sites digitize slides using a calibrated scanner at 20x magnification.

- Central Analysis: A lead site analyzes all digital images using a pre-defined DIA algorithm.

- Acceptance Criteria: Staining intensity (H-score) for each core must fall within ±15% of the lead site's mean. Positive/negative calls must be 100% concordant.

- Remediation: Sites outside criteria repeat training and the qualification run.
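
The acceptance criteria in this protocol can be expressed as a simple check. The sketch below is illustrative only: the function name, the data layout (dictionaries keyed by TMA core ID), and the example values are assumptions, and it applies the ±15% intensity tolerance only to cores the lead site calls positive, since a relative tolerance is undefined for a reference H-score of 0.

```python
def qualify_site(site_scores, lead_scores, site_calls, lead_calls, tolerance=0.15):
    """Return (passed, failures) for one site against the lead-site reference.

    site_scores / lead_scores: dict of core ID -> H-score
    site_calls / lead_calls:   dict of core ID -> "pos" or "neg"
    """
    failures = []
    for core, lead_call in lead_calls.items():
        # Positive/negative calls must be 100% concordant.
        if site_calls[core] != lead_call:
            failures.append((core, "call mismatch"))
        # Assumption: the relative H-score tolerance is only checked on positive cores.
        if lead_call == "pos":
            reference = lead_scores[core]
            if abs(site_scores[core] - reference) > tolerance * reference:
                failures.append((core, "H-score out of tolerance"))
    return (not failures, failures)


# Hypothetical three-core TMA: one negative core and two positive cores.
lead_scores = {"A1": 0, "A2": 100, "A3": 200}
lead_calls = {"A1": "neg", "A2": "pos", "A3": "pos"}
site_b_scores = {"A1": 5, "A2": 110, "A3": 240}
site_b_calls = {"A1": "neg", "A2": "pos", "A3": "pos"}

passed, failures = qualify_site(site_b_scores, lead_scores, site_b_calls, lead_calls)
print(passed, failures)
```

Returning the list of failing cores, not just a pass/fail flag, gives the site something concrete to investigate before repeating the qualification run.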

Protocol 2: Checkerboard Antibody Titration for Optimization

Purpose: To empirically determine the optimal primary antibody concentration and retrieval conditions. Materials: Positive control tissue, primary antibody, range of retrieval buffers (pH 6-10). Method:

- Cut serial sections from the control block.

- Perform antigen retrieval using three different buffer pH levels (e.g., 6, 8, 9) on separate sections.

- For each retrieval condition, apply a series of primary antibody dilutions (e.g., 1:50, 1:100, 1:200, 1:500, 1:1000).

- Complete staining with standardized detection and visualization.

- Select the condition (pH + dilution) that yields the strongest specific signal with the lowest background, and lock it as the working condition for the validated assay.
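
One way to make the final selection step less subjective is to rank the checkerboard conditions by a signal-to-background ratio. The sketch below assumes each condition has already been quantified (for example as mean DAB optical density in positive and negative regions); the function name, the ratio criterion, and the readings are illustrative assumptions, not part of the protocol itself.

```python
def best_condition(readings):
    """Pick the (pH, dilution) pair with the highest signal-to-background ratio.

    readings: dict of (retrieval_pH, dilution_factor) -> (specific_signal, background)
    """
    def signal_to_background(item):
        signal, background = item[1]
        # Guard against division by zero for perfectly clean backgrounds.
        return signal / max(background, 1e-6)

    return max(readings.items(), key=signal_to_background)[0]


# Hypothetical optical-density readings from a small checkerboard.
readings = {
    (6.0, 100): (0.40, 0.10),
    (9.0, 100): (0.80, 0.20),
    (9.0, 200): (0.70, 0.05),
}
print(best_condition(readings))
```

A ratio alone can favor very faint but clean staining, so the chosen condition should still be confirmed visually against the control tissue.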

Diagrams

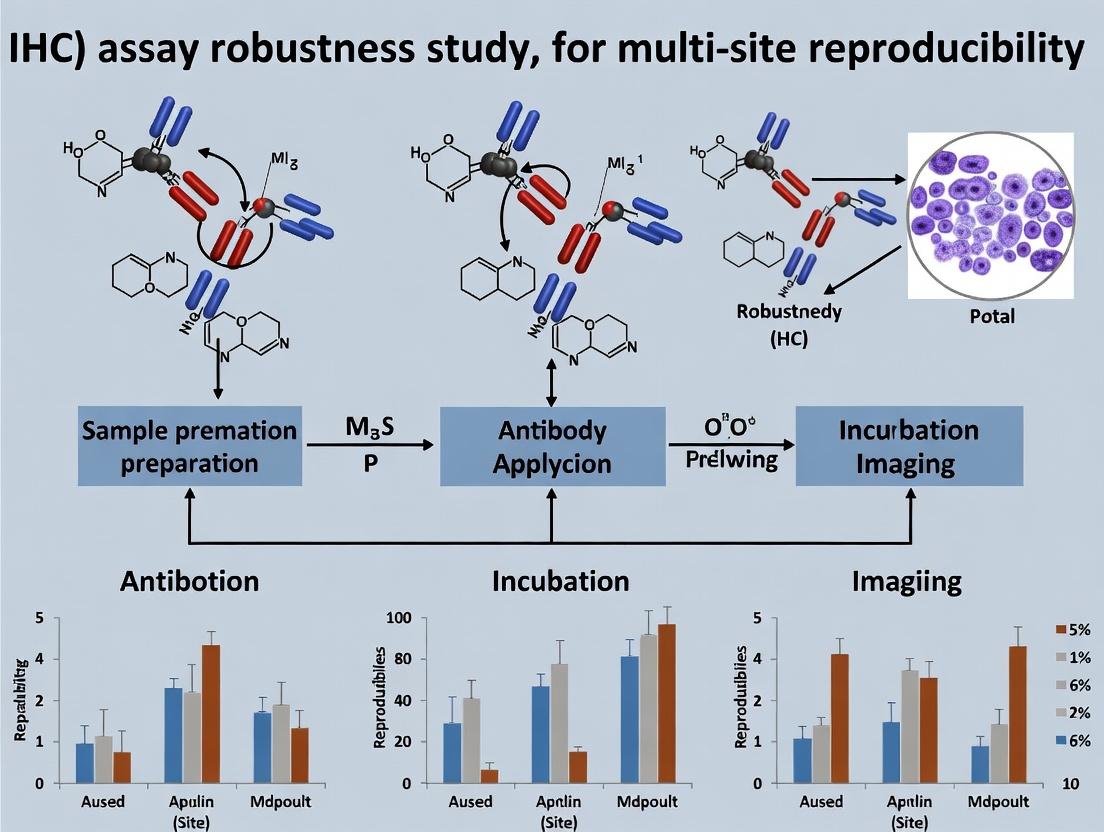

Diagram Title: The Three Pillars of IHC Robustness

Diagram Title: Multi-Site IHC Reproducibility Study Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function & Importance for Robustness |

|---|---|

| Validated Primary Antibody | The core reagent. Must be specific, sensitive, and tested for IHC on fixed tissue. Use clones with known performance data. |

| Polymer-Based Detection System | Provides high sensitivity and low background. Eliminates endogenous biotin interference common in avidin-biotin systems. |

| pH-Calibrated Antigen Retrieval Buffers | Critical for epitope exposure. Using a consistent, precisely formulated buffer (e.g., citrate pH 6.0, Tris-EDTA pH 9.0) is essential. |

| Multi-Tissue Control Block (MTCB) | Contains cell lines or tissues with known expression levels (negative, weak, moderate, strong). Run on every slide/batch for run-to-run monitoring. |

| Automated Staining Platform | Dramatically improves reproducibility by standardizing incubation times, temperatures, and wash volumes across runs and operators. |

| Digital Slide Scanner & Analysis Software | Enables objective, quantitative assessment of staining (H-score, % positive cells). Facilitates remote review and inter-site comparison. |

| Isotype Control Antibody | Distinguishes specific signal from non-specific background staining. Crucial for assay development and validation. |

Technical Support Center: Troubleshooting IHC Reproducibility

FAQs & Troubleshooting Guides

Q1: Our multi-site trial shows high inter-site variance in HER2 IHC H-scores for the same patient samples. What are the most likely pre-analytical variables? A: Pre-analytical variables are a primary source of irreproducibility. Key factors include:

- Fixation Delay & Duration: Time from biopsy to fixation and total fixation time in 10% Neutral Buffered Formalin must be standardized.

- Fixation Type: Inconsistent use of NBF vs. other fixatives alters epitope availability.

- Ischemic Time: Warm vs. cold ischemia time affects protein degradation.

- Sample Size: Thickness of tissue impacts formalin penetration.

Table 1: Impact of Pre-Analytical Variables on IHC Results

| Variable | Recommended Standard | Effect of Deviation | Typical Impact on H-Score Variance |

|---|---|---|---|

| Fixation Delay | < 1 hour | Antigen degradation/modification | Increase of 15-25% |

| Fixation Duration | 6-72 hours (NBF) | Under-fixation or over-masking | Increase of 20-40% |

| Tissue Thickness | 3-5 mm | Incomplete fixation center | Increase of 25-35% |

| Ischemic Time | Minimize (< 60 min) | Hypoxia-induced changes | Increase of 10-20% |

Protocol: Standardized Pre-Analytical Processing for Multi-Site Studies

- Biopsy Collection: Use a calibrated punch/needle. Immediately place specimen in pre-labeled container.

- Fixation: Immerse in pre-validated 10% NBF at a volume at least 10 times that of the tissue, within 60 minutes of excision.

- Fixation Duration: Maintain fixation at room temperature (18-25°C) for 18-24 hours.

- Processing: Use a validated, automated tissue processor with identical dehydration and paraffin-embedding protocols across all sites.

- Sectioning: Cut sections at 4-5 µm thickness using microtomes with freshly replaced blades. Float sections in a 40°C water bath.

- Slide Storage: Store unstained slides at 4°C in a desiccated environment for ≤ 4 weeks before staining.

Q2: During assay validation, our positive controls stain weakly while negative controls show high background. How do we troubleshoot the staining protocol itself? A: This indicates an issue with antigen retrieval and/or detection system conditions.

Table 2: Troubleshooting Staining Protocol Issues

| Symptom | Possible Cause | Corrective Action |

|---|---|---|

| Weak positive control | Suboptimal antigen retrieval | Optimize retrieval time/pH; validate with a range of retrieval buffers (e.g., citrate pH 6.0, Tris-EDTA pH 9.0). |

| High background | Over-retrieval, excessive primary Ab concentration, or inadequate blocking | Titrate primary antibody; implement protein block (e.g., 5% normal serum, casein); optimize retrieval time. |

| Inconsistent staining | Manual staining variability, reagent depletion | Automate staining steps using a validated platform; establish strict reagent lot tracking and changeover protocols. |

| High inter-slide variance | Uneven heating during retrieval, inconsistent washing | Use a calibrated, water bath-based or pressurized retrieval system; implement automated slide washers. |

Protocol: Optimized Antigen Retrieval & Staining Workflow

- Deparaffinization: Standard xylene and ethanol series.

- Antigen Retrieval: Use a pressure cooker or commercial decloaking chamber with 1X Tris-EDTA, pH 9.0, at 95-100°C for 20 minutes. Cool for 30 minutes at room temperature.

- Peroxidase Block: 3% H₂O₂ for 10 minutes.

- Protein Block: Apply 2.5% normal horse serum for 20 minutes.

- Primary Antibody: Apply validated, pre-diluted monoclonal antibody (e.g., anti-PD-L1, clone 22C3) for 60 minutes at room temperature.

- Detection: Use a polymer-based detection system (e.g., HRP-polymer) for 30 minutes.

- Chromogen: Apply DAB substrate for exactly 5 minutes. Monitor development microscopically.

- Counterstain: Hematoxylin for 30 seconds.

Q3: How do we standardize scoring across multiple pathologists to reduce observer bias? A: Implement digital pathology and AI-assisted quantitation with rigorous training.

Table 3: Methods to Reduce Scoring Variance

| Method | Description | Estimated Reduction in Inter-Observer Variance |

|---|---|---|

| Manual + Training | Use standardized scoring guides (e.g., ASCO/CAP) with joint training sessions. | 20-30% |

| Image Analysis Algorithms | Deploy validated digital algorithms for quantitation of % positivity, H-score, or combined positive score (CPS). | 50-70% |

| Continuous Scoring | Replace categorical scores (0, 1+, 2+, 3+) with continuous metrics (e.g., H-score: 0-300). | 30-40% |

Protocol: Digital Image Analysis for PD-L1 CPS Quantification

- Slide Digitization: Scan slides at 20X magnification using a validated whole slide scanner.

- Region of Interest (ROI) Annotation: A certified pathologist digitally annotates viable tumor areas.

- Algorithm Application: Apply a validated algorithm to:

  - Segment tumor cells and associated inflammatory cells.

  - Detect membranous (and/or cytoplasmic) DAB signal.

  - Calculate CPS = (Number of PD-L1 staining cells (tumor cells, lymphocytes, macrophages) / Total number of viable tumor cells) × 100.

- Review & Sign-off: Pathologist reviews algorithm output and approves the final score.
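
The two scores used throughout this guide reduce to short formulas once cell counts are available. The sketch below assumes the image analysis step has already produced the counts; the function and parameter names are hypothetical. The H-score is the weighted sum 1 × %weak + 2 × %moderate + 3 × %strong (range 0-300), and CPS is conventionally capped at 100.

```python
def h_score(pct_weak, pct_moderate, pct_strong):
    """H-score from the percentage of cells at each staining intensity (0-300)."""
    percentages = (pct_weak, pct_moderate, pct_strong)
    if min(percentages) < 0 or sum(percentages) > 100:
        raise ValueError("Percentages must be non-negative and sum to at most 100")
    return 1 * pct_weak + 2 * pct_moderate + 3 * pct_strong


def combined_positive_score(pos_tumor, pos_lymphocytes, pos_macrophages, viable_tumor_cells):
    """CPS: PD-L1 staining cells per 100 viable tumor cells, capped at 100."""
    if viable_tumor_cells <= 0:
        raise ValueError("At least one viable tumor cell is required")
    raw = 100.0 * (pos_tumor + pos_lymphocytes + pos_macrophages) / viable_tumor_cells
    return min(raw, 100.0)


# Hypothetical counts from one annotated region of interest.
print(h_score(20, 30, 10))
print(combined_positive_score(30, 15, 5, 200))
```

Because the CPS denominator counts only viable tumor cells while the numerator also includes immune cells, the raw ratio can exceed 100, which is why the cap is applied.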

Signaling Pathway & Experimental Workflow Diagrams

Title: Variables Affecting IHC Reproducibility Across Phases

Title: Workflow for Robust Multi-Site IHC

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Materials for Reproducible IHC Assays

| Item | Function & Importance for Reproducibility |

|---|---|

| Validated Primary Antibody Clone | Specific clone (e.g., HER2 clone 4B5, PD-L1 clone 22C3) is critical. Use same clone, lot, and vendor across sites. Defines assay specificity. |

| Isotype-Matched Control IgG | Negative control to distinguish non-specific background from specific signal. Must match host species and isotype of primary antibody. |

| Polymer-Based Detection System | Amplifies signal with high sensitivity and low background. More reproducible than older avidin-biotin systems. |

| Buffered Antigen Retrieval Solution | Standardizes epitope recovery. Citrate (pH 6.0) or Tris-EDTA (pH 9.0) buffer choice is target-dependent. |

| Automated IHC Stainer | Eliminates manual timing and application inconsistencies. Essential for multi-site trials. |

| Whole Slide Scanner | Digitizes slides for archiving, remote review, and application of digital image analysis algorithms. |

| Image Analysis Software | Provides objective, quantitative metrics (H-score, CPS, % positivity), reducing observer bias. |

| Multitissue Control Block | Contains cell lines/tissues with known expression levels (negative, low, high) for run-to-run validation. |

Troubleshooting Guides & FAQs

Pre-Analytical Phase FAQ

Q1: Our IHC staining intensity varies significantly between sites, even with the same tissue type. What pre-analytical factors should we investigate first? A: The most common pre-analytical culprits are tissue fixation and ischemia time. Standardize cold ischemia time to ≤60 minutes and fix in 10% Neutral Buffered Formalin for 24-48 hours (depending on tissue thickness). Use a fixation timer and log deviations.

Q2: How can we ensure consistent antigen retrieval across multiple laboratories? A: Variability often stems from pH drift of retrieval buffers and inconsistent heating methods. Implement a protocol using a pressure cooker or decloaking chamber at 121°C for 15 minutes. Use a validated, pre-mixed citrate (pH 6.0) or EDTA (pH 9.0) buffer with a strict shelf-life, and check the pH before each use.

Analytical Phase FAQ

Q3: Our automated stainers show declining signal over time despite using the same protocol. What is the likely cause? A: This typically indicates reagent degradation or instrument calibration drift. First, check the primary antibody dilution stability (often ≤1 week at 4°C). Then, validate the detection system (polymer-HRP) activity using a control slide. Ensure the stainer's liquid dispense volumes are calibrated quarterly.

Q4: How do we troubleshoot high background or non-specific staining? A: High background often results from inadequate blocking or over-fixation. Implement a two-step block: 3% H₂O₂ for endogenous peroxidase, followed by 5% normal serum from the host species of the secondary antibody for 30 minutes. If the issue persists, titrate the primary antibody concentration using a multi-tiered dilution series.

Post-Analytical Phase FAQ

Q5: Scoring discrepancies between pathologists are affecting our study's reproducibility. How can we mitigate this? A: This is a major post-analytical challenge. Implement a mandatory digital pathology training session using a consensus slide set (≥20 images). Utilize standardized scoring algorithms (e.g., H-score, Allred score) and require a minimum inter-rater reliability score (Cohen's kappa >0.7) before study initiation.

Q6: Our digital image analysis yields different results from the same slide when scanned on different days. A: This indicates variability in whole slide image scanner calibration or analysis settings. Calibrate the scanner's light source and camera monthly. For analysis, lock all software parameters (e.g., color threshold, tissue detection sensitivity) and re-validate using the same control slide for every batch scan.

Table 1: Impact of Pre-Analytical Variables on IHC Signal Integrity

| Variable | Acceptable Range | % Signal Loss Outside Range | Recommended Mitigation |

|---|---|---|---|

| Cold Ischemia Time | ≤ 60 min | 15-40% per hour delay | Use cold transport media, strict SOP timing |

| Fixation Time | 18-48 hrs (depends on tissue) | Up to 60% loss | Implement fixation timer alarms |

| Fixative Type | 10% NBF only | N/A | Centralized procurement of fixative |

| Tissue Processing Temp | Ambient (22-25°C) | Variable | Use monitored, calibrated processors |

Table 2: Analytical Phase Reagent Stability & Performance

| Reagent | Optimal Storage | Max Stable Duration After Prep | Key Performance Check |

|---|---|---|---|

| Primary Antibody (diluted) | 4°C, aliquoted | 7 days | Titration curve every new lot |

| Polymer-Based Detection System | 4°C, original bottle | Until expiry | Positive control slide with each run |

| Chromogen (DAB) | Protect from light, 4°C | 24 hrs after prep | Monitor for precipitate formation |

| Antigen Retrieval Buffer (pH 9.0) | RT, sealed | 1 month | Measure pH before each use (target pH ±0.2) |

Experimental Protocols

Protocol 1: Systematic Titration of Primary Antibody for Multi-Site Standardization

Purpose: To determine the optimal concentration of a primary antibody that provides maximum specific signal with minimum background across multiple assay sites.

- Slide Preparation: Use a standardized multi-tissue microarray (TMA) containing known positive and negative controls.

- Antibody Dilutions: Prepare a 2-fold serial dilution series of the primary antibody (e.g., from 1:50 to 1:1600) in the validated antibody diluent.

- Staining: Process slides on the same automated stainer platform using identical retrieval, blocking, detection, and chromogen steps.

- Analysis: Perform digital image analysis on each TMA core to quantify stain intensity (DAB optical density) and percentage of positive cells.

- Optimal Concentration Selection: Plot staining intensity vs. dilution. Select the concentration at the plateau phase (highest signal before background increase) for the formal protocol.
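
The dilution series and the selection rule can be sketched as follows. This is an illustration under stated assumptions: the function names, the background ceiling, and the optical-density values are hypothetical, and "highest signal before background increase" is interpreted here as the strongest signal among dilutions whose background stays at or below a chosen ceiling.

```python
def twofold_dilution_series(start=50, end=1600):
    """Dilution factors for a 2-fold series, e.g. 1:50, 1:100, ... 1:1600."""
    series = []
    factor = start
    while factor <= end:
        series.append(factor)
        factor *= 2
    return series


def select_dilution(signal, background, max_background):
    """Highest-signal dilution among those with acceptable background.

    signal / background: dict of dilution factor -> mean DAB optical density
    max_background: assumed ceiling for acceptable background staining
    """
    eligible = [d for d in signal if background[d] <= max_background]
    if not eligible:
        raise ValueError("No dilution meets the background ceiling")
    return max(eligible, key=lambda d: signal[d])


dilutions = twofold_dilution_series()
print(dilutions)

# Hypothetical readings on the positive and negative TMA cores.
signal = {50: 0.92, 100: 0.90, 200: 0.88, 400: 0.70, 800: 0.45, 1600: 0.20}
background = {50: 0.30, 100: 0.18, 200: 0.06, 400: 0.05, 800: 0.04, 1600: 0.04}
print(select_dilution(signal, background, max_background=0.10))
```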

Protocol 2: Inter-Site Reproducibility Validation Run

Purpose: To qualify multiple testing sites prior to initiating a multi-center IHC study.

- Central Kit Preparation: Prepare and aliquot all critical reagents (primary antibody dilution, detection system, chromogen, retrieval buffer) from a single lot at a central lab. Ship frozen/aliquoted alongside a set of pre-cut TMA slides.

- Synchronized Staining: All sites run the identical protocol on the same calibrated instrument platform on a predefined date.

- Data Return & Analysis: Sites return scanned images and raw data files to the central lab.

- Statistical Analysis: Central lab calculates the coefficient of variation (%CV) for staining intensity (H-score) across all sites. A pass/fail criterion is set (e.g., %CV < 20% for the positive control tissue).
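
The pass/fail calculation in the last step is straightforward to script. The sketch below uses the sample standard deviation from Python's standard library; the site names and H-scores are hypothetical, and the 20% threshold is the example criterion from the protocol.

```python
from statistics import mean, stdev


def percent_cv(values):
    """Coefficient of variation (%): sample standard deviation / mean x 100."""
    average = mean(values)
    if average == 0:
        raise ValueError("%CV is undefined when the mean is zero")
    return stdev(values) / average * 100.0


# Hypothetical H-scores for the positive control tissue at five sites.
site_h_scores = {"site_1": 180, "site_2": 195, "site_3": 170, "site_4": 205, "site_5": 190}

cv = percent_cv(list(site_h_scores.values()))
passed = cv < 20.0  # example criterion from the protocol: %CV < 20%
print(f"Inter-site %CV = {cv:.1f}%, pass = {passed}")
```

%CV becomes unstable for low-expressing or negative tissues because the mean approaches zero, which is one reason the criterion is stated for the positive control tissue.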

Visualizations

Diagram Title: IHC Variability Sources and Their Impact

Diagram Title: IHC Multi-Site Standardization Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents for Robust IHC Assays

| Item | Function & Rationale | Key Selection Criteria |

|---|---|---|

| Validated Primary Antibody (Clone) | Binds specifically to the target antigen. The primary source of specificity. | Monoclonal clones preferred for reproducibility. Vendor-provided validation data (IHC-P on human FFPE). |

| Polymer-Based Detection System | Amplifies the primary antibody signal with high sensitivity and low background. | Species-specific (anti-mouse/rabbit). Low lot-to-lot variability. Compatible with your automation. |

| Standardized Chromogen (DAB) | Produces a stable, insoluble brown precipitate at the antigen site for visualization. | Ready-to-use liquid formulations. Consistent particle size to prevent granularity. |

| pH-Stable Antigen Retrieval Buffer | Reverses formaldehyde cross-links to re-expose epitopes. Critical for consistency. | Certified pH (6.0 Citrate or 9.0 EDTA/Tris). Pre-mixed, low lot-to-lot variability. |

| IHC-Grade Blocking Serum | Reduces non-specific binding of detection antibodies to tissue, minimizing background. | Normal serum from the species in which the secondary antibody was raised. |

| Multi-Tissue Control Microarray (TMA) | Contains known positive, negative, and gradient-expressing tissues for run validation. | Must include the relevant tissue types for your study. Commercially sourced or custom-made. |

| Automated Stainer & Coverslipper | Provides precise, hands-off control over reagent incubation times, temperatures, and volumes. | Must allow full protocol parameter locking. Regular service calibration is mandatory. |

Troubleshooting Guides and FAQs for IHC Assay Multi-Center Reproducibility

This technical support center addresses common challenges in multi-center immunohistochemistry (IHC) studies, with the goal of achieving robust, reproducible IHC assay results across multiple research sites.

FAQ 1: Why do we observe significant inter-site staining intensity variation for the same biomarker using the same protocol?

Answer: This is frequently caused by drift in pre-analytical variables. A 2023 multi-site ring study demonstrated that fixation times ranging from 6 to 48 hours for the same tissue type led to a 40% difference in H-Score quantification. Key factors are:

- Fixation Delay & Time: Uncontrolled ischemia time and variable fixation duration.

- Antibody Lot Inconsistency: New antibody lots may have different affinity without proper re-validation.

- Retrieval Conditions: Subtle differences in pH, temperature, or incubation time in antigen retrieval buffers.

- Instrument Calibration: Daily variances in automated stainers' dispense volumes or incubation temperatures.

Experimental Protocol for Inter-Site Validation:

- Central Tissue Control Block Creation: A single reference tissue microarray (TMA) containing cell lines with known biomarker expression levels (negative, low, medium, high) is constructed at a central site.

- Parallel Processing: Identical TMA sections are distributed to all participating sites.

- Staining Run: All sites run the IHC assay within a 48-hour window using the same protocol, reagent lots (where possible), and a detailed instrument setup sheet.

- Digital Slide Scanning & Centralized Analysis: Slides are scanned at 20x magnification and uploaded to a centralized digital pathology platform. Quantitative analysis (e.g., H-Score, % positivity) is performed by a single analyst using a single image analysis algorithm.

- Statistical Comparison: Concordance is measured via intraclass correlation coefficient (ICC), with a target ICC > 0.85 for biomarker positivity scores.

Table 1: Common Pre-Analytical Variables and Their Impact on IHC Quantification

| Variable | Typical Range in Failed Studies | Recommended Control | Observed Impact on Score (vs. Baseline) |

|---|---|---|---|

| Cold Ischemia Time | 30 min - 24 hours | ≤ 60 minutes | Up to 35% loss in phospho-epitopes |

| Formalin Fixation Time | 6 hours - 7 days | 18-24 hours for biopsies | 40% variance in nuclear targets |

| Antibody Clone | Different clones used | Standardize clone and vendor | Incomparable results, failure rates up to 100% |

| Antibody Lot | Unrecorded lot changes | Validate new lots vs. old | 20-30% shift in dynamic range |

| Antigen Retrieval pH | pH 6.0 - 10.0 uncontrolled | Standardize buffer pH (±0.2) | Loss of signal or high background |

FAQ 2: How can we troubleshoot a study where one site consistently reports non-specific background staining?

Answer: Systematic background at one site often points to reagent or instrument issues. Follow this diagnostic tree:

Protocol for Background Investigation:

- Reagent Swap Test: Ship aliquots of primary antibody, detection kit, and DAB from the central coordinating site to the affected site. Repeat the assay using these central reagents.

- Water Quality Check: Test the site's water used for buffer preparation with a conductivity/resistivity meter. Require reagent-grade water: CLSI Clinical Laboratory Reagent Water (resistivity ≥ 10 MΩ·cm at 25°C) or Type I water (≥ 18 MΩ·cm).

- Instrument Blanks: Run the assay without primary antibody on the site's stainer. High background indicates a contaminated detection system or carryover in the liquid handling lines.
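
For the water quality check, note that meters often report conductivity (µS/cm) while water specifications are usually written as resistivity (MΩ·cm); the two are reciprocals. The helper below is a small sketch of that conversion. The function names are hypothetical, and the threshold passed in the example is a placeholder: use the value your study's water specification requires.

```python
def resistivity_mohm_cm(conductivity_us_cm):
    """Convert conductivity in µS/cm to resistivity in MΩ·cm (simple reciprocal)."""
    if conductivity_us_cm <= 0:
        raise ValueError("Conductivity must be positive")
    return 1.0 / conductivity_us_cm


def water_passes(conductivity_us_cm, min_resistivity_mohm_cm):
    """True if the measured water meets the required minimum resistivity."""
    return resistivity_mohm_cm(conductivity_us_cm) >= min_resistivity_mohm_cm


# Hypothetical meter readings; the 10.0 MΩ·cm threshold is an example value only.
print(resistivity_mohm_cm(0.1))
print(water_passes(0.055, min_resistivity_mohm_cm=10.0))
print(water_passes(2.0, min_resistivity_mohm_cm=10.0))
```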

FAQ 3: What is the most effective way to align digital pathology analysis settings across multiple centers to prevent scoring divergence?

Answer: Divergence stems from unstandardized image analysis algorithms and training. A 2024 review of failed studies showed that without standardization, algorithm threshold variance alone caused a 25-point median H-Score discrepancy.

Protocol for Analysis Harmonization:

- Algorithm Locking: Use a single vendor's software and a locked analysis algorithm (e.g., a defined .dsp file for Visiopharm, or a packaged workflow for HALO).

- Training with Gold Standards: Create a "gold standard" set of 20-30 annotated image regions (from the control TMA) for each biomarker. These should be annotated by a panel of 3 expert pathologists.

- Algorithm Training & Validation: Train the image analysis algorithm on 70% of the gold standard regions. Validate its performance on the remaining 30%. Target a correlation of R² > 0.95 against the expert consensus manual scores.

- Continuous Monitoring: Implement a quarterly proficiency testing where all analysts score the same set of 10 new fields. Calculate the ICC to ensure ongoing concordance.
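
The 70/30 split and the R² check can be sketched with the standard library alone. The code below is illustrative: the function names, region IDs, seed, and scores are hypothetical, and R² is computed here as the squared Pearson correlation between algorithm scores and expert consensus scores on the held-out regions.

```python
import random


def split_regions(region_ids, train_fraction=0.7, seed=0):
    """Reproducibly split gold-standard regions into training and validation sets."""
    ids = list(region_ids)
    random.Random(seed).shuffle(ids)  # fixed seed so every site gets the same split
    cut = round(len(ids) * train_fraction)
    return ids[:cut], ids[cut:]


def r_squared(x, y):
    """Squared Pearson correlation between two equally long score lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy ** 2 / (sxx * syy)


train, validation = split_regions([f"region_{i:02d}" for i in range(20)])
print(len(train), len(validation))

# Hypothetical H-scores on validation regions: expert consensus vs. algorithm.
consensus = [10, 50, 120, 200, 260, 90]
algorithm = [12, 48, 125, 195, 255, 95]
print(r_squared(consensus, algorithm) > 0.95)
```

A high R² shows that the algorithm tracks the consensus scores but does not rule out a systematic offset, so it is worth pairing with an agreement measure such as the ICC used in the continuous monitoring step.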

Table 2: Key Reagent Solutions for Robust Multi-Center IHC

| Research Reagent / Material | Function in Multi-Center Studies |

|---|---|

| Standardized Control TMA | Provides identical biological reference across all sites for run-to-run and site-to-site normalization. |

| Validated Antibody Master Lot | A single, large lot of primary antibody aliquoted and distributed to all sites to eliminate lot-to-lot variance. |

| Automated Stainer with Calibrated Dispensers | Ensures precise reagent volumes and incubation times; requires regular calibration checks. |

| Phosphate-Buffered Saline (PBS) Concentrate | Centralized preparation and distribution of wash buffer concentrate eliminates on-site weighing/pH errors. |

| Digital Pathology Platform with Central Server | Enforces use of identical, locked image analysis algorithms and provides an audit trail for all scoring. |

| Barcoded Slide Labeling System | Prevents sample mix-ups and links slide ID directly to metadata in the Laboratory Information Management System (LIMS). |

Technical Support Center: FAQs & Troubleshooting for IHC Assay Validation

Q1: Our IHC assay shows high inter-operator variability at different sites. Which validation parameter from FDA/EMA guidelines should we prioritize to address this?

A: Precision (Repeatability & Reproducibility) is the critical parameter. Both FDA (Bioanalytical Method Validation Guidance, 2018) and EMA (Guideline on bioanalytical method validation, 2011/2015) emphasize assessing precision across multiple runs, days, operators, and sites. For IHC, the College of American Pathologists (CAP) recommends using a standardized scoring protocol and quantifying agreement with the Intraclass Correlation Coefficient (ICC) for continuous scores or Cohen's kappa (weighted, for ordinal categories) for categorical scores.

- Troubleshooting Protocol: Implement a "Precision Challenge" experiment.

  - Select 3-5 representative tissue blocks (including weak, moderate, strong expression).

  - Create serial sections and distribute to all participating sites/labs.

  - Using the identical validated protocol, each site processes and scores the slides.

  - A central panel of 3 trained pathologists also scores all slides digitally to generate a consensus reference score.

  - Analyze data using a Two-Way Mixed Effects Model ICC for absolute agreement (for continuous data like H-scores) or Fleiss' Kappa (for categorical data).
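
For continuous scores, the single-measure ICC for absolute agreement can be computed from two-way ANOVA mean squares. The sketch below is a plain-Python illustration with hypothetical data and function name; it uses the standard formula ICC(A,1) = (MSR − MSE) / (MSR + (k − 1)·MSE + (k/n)·(MSC − MSE)), where rows are slides and columns are sites or raters. A validated statistics package should be used for the actual study analysis.

```python
def icc_absolute_agreement(scores):
    """Single-measure, absolute-agreement ICC from a slides x raters table.

    scores: list of rows, one per slide; each row holds one score per site/rater.
    """
    n, k = len(scores), len(scores[0])
    if n < 2 or k < 2:
        raise ValueError("Need at least two slides and two raters")
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]

    # Two-way ANOVA sums of squares (no replication).
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    msr = ss_rows / (n - 1)               # between-slide mean square
    msc = ss_cols / (k - 1)               # between-rater mean square
    mse = ss_error / ((n - 1) * (k - 1))  # residual mean square
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)


# Hypothetical H-scores: three slides scored at two sites, site 2 reads 10 points higher.
offset_scores = [[10, 20], [50, 60], [200, 210]]
print(round(icc_absolute_agreement(offset_scores), 3))
```

The example shows why absolute agreement matters for multi-site work: a constant offset between sites leaves the ranking of slides intact but still lowers the ICC below 1.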

Q2: How do we set the acceptance criteria for accuracy/concordance when validating a new IHC assay against a known standard?

A: FDA/EMA guidelines require demonstration of accuracy, often through method comparison. CAP provides more specific guidance for IHC. Acceptance criteria are not universally fixed but must be justified.

Table 1: Recommended Acceptance Criteria for IHC Assay Validation

| Parameter | FDA/EMA General Recommendation | CAP/CLIA-based Benchmark for IHC | Common Justified Threshold for Multi-site Studies |

|---|---|---|---|

| Accuracy (Overall Percent Agreement vs. Reference) | Method-specific. Should be established. | ≥95% is often cited for clinical tests. | ≥90% for categorical (Positive/Negative). Must be pre-defined. |

| Inter-site Reproducibility (ICC) | Not specified for IHC. Emphasizes reproducibility. | ICC > 0.9 indicates excellent reliability. | ICC > 0.8 (Good agreement) is a common minimum for multi-site studies. |

| Pre-analytical Variable (e.g., Cold Ischemia Time) Impact | Must be investigated if it affects the analyte. | CAP requires monitoring of pre-analytical variables. | <10% deviation in mean score from baseline condition. |

Q3: Our positive controls are failing intermittently across sites. What steps should we take?

A: This indicates a critical failure in assay robustness. Follow this systematic guide:

- Check Reagent Stability & Lot Changes: Confirm all sites are using the same reagent lots. Validate new lots before implementation.

- Verify Equipment Calibration: Ensure automated stainers (if used) are calibrated per manufacturer specs. Check incubation temperatures and times.

- Troubleshoot Antigen Retrieval: This is the most common variable. Verify retrieval buffer pH, temperature consistency, and retrieval time. Use a control tissue microarray (TMA) containing variably expressed targets to distinguish between retrieval and detection issues.

- Review Scoring Criteria: Ensure all operators are using the same threshold for positivity. Consider transitioning to digital image analysis with a validated algorithm for objective quantification.

Key Experimental Protocol: Comprehensive IHC Assay Validation for Multi-site Reproducibility

Objective: To validate an IHC assay for a biomarker (e.g., PD-L1) according to regulatory guidelines, ensuring robustness and reproducibility across multiple laboratory sites.

Materials & Reagents:

- Validated Primary Antibody Clone (and known compatible retrieval method)

- Isotype Control (for specificity)

- Standardized Detection System (Polymer-based HRP/DAB recommended)

- Control Tissue Microarray (TMA): Contains cell line pellets or tissues with known negative, low, medium, and high expression.

- Whole Slide Scanner & Digital Image Analysis Software (for objective quantification)

Protocol:

- Define the Assay Context of Use: Clearly state if the assay is for companion diagnostics, patient selection, or exploratory research. This dictates validation stringency.

- Design the Validation Study:

- Analytical Specificity: Co-localization with another method (IF, RNAscope); adsorption block with target peptide.

- Analytical Sensitivity: Titrate antibody on TMA to determine optimal dilution (signal-to-noise ratio).

- Precision: Conduct a nested factorial experiment as described in FAQ1.

- Robustness: Deliberately introduce minor variations (e.g., ±5% retrieval time, ±1°C incubation temp) and measure impact on output.

- Stability: Assess antigen stability in cut slides over time (0, 1, 3, 6 months) under defined storage conditions.

- Centralized Analysis & Reporting: Collect all slides or digital images for centralized, blinded review. Calculate all validation parameters (ICC, % agreement, sensitivity, specificity) against the pre-defined acceptance criteria (Table 1).

- Documentation: Create a comprehensive validation report detailing protocols, raw data, analysis, and conclusions, ready for regulatory audit.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents & Materials for Robust IHC Validation

| Item | Function & Importance for Multi-site Studies |

|---|---|

| Certified Reference Standard TMA | Provides consistent positive/negative controls across all sites. Essential for monitoring assay performance and troubleshooting. |

| Validated, Clone-Specific Primary Antibody | Ensures specificity to the target epitope. Using the same clone and vendor is non-negotiable for reproducibility. |

| Standardized Detection Kit (Polymer-based) | Minimizes variability in signal amplification and visualization compared to manual ABC or PAP methods. |

| Automated Stainer with Protocol Lock | Ensures identical incubation times, temperatures, and wash volumes across runs and sites. Protocol locking prevents inadvertent changes. |

| Digital Pathology System | Enables whole-slide imaging, centralized blinded review, and application of standardized digital image analysis algorithms, removing scorer subjectivity. |

| Pre-analytical Control Samples | Tissues with documented cold ischemia and fixation times to monitor and control for pre-analytical variables. |

Visualization: IHC Validation Workflow & Decision Pathway

Figure: IHC Multi-Site Validation Workflow

Figure: IHC Validation Parameters & Measures

Building a Robust IHC Protocol: A Step-by-Step Methodological Framework

This technical support center addresses key challenges in implementing a Total Test Approach for immunohistochemistry (IHC) to ensure robustness in multi-site reproducibility research. The following FAQs, troubleshooting guides, and resources are framed within this critical context.

Frequently Asked Questions & Troubleshooting

Q1: In our multi-site study, we are observing significant inter-laboratory staining intensity variance for the same analyte despite using the same protocol. What are the primary pre-analytical factors we should audit?

A1: Pre-analytical variability is the most common source of inter-site discrepancy. Systematically check the following:

- Ischemic Time: Ensure consistent time from tissue resection to fixation across all sites (target: <30 minutes).

- Fixation: Standardize fixative type (10% Neutral Buffered Formalin), volume (10:1 ratio to tissue), temperature (room temperature), and duration. Under-fixation (<6 hours) causes poor morphology and antigen loss; over-fixation (>72 hours) can mask epitopes.

- Processing & Embedding: Protocols must be identical. Variable dehydration or paraffin infiltration temperatures affect antigenicity.

- Section Thickness: Mandate a uniform thickness (typically 4-5 µm) with calibrated microtomes. A 1-2 µm variation alters antigen density and staining intensity.

Q2: Our positive controls stain correctly, but the test tissue shows weak or no signal. What steps should we take?

A2: This indicates an issue specific to the test tissue or the antigen retrieval step.

- Verify Antigen Retrieval: Confirm the correct retrieval method (heat-induced epitope retrieval (HIER) vs. enzymatic) and solution (e.g., pH 6.0 citrate vs. pH 9.0 Tris-EDTA) is being used for your specific antibody. Re-optimize if necessary.

- Check Tissue Antigen Status: Validate that the test tissue is known to express the target. Use a confirmed positive tissue control.

- Review Primary Antibody: Confirm antibody specificity, optimal dilution, and incubation conditions. Perform a titration experiment on a control tissue.

Q3: We experience high non-specific background staining across all sites. How can we systematically reduce it?

A3: High background compromises assay specificity. Follow this checklist:

- Blocking: Ensure sufficient incubation time (20-30 min) with an appropriate protein block (e.g., normal serum, BSA, or casein).

- Antibody Dilution & Incubation: Over-concentrated primary antibody is a leading cause. Re-titrate. Ensure incubation is performed in a humidified chamber to prevent drying artifacts.

- Wash Stringency: Increase wash buffer (e.g., Tris-Buffered Saline with Tween 20 - TBST) volume, agitation, and number of changes (recommended: 3x5 min washes post each step).

- Detection System: Ensure the detection system (e.g., polymer-based) is compatible with the tissue type. Endogenous enzyme (peroxidase/alkaline phosphatase) activity must be quenched properly (e.g., with H₂O₂ or levamisole).

Q4: What quantitative metrics should we collect to objectively validate assay performance at each site?

A4: Move beyond subjective scoring. Implement digital pathology and quantifiable metrics as shown in the table below.

Table 1: Key Quantitative Metrics for IHC Assay Validation

| Metric | Description | Target for Validation | Typical Acceptance Range* |

|---|---|---|---|

| Positive Pixel Intensity | Average stain intensity in positive regions. | Consistent mean intensity across runs/sites. | Coefficient of Variation (CV) < 15% |

| Positive Area Percentage | % of annotated tissue area stained positive. | Consistent proportion in known control. | CV < 20% |

| Signal-to-Noise Ratio | Ratio of positive signal intensity to background. | High, consistent ratio indicating specificity. | > 3:1 |

| Staining Index | Composite of intensity and area. | Reproducible index score. | CV < 15% |

*Ranges are analyte-dependent and must be established during assay development.
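
The CV and signal-to-noise checks in Table 1 reduce to a few lines of arithmetic. This sketch assumes each site reports one summary value per metric for the same control and uses the sample standard deviation; the function names and default limits (taken from the table's typical ranges) are illustrative rather than prescriptive.

```python
from statistics import mean, stdev


def coefficient_of_variation(values):
    """Sample CV in percent: 100 * SD / mean."""
    return 100.0 * stdev(values) / mean(values)


def signal_to_noise(positive_intensity, background_intensity):
    """Ratio of positive signal intensity to background intensity."""
    return positive_intensity / background_intensity


def check_site_metrics(intensities, positive_areas, snr_values,
                       max_intensity_cv=15.0, max_area_cv=20.0, min_snr=3.0):
    """Evaluate per-site summary values against the example acceptance ranges."""
    return {
        "intensity_cv_ok": coefficient_of_variation(intensities) < max_intensity_cv,
        "area_cv_ok": coefficient_of_variation(positive_areas) < max_area_cv,
        "snr_ok": all(s > min_snr for s in snr_values),
    }


# Illustrative values for three sites staining the same control.
report = check_site_metrics([100, 110, 90], [40, 42, 38], [4.0, 5.2, 3.5])
```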

Detailed Experimental Protocol: IHC Assay Validation for Multi-Site Studies

Protocol Title: Optimized IHC Staining with Rigorous On-Slide Controls for Reproducibility.

Objective: To provide a step-by-step methodology for performing a validated, robust IHC assay suitable for deployment across multiple research sites.

Key Materials (The Scientist's Toolkit):

Table 2: Essential Research Reagent Solutions

| Item | Function |

|---|---|

| Validated Primary Antibody | Binds specifically to the target protein of interest. Must be clone-specific for IHC. |

| Polymer-based Detection System | Provides secondary antibody and enzyme (HRP/AP) conjugate in one step, amplifying signal with high sensitivity and low background. |

| Stable Chromogen (e.g., DAB) | Enzyme substrate that produces an insoluble, colored precipitate at the antigen site. |

| Automated Stainer | Provides precise, hands-off control of reagent incubation times, temperatures, and washes, critical for reproducibility. |

| Multitissue Control Block | Block containing multiple tissue types with known antigen expression levels, allowing validation of all assay steps on a single slide. |

| pH-calibrated Antigen Retrieval Buffer | Critical for unmasking epitopes cross-linked by fixation. pH (6.0 or 9.0) must be optimized per antibody. |

Workflow:

- Slide Baking & Deparaffinization: Bake slides at 60°C for 1 hour. Deparaffinize in xylene (3x5 min) and hydrate through graded alcohols (100%, 95%, 70%) to deionized water.

- Antigen Retrieval: Perform Heat-Induced Epitope Retrieval (HIER) in a decloaking chamber or pressure cooker using pre-optimized buffer (e.g., citrate pH 6.0) at 95-100°C for 20 minutes. Cool slides for 30 min at room temperature.

- Endogenous Peroxidase Blocking: Incubate with 3% hydrogen peroxide for 10 minutes to quench endogenous peroxidase activity. Wash with TBST (3x2 min).

- Protein Blocking: Apply a non-specific protein block (e.g., 5% normal serum) for 20 minutes at room temperature to minimize background. Do not wash; tap off excess.

- Primary Antibody Incubation: Apply validated primary antibody at the optimized dilution. Incubate for 60 minutes at room temperature or overnight at 4°C (as validated). Wash with TBST (3x5 min).

- Polymer Detection: Apply labeled polymer (e.g., HRP-polymer) for 30 minutes at room temperature. Wash with TBST (3x5 min).

- Chromogen Development: Apply chromogen substrate (e.g., DAB) for a fixed, predetermined time within the 5-10 minute range. Monitor development microscopically. Rinse slides in deionized water to stop the reaction.

- Counterstaining & Mounting: Counterstain with hematoxylin for 30-60 seconds, "blue" in tap water. Dehydrate, clear, and mount with a permanent mounting medium.

Diagram 1: IHC Total Test Workflow

Diagram 2: Key Variables for Multi-Site Reproducibility

Technical Support Center: Troubleshooting Guides & FAQs

This support center addresses common pre-analytical challenges impacting IHC assay robustness in multi-site reproducibility research. Consistent pre-analytical control is the foundation for reliable downstream biomarker data.

FAQ & Troubleshooting

Q1: Our IHC staining intensity varies significantly between sites using the same protocol. The primary pre-analytical variable appears to be fixation time. What is the optimal fixation time and how can we control it?

A: Inconsistent fixation is a leading cause of multi-site variability. Under-fixation leads to poor morphology and antigen loss, while over-fixation causes excessive cross-linking and antigen masking.

- Optimal Fixation: For 10% Neutral Buffered Formalin (NBF), the ideal fixation time is 18-24 hours for most tissues at room temperature (20-25°C). The fixative volume should be 15-20 times the tissue volume.

- Protocol: Implement a standardized fixation protocol: 1) Dissect tissue to thickness ≤ 3-4 mm. 2) Immerse immediately in sufficient pre-labeled NBF. 3) Record start time. 4) Fix for 24 hours (± 2 hours). 5) Transfer to 70% ethanol for storage/shipping. Do not exceed 36 hours total fixation.
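
Recorded start and end times make the fixation window easy to audit. The sketch below classifies a fixation interval against the 24 hour (± 2 hour) target and the 36 hour ceiling stated above; the function name, return format, and example timestamps are hypothetical.

```python
from datetime import datetime


def fixation_status(start, end, target_h=24.0, tolerance_h=2.0, max_h=36.0):
    """Classify a fixation interval against the SOP window.

    Returns (hours, status) where status is one of
    "within window", "outside window", or "exceeds maximum".
    """
    hours = (end - start).total_seconds() / 3600.0
    if hours > max_h:
        return hours, "exceeds maximum"
    if abs(hours - target_h) <= tolerance_h:
        return hours, "within window"
    return hours, "outside window"


# Illustrative record: tissue immersed at 09:00 and transferred at 10:30 the next day.
hours, status = fixation_status(datetime(2024, 1, 1, 9, 0), datetime(2024, 1, 2, 10, 30))
```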

Q2: We observe poor morphology and sectioning artifacts (crumbling, chatter) in paraffin blocks from multi-center studies. What are the critical steps in tissue processing?

A: This indicates suboptimal dehydration, clearing, or paraffin infiltration during processing.

- Root Cause: Incomplete removal of water (dehydration) or clearing agent residue impairs paraffin wax infiltration.

- Protocol: Use an automated tissue processor with the following standardized schedule:

- Fixation: 10% NBF, 1 hour.

- Dehydration: 70% Ethanol, 1 hour -> 80% Ethanol, 1 hour -> 95% Ethanol, 1 hour -> 100% Ethanol, 1 hour -> 100% Ethanol, 1 hour.

- Clearing: Xylene or substitute, 1 hour -> Xylene, 1 hour.

- Infiltration: Paraffin wax at 58-60°C, 1 hour -> Paraffin wax, 1.5 hours.

- Control: Process a control tissue of similar type and size with each run.
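
One way to keep the processing schedule identical across sites is to store it as data and compare each site's program against the reference. The structure below transcribes the schedule listed above; the variable and function names are hypothetical, and real processors export their programs in vendor-specific formats that would need mapping into this shape.

```python
# (stage, reagent, hours) in run order, transcribed from the schedule above.
PROCESSING_SCHEDULE = [
    ("fixation", "10% NBF", 1.0),
    ("dehydration", "70% ethanol", 1.0),
    ("dehydration", "80% ethanol", 1.0),
    ("dehydration", "95% ethanol", 1.0),
    ("dehydration", "100% ethanol", 1.0),
    ("dehydration", "100% ethanol", 1.0),
    ("clearing", "xylene or substitute", 1.0),
    ("clearing", "xylene", 1.0),
    ("infiltration", "paraffin wax, 58-60 C", 1.0),
    ("infiltration", "paraffin wax", 1.5),
]


def hours_by_stage(schedule):
    """Total programmed time per stage."""
    totals = {}
    for stage, _reagent, hours in schedule:
        totals[stage] = totals.get(stage, 0.0) + hours
    return totals


def schedule_matches(site_schedule, reference=PROCESSING_SCHEDULE):
    """True only if every step, reagent, and duration matches in order."""
    return list(site_schedule) == list(reference)


total_hours = sum(hours_by_stage(PROCESSING_SCHEDULE).values())
```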

Q3: During sectioning, tissues with varying densities (e.g., tumor and adjacent stroma) show differential wrinkling or tearing. How can we achieve uniform, high-quality sections?

A: This is often due to incorrect microtome setup, blade condition, or water bath parameters.

- Troubleshooting Steps:

- Blade: Use a new, sharp blade for every block or every 15-20 ribbons. Ensure it is properly seated.

- Block Temperature: Cool blocks on ice for 5-10 minutes before sectioning.

- Section Thickness: Set microtome to 4-5 µm. Rotate the wheel smoothly and at a consistent speed.

- Water Bath: Maintain bath at 42-45°C. Use distilled water. Flatten sections for ~10 seconds before fishing onto a slide.

- Slide Drying: Dry slides in a 37°C incubator for 1 hour, then overnight at 42°C on a slide warmer.

Table 1: Impact of Pre-Analytical Variables on IHC Signal Intensity (H-Score)

| Variable | Condition | Average H-Score (n=50) | Coefficient of Variation (CV) | Recommended SOP |
|---|---|---|---|---|
| Fixation Time | 6 hours (Under-fixed) | 85 | 45% | 18-24 hours |
| Fixation Time | 24 hours (Optimal) | 165 | 12% | 18-24 hours |
| Fixation Time | 72 hours (Over-fixed) | 72 | 38% | ≤ 36 hours |
| Ischemia Time | <10 minutes | 180 | 8% | Minimize, record time |
| Ischemia Time | 30 minutes | 155 | 25% | Minimize, record time |
| Ischemia Time | 60 minutes | 110 | 32% | Minimize, record time |
| Section Thickness | 3 µm | 140 | 18% | 4-5 µm |
| Section Thickness | 5 µm | 160 | 10% | 4-5 µm |
| Section Thickness | 8 µm | 175 | 22% | 4-5 µm |
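
For readers less familiar with the metric in this table, the H-score is conventionally computed as 1 × (% weakly stained cells) + 2 × (% moderately stained) + 3 × (% strongly stained), giving a range of 0 to 300. The sketch below assumes that conventional definition applies here; the source does not state which variant was used, and the function name is hypothetical.

```python
def h_score(pct_weak, pct_moderate, pct_strong):
    """Conventional H-score: weighted sum of percent cells per intensity bin (0-300)."""
    if min(pct_weak, pct_moderate, pct_strong) < 0:
        raise ValueError("percentages must be non-negative")
    if pct_weak + pct_moderate + pct_strong > 100:
        raise ValueError("stained percentages cannot exceed 100 in total")
    return 1 * pct_weak + 2 * pct_moderate + 3 * pct_strong


# Illustrative core: 20% weak, 30% moderate, 40% strong, 10% unstained.
score = h_score(20, 30, 40)
```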

Table 2: Multi-Site Reproducibility Metrics with Standardized SOPs

| Site | Antigen Retrieval CV (%) | Fixation Time Adherence (%) | Average H-Score (Target Antigen) | Inter-Site Concordance (R²) |

|---|---|---|---|---|

| Site A | 8 | 100 | 162 | 0.98 |

| Site B | 10 | 95 | 158 | 0.96 |

| Site C | 15 | 98 | 155 | 0.94 |

| Average (with SOPs) | 11 | 98 | 158 | 0.96 |

| Historical (no SOPs) | 35 | 65 | Varies Widely | 0.71 |

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function & Rationale |

|---|---|

| 10% Neutral Buffered Formalin (NBF) | Gold-standard fixative. Buffers pH to ~7.4 to prevent acid-induced artifacts and ensure consistent cross-linking. |

| 70% Ethanol | Storage and transport medium post-fixation. Halts over-fixation and preserves nucleic acids better than formalin for long-term storage. |

| High-Grade Paraffin Wax | Low-melt-point (56-58°C), polymer-added wax improves ribbon consistency and sectioning of difficult tissues. |

| Positively Charged Microscope Slides | Adhesive coating ensures tissue section adherence during rigorous IHC staining procedures, especially for antigen retrieval. |

| Tissue Sectioning Water Bath | Maintains precise temperature (±1°C) to optimally spread paraffin sections without melting or introducing wrinkles. |

| Cold Ischemia Tracking Tool | Timer/log to record time from devascularization to fixation. Critical for labile antigen and phospho-epitope preservation. |

Experimental Protocols

Protocol 1: Standardized Tissue Collection & Fixation for Multi-Center Studies

- Ischemia Control: Record cold ischemia time from surgical devascularization to immersion in fixative. Target <20 minutes.

- Dissection: Trim tissue to a maximum dimension of 3 mm x 3 mm x 10 mm or 4 mm thick for core biopsies.

- Fixation: Immediately immerse tissue in 15-20x volume of 10% NBF. Use pre-labeled, leak-proof containers.

- Duration: Fix for 24 hours (± 2 hours) at room temperature (20-25°C) on an orbital shaker set to low speed.

- Post-Fixation: Transfer tissue to fresh 70% ethanol. Store at 4°C until processing. Ship to central lab in 70% ethanol.

Protocol 2: Automated Tissue Processing for Consistent Paraffin Infiltration

- Equipment: Validated automated closed tissue processor.

- Reagents: Prepare fresh ethanol series, clearing agent, and high-grade paraffin wax.

- Loading: Place tissue cassettes in processor basket. Include a control tissue cassette.

- Program: Run the standardized dehydration, clearing, and infiltration program (as detailed in the answer to Q2 above).

- Embedding: Process tissues directly to embedding center. Pour molten paraffin into molds, orient tissue, and cool rapidly on a cold plate.

Workflow & Relationship Diagrams

Pre-Analytical Workflow for IHC Robustness

Root Causes & SOP Solutions for IHC Variability

This technical support center provides troubleshooting guidance and FAQs on IHC assay robustness in multi-site reproducibility research, for researchers, scientists, and drug development professionals.

Troubleshooting Guides & FAQs

Q1: After switching to a new lot of a primary antibody, we observe either significantly increased background or loss of specific signal. What steps should we take?

A: This is a common reagent standardization issue. First, perform a new checkerboard titration (see protocol below) with the new lot alongside the old lot on the same slide using a multi-tissue block containing known positive and negative tissues. If the issue persists, verify the compatibility of the antibody diluent pH and ionic strength, as these can affect antibody binding. Ensure the antigen retrieval method (e.g., HIER pH 6 or pH 9) is optimal for the new lot. Document all parameters and lot numbers.

Q2: Our DAB chromogen reaction yields inconsistent staining intensity (too weak/too strong) across different staining runs, despite using the same protocol.

A: Inconsistent DAB development is often linked to chromogen preparation or equipment. First, ensure the DAB substrate is freshly prepared or aliquoted from a single-use, freshly thawed vial. Check the liquid levels and flow paths of your automated stainer for any obstructions or air bubbles. Calibrate the dispenser volumes for the DAB and hydrogen peroxide reagents. Ambient temperature fluctuations can affect reaction kinetics; ensure the staining platform and reagent storage are at a consistent temperature (recommended 22-24°C). Run a calibrated multi-tier control slide with every batch.

Q3: During a multi-site study, we see high inter-site variability in H-Scores for the same analyte. What are the primary factors to investigate?

A: Focus on the harmonization triad:

- Reagent Validation: Confirm all sites use the same validated lot of primary antibody, detection system, and retrieval buffer. Centralized reagent distribution is ideal.

- Equipment Calibration: Verify that all automated stainers undergo regular preventive maintenance and that incubation timers and temperatures are synchronized. Use the same model stainer if possible.

- Protocol Locking: Ensure the protocol is "locked" with no deviations, including fixed fixation times (e.g., 24-48h in 10% NBF), retrieval time/temperature/pH, and wash buffer specifications (concentration, pH, wash volume/duration).
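
Protocol locking can be audited by fingerprinting each site's protocol parameters and comparing them with the locked reference. The sketch below is one possible approach, assuming protocols can be exported as plain dictionaries; the parameter names and values are hypothetical and do not correspond to any particular stainer's export format.

```python
import hashlib
import json


def protocol_fingerprint(protocol):
    """SHA-256 of a canonical JSON form, so key order does not matter."""
    canonical = json.dumps(protocol, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def deviating_sites(locked, site_protocols):
    """Return the sites whose protocol differs in any parameter from the locked one."""
    reference = protocol_fingerprint(locked)
    return sorted(
        site for site, protocol in site_protocols.items()
        if protocol_fingerprint(protocol) != reference
    )


# Hypothetical locked parameters and two site exports.
locked = {"retrieval_buffer": "citrate pH 6.0", "retrieval_min": 20, "dab_min": 5}
sites = {"A": dict(locked), "B": {**locked, "dab_min": 7}}
flagged = deviating_sites(locked, sites)
```

A hash comparison only reports that something differs. Note also that values must be typed consistently (20 and 20.0 serialize differently), so exports should be normalized before fingerprinting.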

Q4: What is the recommended method to validate and harmonize an IHC assay across multiple laboratories before initiating a large study?

A: Implement a phased validation ring study:

- Phase 1 (Protocol Transfer): All sites stain the same set of 10-20 challenging tissue samples (including low/medium/high expressors and negatives) using a centrally distributed reagent kit and detailed protocol. Results are compared quantitatively (e.g., digital image analysis).

- Phase 2 (Reagent Bridging): Sites test new reagent lots against the master validation lot using a standard cell line microarray or tissue microarray (TMA).

- Phase 3 (Ongoing QC): Implement a system where each staining run includes a centrally provided control TMA. The data from this TMA must fall within pre-established acceptance criteria (see Table 1) for the run to be valid.

Experimental Protocols

Protocol 1: Checkerboard Titration for Antibody Standardization

Purpose: To determine the optimal concentration of a primary antibody.

- Prepare a serial dilution of the primary antibody (e.g., 1:50, 1:100, 1:200, 1:400, 1:800) in the validated antibody diluent.

- Apply each dilution to consecutive sections of a multi-tissue control block (containing known positive and negative tissues) on the same slide.

- Perform the IHC staining protocol with all other conditions (retrieval, detection, DAB time) held constant.

- Analyze slides for specific signal intensity and background. The optimal dilution provides the highest specific signal with the lowest acceptable background. This becomes the locked concentration.
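
If signal and background are quantified (for example by digital image analysis), the selection rule in the last step can be written down explicitly. This sketch interprets the rule as: discard dilutions whose background exceeds a pre-set ceiling, then take the one with the highest specific signal. That interpretation, the function name, and the readings are assumptions for illustration.

```python
def optimal_dilution(readings, max_background):
    """Pick the dilution with the highest specific signal among those
    whose background is at or below max_background.

    readings: {dilution_label: (specific_signal, background)}
    Returns the dilution label, or None if no dilution is acceptable.
    """
    acceptable = {
        dilution: values
        for dilution, values in readings.items()
        if values[1] <= max_background
    }
    if not acceptable:
        return None
    return max(acceptable, key=lambda dilution: acceptable[dilution][0])


# Illustrative intensity readings on positive tissue (signal) and negative tissue (background).
readings = {
    "1:50": (220, 40),
    "1:100": (200, 18),
    "1:200": (170, 10),
    "1:400": (110, 6),
}
chosen = optimal_dilution(readings, max_background=20)
```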

Protocol 2: Daily Run Acceptability Assessment Using a Control Tissue Microarray (TMA)

Purpose: To ensure daily staining consistency.

- Include a control TMA slide with every staining batch. The TMA should contain core samples with known negative, low, medium, and high expression levels.

- After staining, perform digital image analysis (DIA) to generate quantitative scores (e.g., H-Score, % positivity) for each control core.

- Compare the scores to the established laboratory mean and acceptable range (derived from 20 previous runs). See Table 1 for example criteria.

- If all control core values fall within the acceptable range, the staining run is validated. If any fall outside, the run is investigated and repeated.
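
The acceptance logic in this protocol can be sketched as follows, assuming the acceptable range is the historical mean ± 3 SD for each control core (as in the column header of Table 1) and that scores from prior runs are available as plain lists. The function names and example scores are hypothetical.

```python
from statistics import mean, stdev


def acceptance_range(previous_scores, n_sd=3.0):
    """Mean +/- n_sd sample standard deviations of scores from prior runs."""
    m, sd = mean(previous_scores), stdev(previous_scores)
    return m - n_sd * sd, m + n_sd * sd


def run_is_valid(run_scores, history):
    """Check every control core in a run against its historical range.

    run_scores: {core_name: score for this run}
    history:    {core_name: [scores from previous runs]}
    Returns (is_valid, list_of_failing_cores).
    """
    failures = []
    for core, score in run_scores.items():
        low, high = acceptance_range(history[core])
        if not (low <= score <= high):
            failures.append(core)
    return not failures, failures


# Illustrative history of 20 runs per core.
history = {
    "low": [60, 65, 70, 62, 68] * 4,
    "high": [295, 300, 305, 298, 302] * 4,
}
valid, failing = run_is_valid({"low": 66, "high": 301}, history)
```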

Data Presentation

Table 1: Example Acceptance Criteria for Control TMA Cores in a Harmonized IHC Assay

| Control Core Type | Target Expression | Acceptable H-Score Range (Mean ± 3SD) | Acceptance Criteria for % Positive Cells |

|---|---|---|---|

| Negative | None | 0 - 10 | ≤ 5% |

| Low Expressor | Weak | 45 - 85 | 15% - 30% |

| Medium Expressor | Moderate | 160 - 220 | 60% - 80% |

| High Expressor | Strong | 270 - 330 | ≥ 85% |

Data is illustrative, based on a theoretical harmonization study for estrogen receptor (ER) IHC. Ranges must be empirically defined for each assay during validation.

Visualizations

Figure: Workflow for Achieving Multi-Site IHC Reproducibility

Figure: Standard IHC Staining Protocol Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Harmonized IHC |

|---|---|

| Validated Primary Antibody Lot | Centralized, large-volume master lot ensures identical binding specificity and affinity across all study sites. |

| Automated Stainer (Calibrated) | Ensures precise and reproducible dispensing of reagents, incubation times, and temperatures, removing operator variability. |

| Control Tissue Microarray (TMA) | Contains validated tissue cores for assay calibration and daily run acceptance; essential for longitudinal QC. |

| pH-Buffered Retrieval Solution | Standardized citrate (pH 6.0) or EDTA/TRIS (pH 9.0) buffer is critical for consistent antigen unmasking. |

| Pre-Diluted, Ready-to-Use Detection Kit | Eliminates variation in preparing complex enzyme (HRP)-polymer systems and chromogen mixtures. |

| Digital Image Analysis Software | Provides objective, quantitative scoring (H-Score, % positivity) to replace subjective pathologist grading. |

| Standardized Fixative (10% NBF) | Tissues from all sites must be fixed in neutral buffered formalin for a harmonized duration (e.g., 24-48h). |

Digital Pathology and Quantitative Image Analysis as Enablers of Reproducibility

Technical Support Center: Troubleshooting IHC & QIA for Multi-Site Reproducibility

FAQs & Troubleshooting Guides

Q1: During multi-site validation, we observe high inter-site variance in H-Score from the same sample. What are the primary technical sources?

A: This is often due to pre-analytical and analytical variability. Key factors include:

- Fixation Discrepancy: Inconsistent fixation time or buffer pH across sites alters epitope availability.

- Antibody Lot Variability: Different lots of the same primary antibody can have varying affinity.

- Staining Platform Drift: Differences in automated stainers' reagent dispensing times or temperatures.

- Image Acquisition Settings: Inconsistent exposure times, gain, or white balance across scanners.

Q2: Our quantitative image analysis (QIA) algorithm fails to segment cells accurately in slides from a new site, despite consistent staining. Why?

A: This typically stems from differences in color/intensity baselines due to scanner models or staining batches. Implement a per-site color normalization step as a pre-processing requirement before segmentation.

Q3: How can we objectively validate that our IHC assay is reproducible across multiple laboratories before initiating a large study?

A: Implement a Phantom Tissue Microarray (TMA) or a cell line microarray with known expression levels of the target. Distribute this control slide to all sites. The coefficient of variation (%CV) of key QIA metrics (e.g., positive cell percentage, mean optical density) across sites should be calculated. Aim for a %CV < 20% for major metrics.

Table 1: Acceptable Performance Metrics for Multi-Site IHC Reproducibility

| Metric | Target Threshold for Robust Assay | Calculation Method |

|---|---|---|

| Inter-site Stain Intensity (CV%) | ≤ 15% | CV% = (Std Dev of Mean Optical Density / Mean) x 100 |

| Inter-site Positive Cell % (CV%) | ≤ 20% | CV% across sites for a defined positivity threshold |

| Inter-scanner Correlation (R²) | ≥ 0.95 | Correlation of metrics from the same slide scanned on different scanners |

| Inter-operator Annotation Concordance (Dice Score) | ≥ 0.85 | Overlap of manually annotated regions of interest |
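
Two of the metrics in Table 1 are less commonly computed by hand than a CV: the Dice score for annotation overlap and R² for inter-scanner correlation. The sketch below assumes annotations are represented as sets of pixel or tile coordinates and that R² means the squared Pearson correlation; both representations and the function names are illustrative assumptions.

```python
def dice_score(region_a, region_b):
    """Dice overlap of two annotations given as sets of pixel or tile coordinates."""
    a, b = set(region_a), set(region_b)
    if not a and not b:
        return 1.0  # two empty annotations agree trivially
    return 2.0 * len(a & b) / (len(a) + len(b))


def r_squared(x, y):
    """Squared Pearson correlation between paired measurements."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return (sxy * sxy) / (sxx * syy)


# Illustrative annotations on a tile grid: 2 shared tiles out of 4 each.
operator_1 = {(0, 0), (0, 1), (1, 0), (1, 1)}
operator_2 = {(0, 1), (1, 1), (2, 1), (2, 2)}
overlap = dice_score(operator_1, operator_2)
```

Note that a high R² shows that two scanners rank slides consistently but does not rule out a systematic offset between them, so it is worth inspecting the paired values as well.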

Experimental Protocol: Multi-Site Reproducibility Validation for IHC-QIA

Title: Protocol for Inter-Laboratory IHC Assay Robustness Testing.

Objective: To quantify the inter-site reproducibility of an IHC assay coupled with QIA using a centrally prepared control TMA.

Materials: See "Research Reagent Solutions" below.

Methodology:

- Control TMA Construction (Central Lab):

- Assemble a TMA containing:

- Cell line pellets with high, medium, low, and negative target expression.

- Patient tissue cores (FFPE) covering the expected expression range.

- Section the TMA at 4µm. All sections must be from the same TMA block, cut within a 72-hour period.

- Slide Distribution & Staining (Distributed Sites):

- Distribute identical TMA slides from consecutive cuts to each participating site (n≥3 sites).

- Provide a detailed, locked-down protocol (SOP) covering deparaffinization, antigen retrieval, primary antibody incubation (exact clone, dilution, incubation time/temp), detection kit, and counterstain.

- Sites use their local, validated automated stainers and routine reagents (except the specified primary antibody lot).

- Digital Slide Acquisition:

- Each site scans slides using their clinical-grade whole slide scanner at 20x magnification (0.5 µm/pixel).

- SOP must specify scanning parameters: bit depth (e.g., 24-bit RGB), exposure mode (consistent across batches), and file format (e.g., .svs).

- Quantitative Image Analysis (Central Analysis):

- Color Normalization: Apply a pixel-based color normalization algorithm (e.g., Reinhard or Macenko method) to all digital slides against a designated reference slide.

- Algorithm Application: Apply a single, version-controlled QIA algorithm to all normalized images. The algorithm should output pre-defined metrics: Positive Cell Percentage, H-Score, Mean Optical Density in positive cells.

- Statistical Analysis: Calculate the inter-site %CV for each metric and core. Perform ANOVA to assess the statistical significance of inter-site variance.
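
The ANOVA step can be illustrated with a one-way layout in which each site contributes several measurements of the same control (for example, replicate cores). The sketch below computes only the F statistic and its degrees of freedom in pure Python; obtaining a p-value requires the F distribution from a statistics package. The function name and data are hypothetical, and the one-way design is an assumption, since the protocol does not specify the model.

```python
def one_way_anova_f(groups):
    """One-way ANOVA F statistic.

    groups: list of lists, one list of replicate measurements per site.
    Returns (F, df_between, df_within).
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    group_means = [sum(g) / len(g) for g in groups]

    ss_between = sum(
        len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means)
    )
    ss_within = sum(
        (x - m) ** 2 for g, m in zip(groups, group_means) for x in g
    )

    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Illustrative H-scores for triplicate cores of one control at three sites.
f_stat, df_b, df_w = one_way_anova_f([[10, 12, 14], [20, 22, 24], [30, 32, 34]])
```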

Diagram 1: Multi-Site Validation Workflow

Diagram 2: QIA Pipeline for Reproducibility

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Robust IHC-QIA Studies

| Item | Function & Rationale for Reproducibility |

|---|---|

| Certified Primary Antibody (Monoclonal Clone) | Ensures specificity to a single epitope. Critical for lot-to-lot consistency. Must be validated for IHC on FFPE. |

| Control Tissue/ Cell Line Microarray (TMA) | Provides internal controls on every slide for monitoring staining intensity and specificity across batches and sites. |

| Automated IHC Stainer with Log Tracking | Minimizes operator-dependent variability. Electronic logs of reagent dispense times and temperatures are essential for troubleshooting. |

| Whole Slide Scanner (Clinical Grade) | Generates high-fidelity digital slides for QIA. Calibration and maintenance logs are required. |

| Color Normalization Software (e.g., Vahadane, Macenko) | Standardizes color appearance of digital slides from different scanners/staining runs, a prerequisite for reproducible QIA. |

| Validated QIA Algorithm (Containerized) | The analysis code must be version-controlled and deployed in a container (e.g., Docker) to ensure identical execution in all analysis environments. |

| Digital Slide Repository (with Metadata) | Centralized, structured storage (e.g., based on DICOM standards) linking slide images to full experimental metadata (antibody lot, staining date, scanner model). |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: What are the most common causes of inter-site staining intensity variation in an IHC ring study?

A: The primary causes relate to pre-analytical and analytical variability. Key factors include:

- Fixation Discrepancies: Time to fixation, fixation duration, and fixative type (e.g., NBF vs. PAXgene) differ between sites.

- Antigen Retrieval Inconsistency: Buffer pH (e.g., pH 6 vs. pH 9), retrieval method (pressure cooker, water bath, steamer), and heating time.

- Primary Antibody Incubation: Variability in incubation time, temperature, and reagent application (manual vs. automated).

- Detection System: Lot-to-lot variation in detection kits (e.g., polymer-HRP systems) and chromogen development time.

- Slide Scoring: Subjective differences in pathologist interpretation.

Q2: How can we troubleshoot high background staining across all participating sites?

A: Follow this systematic approach:

- Check Detection System: Ensure the detection polymer is not over-concentrated. Include a secondary antibody-only control.

- Optimize Blocking: Increase blocking serum incubation time (e.g., 10% normal serum for 30 minutes) or consider a proprietary protein block.

- Titrate Primary Antibody: Re-test the primary antibody at a series of dilutions on a known positive control tissue.

- Review Washes: Ensure wash buffers (PBS/TBS) have the correct pH and that wash steps are sufficiently rigorous.

- Check Endogenous Enzymes: For HRP-based detection, confirm endogenous peroxidase blocking (e.g., with 3% H₂O₂) was performed correctly.

Q3: One site consistently reports weak or negative staining while others are positive. What is the first step?

A: Initiate a reagent and process trace-back:

- Reagent Audit: Confirm the site used the exact same lot numbers of primary antibody, detection kit, and retrieval buffer as other sites.

- Control Slide Exchange: Have the outlier site stain a set of pre-cut, centrally provided control tissue sections alongside their local ones. This isolates the variable to their staining process versus their tissue.

- Instrument Calibration: Verify the automated stainer's reagent dispensing volumes, temperature of heating steps, and wash pressures are within specifications.

Q4: How should discrepant scoring results between site pathologists be resolved?

A: Implement a consensus review process:

- Blinded Re-review: All discrepant slides (e.g., scores differing by more than one intensity grade or 20% in positivity) are anonymized and re-scored by all participating pathologists.

- Reference Images: Use a centrally developed, validated set of reference images (annotated with score) as a guide.

- Third-Party Arbitrator: If disagreement persists, a lead or external pathologist makes the final call. This process must be defined in the study protocol.
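
The trigger for blinded re-review can be applied consistently if it is encoded rather than judged case by case. This sketch assumes each pathologist reports an intensity grade (0 to 3) and a percent positivity per slide, and reads "20%" as 20 percentage points; the function names and example scores are hypothetical.

```python
def is_discrepant(scores, max_grade_gap=1, max_pct_gap=20.0):
    """True if pathologists differ by more than one intensity grade
    or by more than 20 percentage points in positivity.

    scores: list of (intensity_grade, percent_positive), one tuple per pathologist.
    """
    grades = [grade for grade, _ in scores]
    percents = [pct for _, pct in scores]
    return (
        max(grades) - min(grades) > max_grade_gap
        or max(percents) - min(percents) > max_pct_gap
    )


def slides_for_rereview(all_scores):
    """Return the IDs of slides that should go to blinded re-review."""
    return sorted(
        slide for slide, scores in all_scores.items() if is_discrepant(scores)
    )


# Illustrative scores from two or three pathologists per slide.
all_scores = {
    "S1": [(2, 60), (2, 70), (3, 65)],  # within both limits
    "S2": [(1, 30), (3, 35)],           # two intensity grades apart
    "S3": [(2, 40), (2, 65)],           # 25 percentage points apart
}
queue = slides_for_rereview(all_scores)
```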

Key Experimental Protocols

Protocol 1: Standardized Tissue Microarray (TMA) Construction for Ring Study

- Donor Block Selection: Select formalin-fixed, paraffin-embedded (FFPE) blocks with confirmed, homogeneous expression of the target antigen (positive controls) and blocks with confirmed absence of expression (negative controls).

- Core Extraction: Using a tissue microarrayer, extract 1.0 mm or 1.5 mm cores in duplicate/triplicate from defined regions of donor blocks.

- Recipient Block Assembly: Insert cores into a new, empty paraffin block in a pre-defined grid pattern with mapped coordinates.

- Sectioning: Cut 4–5 μm sections from the TMA block using a microtome with a fresh blade. Float sections in a 40°C water bath.

- Slide Mounting: Mount sections on positively charged or adhesive glass slides. Dry slides overnight at 37°C.

- Central Distribution: Bake all slides centrally at 60°C for 1 hour. Distribute identical sets of TMA slides to each participating site in a single batch.
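
The "pre-defined grid pattern with mapped coordinates" in the recipient block step can be recorded as a simple position map that travels with the slides. The sketch below is one hypothetical way to generate such a map. The donor IDs, the row-letter/column-number naming, and the `build_tma_map` helper are assumptions for illustration, and a real design might deliberately separate replicate cores rather than place them side by side.

```python
import string


def build_tma_map(donors, replicates=2, n_cols=6):
    """Assign every donor core to a grid position such as 'A1', filling
    the recipient block row by row. Returns {position: core info}."""
    cores = [(donor, rep) for donor in donors for rep in range(1, replicates + 1)]
    n_rows = -(-len(cores) // n_cols)  # ceiling division
    if n_rows > len(string.ascii_uppercase):
        raise ValueError("too many cores for single-letter row labels")

    layout = {}
    for index, (donor, rep) in enumerate(cores):
        row, col = divmod(index, n_cols)
        position = f"{string.ascii_uppercase[row]}{col + 1}"
        layout[position] = {"donor": donor, "replicate": rep}
    return layout


# Hypothetical donor blocks: two positive and one negative control.
tma_map = build_tma_map(["POS-1", "NEG-1", "POS-2"], replicates=2, n_cols=4)
for position, core in tma_map.items():
    print(position, core["donor"], f"replicate {core['replicate']}")
```

Distributing the same map to every site lets scores be matched core by core during the ring study analysis.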

Protocol 2: Harmonized IHC Staining Protocol (Manual Example)

- Deparaffinization & Rehydration: Xylene (2 x 5 min) → 100% Ethanol (2 x 3 min) → 95% Ethanol (2 x 3 min) → 70% Ethanol (1 x 3 min) → dH₂O rinse.

- Antigen Retrieval: Place slides in pre-heated retrieval buffer (e.g., citrate, pH 6.0) in a decloaking chamber or water bath. Heat at 95–100°C for 20 minutes. Cool at room temp for 30 minutes.

- Peroxidase Block: Incubate with 3% aqueous H₂O₂ for 10 minutes. Rinse with wash buffer.

- Protein Block: Apply 5% normal serum or protein block for 10 minutes at room temperature.

- Primary Antibody: Apply optimized dilution of primary antibody. Incubate for 60 minutes at room temperature (or as determined during assay optimization).

- Detection: Apply labeled polymer-HRP secondary antibody (e.g., from Dako EnVision+ or equivalent) for 30 minutes.

- Chromogen Development: Apply DAB substrate for exactly 5 minutes (timer required). Rinse with dH₂O.

- Counterstain & Mount: Counterstain with hematoxylin for 1 minute. Dehydrate, clear, and mount with a coverslip.
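
A harmonized protocol is easier to enforce when its timed steps are written down as data and each site's run log is checked against them. The sketch below encodes the nominal durations from Protocol 2 and flags steps that fall outside tolerance. The DAB window mirrors the "5 min ± 15 sec" guidance in the reagent table of this guide. The other tolerances, the step keys, and the `find_deviations` helper are assumptions made for illustration.

```python
# Nominal step durations in minutes, taken from Protocol 2.
PROTOCOL_MIN = {
    "antigen_retrieval": 20,
    "cooling": 30,
    "peroxidase_block": 10,
    "protein_block": 10,
    "primary_antibody": 60,
    "detection": 30,
    "dab": 5,
    "hematoxylin": 1,
}

# Allowed deviation in minutes. Only the DAB value (15 s) comes from the
# guide; the default and the hematoxylin value are assumed placeholders.
DEFAULT_TOLERANCE_MIN = 1.0
TOLERANCE_MIN = {"dab": 0.25, "hematoxylin": 0.25}


def find_deviations(run_log):
    """Return {step: (nominal, actual)} for steps that are missing from
    the run log or outside the allowed tolerance."""
    deviations = {}
    for step, nominal in PROTOCOL_MIN.items():
        actual = run_log.get(step)
        tolerance = TOLERANCE_MIN.get(step, DEFAULT_TOLERANCE_MIN)
        if actual is None or abs(actual - nominal) > tolerance:
            deviations[step] = (nominal, actual)
    return deviations


# Hypothetical run log from one site: DAB was developed for 6 minutes.
site_run = dict(PROTOCOL_MIN, dab=6)
print(find_deviations(site_run))
```

Collecting these deviation reports centrally gives the study coordinator a quick view of which sites drift from the protocol and at which step.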

Data Presentation

Table 1: Example Ring Study Results - Scoring Consistency Across Sites

| Site ID | Target | Positive Control (Average H-Score) | Negative Control (Average H-Score) | Test Sample 1 H-Score | Test Sample 2 H-Score | Inter-Site CV for Test Sample 1* |

|---|---|---|---|---|---|---|

| Site A | Protein X | 280 | 5 | 185 | 40 | 5.6% |

| Site B | Protein X | 270 | 10 | 175 | 45 | 5.6% |

| Site C | Protein X | 265 | 0 | 200 | 35 | 5.6% |

| Site D | Protein X | 290 | 5 | 190 | 50 | 5.6% |

| Mean (SD) | Protein X | 276.3 (11.1) | 5.0 (4.1) | 187.5 (10.4) | 42.5 (6.5) | 5.6% |

*CV: Coefficient of Variation. Calculated for Test Sample 1 across Sites A-D.
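
The inter-site CV is the standard deviation of the site scores divided by their mean, expressed as a percentage. As a minimal sketch, the calculation can be reproduced from the Test Sample 1 H-scores in Table 1. The use of the sample standard deviation (n − 1 denominator) is an assumption, since the table does not state which form was used.

```python
from statistics import mean, stdev

# Test Sample 1 H-scores from Table 1.
test_sample_1 = {"Site A": 185, "Site B": 175, "Site C": 200, "Site D": 190}


def inter_site_cv(values):
    """Coefficient of variation in percent: sample SD / mean * 100."""
    values = list(values)
    return stdev(values) / mean(values) * 100


scores = list(test_sample_1.values())
print(f"Mean = {mean(scores):.1f}")
print(f"SD   = {stdev(scores):.1f}")
print(f"CV   = {inter_site_cv(scores):.1f}%")
```

Running the same function on each control and test sample gives a per-sample CV that can be compared against the acceptance limit defined in the study protocol.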

Table 2: Common Troubleshooting Matrix

| Problem | Potential Cause | Immediate Action | Long-Term Corrective Action |

|---|---|---|---|

| Weak Staining | Under-fixation, Low antibody titer, Inadequate retrieval | Increase retrieval time, Re-titrate antibody | Standardize fixation SOP, Centralize antibody aliquoting |

| High Background | Over-concentrated detection, Inadequate blocking, DAB over-development | Increase block time, Dilute detection reagent | Define optimal DAB incubation time with timer, Use automated stainers |

| Staining Granularity | Drying of sections, Precipitated antibody | Ensure slides remain hydrated during staining | Centrifuge antibody reagents before use, Optimize humidity controls |

| Inter-Site Discrepancy | Different retrieval buffer pH, Lot variation | Ship centralized retrieval buffer to all sites | Pre-qualify and reserve large reagent lots for the study |

Visualizations

Figure: IHC Ring Study Experimental Workflow (diagram not reproduced here)

Figure: Logical Troubleshooting Path for Staining Issues (diagram not reproduced here)

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in IHC Ring Study | Critical Consideration |

|---|---|---|

| Validated Primary Antibody | Binds specifically to the target protein antigen. | Use the same clone, host, and lot number across all sites. Pre-qualify on relevant tissues. |

| Detection Kit (Polymer-based) | Amplifies signal and facilitates chromogen deposition. | Use the same kit brand, product number, and lot number across all sites to minimize variation. |

| Antigen Retrieval Buffer | Unmasks epitopes cross-linked by fixation. | Standardize pH (e.g., pH 6 Citrate or pH 9 EDTA/Tris) and provide centralized batch if possible. |

| Chromogen (e.g., DAB) | Produces a visible, insoluble precipitate at the antigen site. | Use same formulation and lot. Strictly time the development step (e.g., 5 min ± 15 sec). |

| Control Tissue Microarray (TMA) | Serves as positive, negative, and expression gradient controls on every slide. | Construct centrally from well-characterized FFPE blocks to ensure all sites test identical samples. |

| Automated Stainer | Performs staining protocol with minimal human intervention. | Calibrate instruments at each site. Use identical programming (times, temperatures, volumes). |

| Digital Slide Scanner | Creates whole slide images for remote, centralized scoring. | Standardize scanning parameters (magnification, resolution, focus) to ensure image comparability. |

Diagnosing and Solving Cross-Site IHC Discrepancies: A Troubleshooting Guide

Root Cause Analysis Framework for Staining Inconsistencies

Staining inconsistencies are a critical failure point for robust, reproducible multi-site IHC in drug development. This technical support center provides a structured root cause analysis framework, troubleshooting guides, and FAQs to help researchers identify and resolve these issues systematically.

Troubleshooting Guides & FAQs

Q1: Why is there high inter-slide or inter-batch variability in staining intensity for the same target and sample type? A: This often points to pre-analytical or reagent variability. Follow this investigative protocol:

- Protocol: Reagent Stability & Aliquot Consistency Test

- Methodology: From a master batch of FFPE tissue sections (e.g., human tonsil), create three identical staining sets.

- Set A: Use a freshly prepared, aliquoted primary antibody dilution from a newly reconstituted vial.

- Set B: Use a primary antibody aliquot that has undergone 5 freeze-thaw cycles.

- Set C: Use a primary antibody dilution that was prepared 72 hours prior and stored at 4°C.

- Process all sets in the same automated stainer run. Quantify staining intensity (e.g., H-Score, DAB Optical Density) in identical anatomical regions across 10 fields per slide.

- Data & Analysis:

- Quantitative data from such an experiment typically show a marked loss of signal and higher slide-to-slide variability in Sets B and C relative to Set A.

| Test Set | Mean DAB OD (Target Region) | Coefficient of Variation (CV) Across Slides | Inferred Root Cause |

|---|---|---|---|

| Set A (Fresh Aliquot) | 0.45 | 8% | Baseline performance. |

| Set B (5 Freeze-Thaws) | 0.28 | 22% | Antibody degradation from improper storage. |

| Set C (72h Old Dilution) | 0.31 | 18% | Loss of antibody activity in diluted state. |
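
To make the comparison explicit, the signal loss in each set can be expressed relative to the Set A baseline. The short sketch below does this with the mean DAB OD values from the table above. The `percent_signal_loss` helper is a hypothetical name introduced only for this illustration.

```python
# Mean DAB optical density per test set, from the table above.
mean_od = {
    "Set A (fresh aliquot)": 0.45,
    "Set B (5 freeze-thaws)": 0.28,
    "Set C (72 h old dilution)": 0.31,
}


def percent_signal_loss(od, baseline):
    """Signal loss relative to the baseline OD, in percent."""
    return (baseline - od) / baseline * 100


baseline = mean_od["Set A (fresh aliquot)"]
for name, od in mean_od.items():
    print(f"{name}: {percent_signal_loss(od, baseline):.1f}% loss vs. Set A")
```

Reporting loss as a percentage of baseline makes results comparable across antibodies whose absolute OD values differ.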

Q2: What could cause patchy or uneven staining across a single tissue section? A: This is frequently an artifact of the staining procedure itself. The primary suspect is inadequate or uneven reagent coverage during manual or automated steps.

- Protocol: Automated vs. Manual Dispense Validation

- Methodology: Select serial sections with a known heterogeneous antigen distribution. Stain one slide on an automated platform with a calibrated, properly primed liquid dispensing system. On a duplicate slide, apply the same primary antibody manually, without spreading it evenly across the section with a pipette tip. Compare the staining patterns: patchiness that appears only on the manual slide points to uneven reagent coverage rather than true antigen heterogeneity.

- Key Check: Inspect the automated stainer's liquid dispensing lines for bubbles and ensure the slide rack is level. For manual protocols, always use a hydrophobic barrier pen and cover the tissue with enough volume to form a convex meniscus.

Q3: How do I differentiate between true negative staining and a technical false negative? A: Implement a systematic set of controls within every run.

- Protocol: Comprehensive Control Slide Strategy

- Methodology: Include the following controls in each staining batch:

- Positive Control: A tissue known to express the target at a defined level.

- Negative Control: The same tissue type known to be null for the target (genetically confirmed if possible).

- Isotype Control: To assess non-specific binding of the primary antibody.

- Assay Positive Control (APC): A cell line pellet or tissue with known, stable expression of the target, processed identically to test samples. This controls for the entire assay process.

- Endogenous Enzyme Block Control: A section that receives the enzyme block and the chromogen but no primary antibody, to check for incomplete blocking of endogenous peroxidase or alkaline phosphatase.
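
The control set above can be turned into a simple run-acceptance check: a negative test result is only interpretable as a true negative when every control behaved as expected. The sketch below is a hypothetical illustration. The control keys, the reduction of each control to a stained/unstained flag, and the `evaluate_run` helper are simplifying assumptions.

```python
# Expected outcome per control: True = specific staining should be present,
# False = the section should be unstained.
EXPECTED_STAINED = {
    "positive_control": True,
    "negative_control": False,
    "isotype_control": False,
    "assay_positive_control": True,
    "enzyme_block_control": False,
}


def evaluate_run(observed):
    """Compare observed control results with expectations.

    Returns (run_is_valid, failed_controls). A control that is missing
    from `observed` counts as failed."""
    failed = [
        name
        for name, expected in EXPECTED_STAINED.items()
        if observed.get(name) is not expected
    ]
    return (not failed, failed)


# Hypothetical run in which the assay positive control did not stain,
# so a negative test sample cannot be reported as a true negative.
run = dict(EXPECTED_STAINED, assay_positive_control=False)
valid, failed = evaluate_run(run)
print(valid, failed)
```

A failed positive or assay positive control suggests a technical false negative, while staining in the negative, isotype, or enzyme block controls points to non-specific signal.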

Root Cause Analysis Framework: Decision Diagram

Figure: Staining Inconsistency Root Cause Decision Tree (diagram not reproduced here)

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function & Rationale for Robustness |

|---|---|

| Validated Primary Antibody with Lot-Specific Data Sheet | Core detection agent. Validation for IHC-specific applications (vs. WB) and consistent lot-to-lot performance data are critical for reproducibility. |

| Automated IHC Stainer & Certified Reagents | Minimizes operator-dependent variability. Use manufacturer-certified detection kits and buffers tailored for the platform. |

| Multitissue Microarray (TMA) Control Block | Contains defined positive, negative, and expression gradient tissues. Enables simultaneous monitoring of staining performance across dozens of samples on one slide. |

| Chromogen with Enhanced Stability (e.g., polymer-based DAB+) | Provides sharper signals and better resistance to solvent fading during coverslipping compared to traditional DAB. |

| Antigen Retrieval Buffer pH Standardization Kit | Precise pH (6.0 Citrate vs. 9.0 EDTA/Tris) is antigen-specific. Using a standardized, pH-verified buffer system prevents variable retrieval efficiency. |

| Protein Block (Species-Specific, Serum-Based) | Reduces non-specific background staining by blocking endogenous reactive sites on tissue, improving signal-to-noise ratio. |

| Barrier Pens (Hydrophobic) | Creates a defined, uniform incubation area for manual staining, preventing reagent spread and ensuring consistent volume-to-area ratio. |

| Digital Slide Scanner & Quantitative Image Analysis Software | Enables objective, high-throughput quantification of staining intensity and distribution, moving beyond subjective scoring. |

Troubleshooting Antigen Retrieval and Antibody Performance Across Platforms

This technical support center is designed to support researchers in achieving robust immunohistochemistry (IHC) assays, a critical requirement for multi-site reproducibility studies in drug development. Consistent antigen retrieval and antibody performance are foundational to reliable, comparable data across different laboratories and platforms.

Troubleshooting Guides & FAQs

Antigen Retrieval Issues

Q1: Why is my IHC staining weak or absent even with a validated antibody? A: This is frequently due to suboptimal antigen retrieval (AR). The fixation process cross-links and masks epitopes; AR reverses this. Troubleshoot using the following steps:

- Verify Fixation: Over-fixation (e.g., >72h in formalin) requires more aggressive AR. Under-fixation leads to poor morphology.

- Choose AR Method: Heat-Induced Epitope Retrieval (HIER) using a pressure cooker, microwave, or water bath is standard for most formalin-fixed paraffin-embedded (FFPE) samples. Proteolytic-Induced Epitope Retrieval (PIER) is suitable for some labile antigens.

- Optimize Buffer pH: Test a range (pH 6.0 Citrate vs. pH 8.0-9.0 Tris-EDTA). A shift in pH can dramatically alter staining intensity for specific epitopes.

- Control Time/Temperature: Standardize retrieval time and ensure the buffer reaches and maintains the intended temperature (e.g., 95-100°C for HIER).

Protocol: Standardized HIER Optimization Protocol

- Cut 4-5 serial sections from the FFPE block of interest.

- Deparaffinize and rehydrate sections.

- Perform HIER in a pressure cooker for 5 minutes at full pressure using different buffers: Citrate (pH 6.0), Tris-EDTA (pH 9.0), and a commercial high-pH buffer.

- Cool slides for 20-30 minutes at room temperature in the buffer.