From Data to Discovery: How AI and Machine Learning Are Revolutionizing Immunology Research


Abstract

This article explores the transformative impact of artificial intelligence and machine learning on modern immunology. Targeted at researchers, scientists, and drug development professionals, it provides a comprehensive guide spanning foundational concepts to advanced applications. We examine how AI deciphers immune system complexity, detail methodological breakthroughs in antigen and biomarker prediction, address critical challenges in data integration and model interpretability, and evaluate the comparative performance of leading AI tools. The synthesis offers a roadmap for leveraging computational power to accelerate therapeutic discovery and personalized medicine.

Decoding Complexity: Foundational AI Concepts for Immunological Discovery

Foundational Concepts and Data Types

Immunology research generates complex, high-dimensional data. Machine learning (ML) provides tools to find patterns within this data. Below is a table of core data types and corresponding ML approaches.

Table 1: Common Immunology Data Types and Associated ML Methods

| Data Type | Example in Immunology | Typical ML Task | Example ML Algorithm |
|---|---|---|---|
| Flow/Mass Cytometry | Single-cell protein expression | Dimensionality Reduction, Clustering | t-SNE, UMAP, PhenoGraph |
| Bulk RNA-seq | Gene expression from tissue | Supervised Classification | Random Forest, SVM, Neural Network |
| Single-Cell RNA-seq | Gene expression per cell | Trajectory Inference, Cell Type Annotation | PAGA, Monocle3, CellTypist |
| TCR/BCR Sequencing | Adaptive immune receptor repertoires | Sequence Motif Discovery, Anomaly Detection | GLIPH2, DeepRC, OLGA |
| Histopathology Images | H&E or multiplex IF stained tissue | Image Segmentation, Classification | U-Net, ResNet, Vision Transformer |
| Clinical & Biomarker Data | Patient outcomes, cytokine levels | Regression, Survival Analysis | Cox Proportional Hazards, XGBoost |

Protocol: A Standard Workflow for Supervised Classification of Disease State from Bulk Transcriptomics

This protocol outlines a standard pipeline for building a classifier to predict disease state (e.g., responder vs. non-responder) from bulk RNA-sequencing data.

Materials & Reagent Solutions

Table 2: Research Reagent Solutions for Computational Analysis

| Item/Category | Function/Purpose | Example Tools/Libraries |
|---|---|---|
| Computational Environment | Provides reproducible software and dependency management. | Docker, Singularity, Conda |
| Data Processing Suite | Converts raw sequencing reads into a gene expression matrix. | FastQC, STAR, HTSeq, Salmon |
| Statistical Programming Language | Language for data manipulation, analysis, and modeling. | Python (pandas, scikit-learn) or R (tidyverse) |
| Normalization Package | Corrects for technical variation (library size, composition). | DESeq2, edgeR, or scikit-learn’s StandardScaler |
| Feature Selection Module | Identifies informative genes, reduces dimensionality. | scikit-learn SelectKBest, VarianceThreshold |
| ML Library | Provides implementations of classification algorithms. | scikit-learn, XGBoost, PyTorch |
| Visualization Library | Creates plots for data exploration and result presentation. | matplotlib, seaborn, plotly |

Experimental Procedure

Data Acquisition & Preprocessing:

- Obtain raw FASTQ files and phenotypic metadata.

- Perform quality control (QC) using FastQC. Trim adapters if necessary.

- Align reads to a reference genome (e.g., using STAR) and quantify gene-level counts (e.g., using HTSeq-count). Alternatively, use a pseudoalignment tool like Salmon for faster quantification.

Normalization & Filtering:

- Load count matrix into analysis environment (Python/R).

- Filter out lowly expressed genes (e.g., genes with counts < 10 in >90% of samples).

- Normalize counts to correct for library size and composition. For bulk RNA-seq, use a method like DESeq2's median of ratios or edgeR's TMM normalization. Log2-transform the normalized counts.
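The filtering and normalization steps above can be illustrated with a minimal, pure-Python sketch of the median-of-ratios idea that underlies DESeq2's size factors. This is not the DESeq2 implementation; the toy count matrix, the function names, and the pseudocount of 1 are assumptions chosen for illustration.

```python
import math
from statistics import median

def filter_low_expression(counts, min_count=10, max_low_fraction=0.9):
    """Drop genes whose count is below min_count in more than max_low_fraction of samples."""
    kept = {}
    for gene, row in counts.items():
        n_low = sum(1 for c in row if c < min_count)
        if n_low / len(row) <= max_low_fraction:
            kept[gene] = row
    return kept

def size_factors(counts):
    """Median-of-ratios size factor per sample.

    Genes with a zero in any sample are skipped; at least one gene
    without zeros is required.
    """
    n_samples = len(next(iter(counts.values())))
    log_geo_means = {
        gene: sum(math.log(c) for c in row) / len(row)
        for gene, row in counts.items()
        if all(c > 0 for c in row)
    }
    factors = []
    for j in range(n_samples):
        log_ratios = [math.log(counts[g][j]) - m for g, m in log_geo_means.items()]
        factors.append(math.exp(median(log_ratios)))
    return factors

def normalize_log2(counts, factors, pseudocount=1.0):
    """Divide each sample by its size factor, then log2-transform."""
    return {
        gene: [math.log2(c / f + pseudocount) for c, f in zip(row, factors)]
        for gene, row in counts.items()
    }

# Hypothetical count matrix: gene -> raw counts across three samples
toy_counts = {
    "GENE_A": [100, 200, 400],
    "GENE_B": [50, 100, 200],
    "GENE_C": [10, 20, 40],
    "GENE_D": [0, 1, 0],
}
filtered = filter_low_expression(toy_counts)
factors = size_factors(filtered)
log_expr = normalize_log2(filtered, factors)
```

In this toy matrix the three samples differ only in sequencing depth, so the size factors absorb the depth difference and each retained gene ends up with the same normalized value in every sample.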

Train-Test Split & Feature Selection:

- Split the dataset into a training set (e.g., 70-80%) and a held-out test set (20-30%). Crucially, this split must be performed before feature selection to avoid data leakage.

- On the training set only, perform feature selection to identify the top n (e.g., 500) most informative genes. Methods include:

- Variance-based: Select genes with highest variance.

- Differential Expression: Select genes with highest statistical significance (e.g., lowest p-value from a t-test) between classes.

- Model-based: Use L1-regularized logistic regression (Lasso) to select non-zero coefficient genes.
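The ordering constraint described above (split first, then select features on the training samples only) can be sketched as follows. The example uses the variance-based option with a toy expression matrix; the helper names and the data are hypothetical, and a real analysis would normally stratify the split by class.

```python
import random
from statistics import pvariance

def train_test_split_indices(n_samples, test_fraction=0.25, seed=0):
    """Shuffle sample indices and hold out a fraction as the test set."""
    rng = random.Random(seed)
    idx = list(range(n_samples))
    rng.shuffle(idx)
    n_test = max(1, round(n_samples * test_fraction))
    return idx[n_test:], idx[:n_test]

def top_variance_genes(expr, train_idx, n_genes):
    """Rank genes by variance computed on the training samples only."""
    variances = {
        gene: pvariance([row[i] for i in train_idx])
        for gene, row in expr.items()
    }
    return sorted(variances, key=variances.get, reverse=True)[:n_genes]

def subset(expr, genes, sample_idx):
    """Return a samples x genes matrix as a list of rows."""
    return [[expr[g][i] for g in genes] for i in sample_idx]

# Hypothetical log-expression matrix: gene -> values across 8 samples
expr = {
    "GENE_A": [1.0, 5.0, 1.2, 5.1, 0.9, 4.8, 1.1, 5.2],
    "GENE_B": [3.0, 3.1, 2.9, 3.0, 3.1, 3.0, 2.9, 3.0],
    "GENE_C": [2.0, 4.0, 2.5, 3.5, 2.2, 3.9, 2.4, 3.6],
}
train_idx, test_idx = train_test_split_indices(8)
selected = top_variance_genes(expr, train_idx, n_genes=2)  # test samples never seen here
X_train = subset(expr, selected, train_idx)
X_test = subset(expr, selected, test_idx)  # same genes, applied unchanged
```

The test samples contribute nothing to the gene ranking; they are only projected onto the genes chosen from the training data.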

Model Training & Validation:

- Using the training set and the selected features, train multiple classifiers (e.g., Logistic Regression, Random Forest, Support Vector Machine).

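- Perform k-fold cross-validation (e.g., k=5 or 10) on the training set to tune hyperparameters (e.g., regularization strength, tree depth) and estimate model performance without touching the test set. For an unbiased cross-validation estimate, repeat the feature selection step inside each fold rather than reusing genes chosen on the full training set.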

- Select the best-performing model/hyperparameter set based on cross-validation metrics (e.g., AUC-ROC, accuracy).

Model Evaluation & Interpretation:

- Apply the finalized model to the held-out test set. Generate a comprehensive performance report: confusion matrix, ROC curve, precision-recall curve.

- Perform model interpretation:

- For linear models, examine coefficient magnitudes.

- For tree-based models (Random Forest, XGBoost), use built-in feature importance metrics (e.g., Gini importance) or compute SHAP values with a dedicated package such as shap.
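As a small worked example of the evaluation metrics named above, the sketch below computes a confusion matrix and the AUC-ROC from predicted scores in plain Python. The labels, scores, and the 0.5 decision threshold are hypothetical; the AUC is computed through its pairwise-ranking definition, not by tracing the ROC curve.

```python
def confusion_matrix(y_true, y_pred):
    """Counts of true/false positives and negatives for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    return {
        "tp": sum(1 for t, p in pairs if t == 1 and p == 1),
        "fp": sum(1 for t, p in pairs if t == 0 and p == 1),
        "tn": sum(1 for t, p in pairs if t == 0 and p == 0),
        "fn": sum(1 for t, p in pairs if t == 1 and p == 0),
    }

def roc_auc(y_true, scores):
    """Probability that a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical held-out test set: 1 = responder, 0 = non-responder
y_true = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.6, 0.3, 0.1]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

cm = confusion_matrix(y_true, y_pred)
auc = roc_auc(y_true, scores)
accuracy = (cm["tp"] + cm["tn"]) / len(y_true)
```

Here one responder is scored below one non-responder, so 8 of the 9 positive-negative pairs are ranked correctly and the AUC is 8/9.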

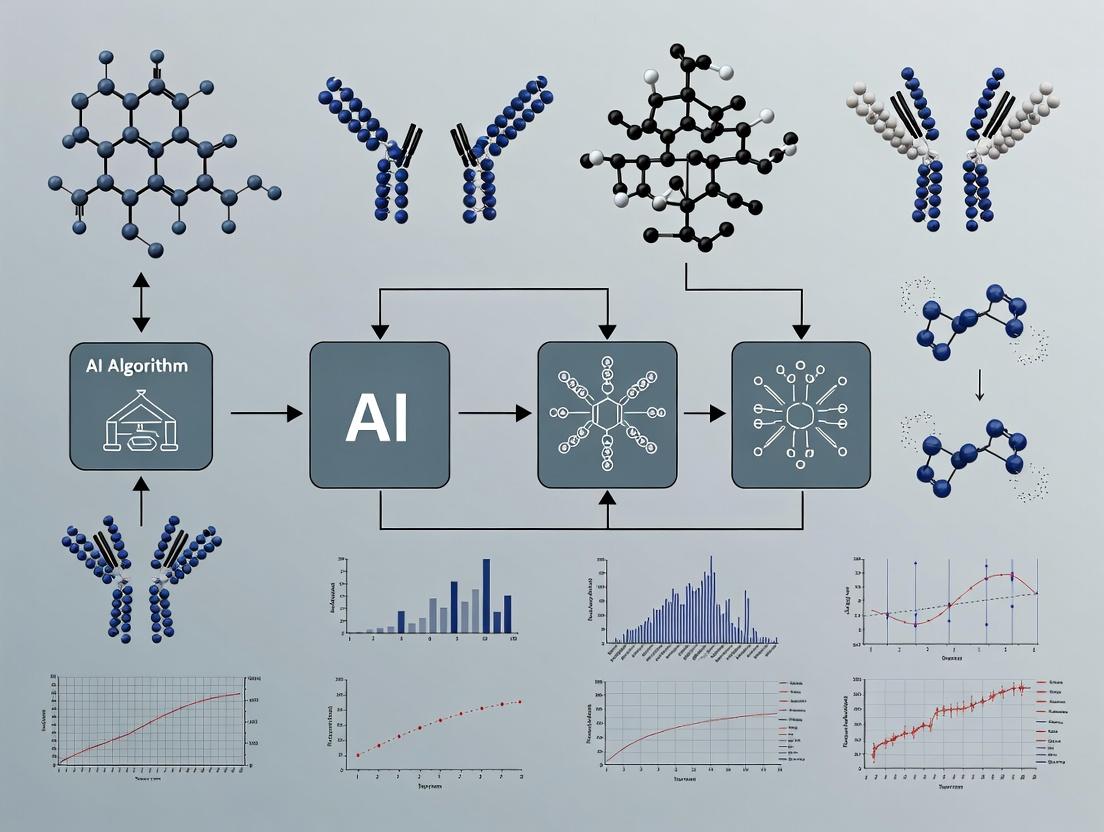

Diagram Title: Supervised ML Workflow for Bulk RNA-seq

Protocol: Unsupervised Clustering and Visualization of High-Dimensional Cytometry Data

This protocol details the use of dimensionality reduction and clustering to identify novel cell populations in flow or mass cytometry (CyTOF) data.

Materials & Reagent Solutions

Table 3: Research Reagent Solutions for CyTOF Data Analysis

| Item/Category | Function/Purpose | Example Tools/Libraries |
|---|---|---|
| Normalization & Debarcoding Software | Processes raw .fcs files from CyTOF, corrects for signal drift, and assigns cells to sample IDs. | Fluidigm CyTOF software, premessa (R) |
| Data Cleaning Library | Removes debris, dead cells, and doublets based on DNA and event length channels. | flowCore (R), CytofClean (Python) |
| Arcsinh Transformer | Applies an inverse hyperbolic sine (arcsinh) transform with a cofactor (e.g., 5) to stabilize variance and normalize marker expression. | scikit-learn FunctionTransformer |
| Dimensionality Reduction Engine | Reduces 30-50 protein markers to 2-3 dimensions for visualization. | UMAP, t-SNE (openTSNE implementation) |
| Clustering Algorithm | Identifies groups of phenotypically similar cells without prior labels. | PhenoGraph, FlowSOM, Leiden |
| Differential Abundance Test | Statistically compares cluster frequencies between sample groups. | diffcyt (R), scipy.stats (Python) |

Experimental Procedure

Data Preprocessing & Cleaning:

- Load .fcs files. Apply bead-based normalization if needed.

- Perform sample debarcoding for multiplexed runs.

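- Clean the data: gate on DNA intercalator-positive events to retain intact, nucleated cells; exclude dead cells using the viability channel (e.g., cisplatin); remove events with atypical event length; and gate on the Gaussian discrimination parameters to exclude doublets.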

Data Transformation:

- Select the channels for analysis (typically the lineage and functional markers, excluding DNA, event length, and viability channels).

- Apply an arcsinh transform to all selected channels:

X_transformed = arcsinh(X / cofactor). A cofactor of 5 is standard for CyTOF data.
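A minimal sketch of this transform, assuming the events are held as plain lists of marker intensities (the raw values below are made up):

```python
import math

def arcsinh_transform(events, cofactor=5.0):
    """Apply arcsinh(x / cofactor) to every marker value of every event."""
    return [[math.asinh(x / cofactor) for x in event] for event in events]

# Hypothetical raw intensities: rows are cells, columns are markers
raw_events = [
    [0.0, 5.0, 250.0],
    [1.5, 80.0, 1200.0],
]
transformed = arcsinh_transform(raw_events)
```

The transform is roughly linear near zero and logarithmic for large values, which is why it compresses the bright end of the scale without breaking down at zero counts.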

Dimensionality Reduction & Clustering:

- Perform principal component analysis (PCA) on the transformed data. Use the top n PCs (where n is chosen by elbow plot) for downstream steps.

- Apply a graph-based clustering algorithm (e.g., PhenoGraph) on the PCA-reduced data to assign each cell a cluster label. PhenoGraph uses k-nearest-neighbor graph construction and community detection.

- In parallel, run UMAP on the same PCA-reduced data to generate a 2D embedding for visualization. Do not use t-SNE/UMAP coordinates for clustering.

Visualization & Annotation:

- Create a UMAP scatter plot, coloring cells by their cluster ID.

- Generate heatmaps of median marker expression per cluster.

- Manually annotate clusters based on known marker combinations (e.g., CD3+CD4+ for T-helper cells).

Differential Analysis:

- Aggregate cell counts to the sample level to get cluster proportions per patient/condition.

- Use a statistical test (e.g., Mann-Whitney U test, linear mixed model) to identify clusters whose frequencies differ significantly between experimental groups (e.g., healthy vs. disease).
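The aggregation and comparison steps above can be sketched in plain Python. The per-sample cluster labels are hypothetical, and only the Mann-Whitney U statistic is computed here; in practice a library routine such as scipy.stats.mannwhitneyu would also supply the p-value.

```python
from collections import Counter

def cluster_proportions(cluster_labels):
    """Fraction of one sample's cells assigned to each cluster."""
    counts = Counter(cluster_labels)
    total = sum(counts.values())
    return {cluster: n / total for cluster, n in counts.items()}

def mann_whitney_u(x, y):
    """U statistic for group x versus group y (ties count as 0.5)."""
    u = 0.0
    for a in x:
        for b in y:
            if a > b:
                u += 1.0
            elif a == b:
                u += 0.5
    return u

# Hypothetical cluster labels for the cells of each sample (10 cells per sample)
healthy = [["c1"] * 8 + ["c2"] * 2, ["c1"] * 7 + ["c2"] * 3, ["c1"] * 9 + ["c2"] * 1]
disease = [["c1"] * 5 + ["c2"] * 5, ["c1"] * 4 + ["c2"] * 6, ["c1"] * 6 + ["c2"] * 4]

# One proportion per sample, so the sample (not the cell) is the unit of analysis
healthy_c2 = [cluster_proportions(s).get("c2", 0.0) for s in healthy]
disease_c2 = [cluster_proportions(s).get("c2", 0.0) for s in disease]
u_stat = mann_whitney_u(disease_c2, healthy_c2)
```

Testing on per-sample proportions rather than on individual cells avoids treating thousands of cells from the same donor as independent observations.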

Diagram Title: Unsupervised Analysis Pipeline for Cytometry Data

Application Note: High-Dimensional Immune Profiling for ML Model Training

Objective: To generate high-dimensional, single-cell resolution datasets capturing immune cell states, suitable for training machine learning models for cell type classification, state prediction, and perturbation response modeling.

Background: The adaptive immune system presents a data problem of immense scale (~10^12 lymphocytes) and dimensionality (cell state defined by transcriptome, proteome, receptor repertoire). Traditional low-parameter assays (e.g., 3-color flow cytometry) fail to capture this complexity. Modern high-parameter technologies like Mass Cytometry (CyTOF) and single-cell RNA sequencing (scRNA-seq) generate the rich, multi-dimensional data required to model immune system dynamics as a high-dimensional space where disease or treatment represents a shift in the distribution of cell states.

Key Quantitative Data Summary:

Table 1: Comparison of High-Dimensional Immune Profiling Platforms

| Platform | Measured Parameters (Dimensionality) | Typical Cell Throughput | Key Output for ML | Primary Computational Challenge |
|---|---|---|---|---|
| Spectral Flow Cytometry | 30-40 proteins (surface/intracellular) | 10^7 cells per run | High-dimensional vector per cell | Dimensionality reduction, automated gating |
| Mass Cytometry (CyTOF) | 50+ proteins (metal-tagged antibodies) | 10^6 cells per run | High-dimensional vector per cell | Normalization, batch correction |
| scRNA-seq (3' end) | 20,000+ genes (transcriptome) | 10^4 - 10^5 cells per run | Sparse gene expression matrix | Imputation, normalization, integration |
| CITE-seq / REAP-seq | 20,000+ genes + 100+ surface proteins | 10^4 - 10^5 cells per run | Multi-modal paired data | Multi-modal integration, cross-modal inference |
| TCR/BCR-seq + scRNA-seq | Paired receptor sequence + transcriptome | 10^3 - 10^4 cells per run | Clonotype-linked phenotype | Clonal tracking, lineage inference |

Protocols

Protocol 1: Generation of a Multi-Modal CITE-seq Dataset for ML-Based Immune Atlas Construction

Purpose: To simultaneously capture transcriptomic and proteomic data from a single-cell suspension, creating a paired, high-dimensional dataset ideal for training multi-modal deep learning models (e.g., for cross-modal imputation or integrated cell embedding).

Materials:

- Fresh PBMCs or tissue-derived single-cell suspension.

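- TotalSeq-B or -C Antibody Panel (BioLegend): A cocktail of 50-150 oligonucleotide-tagged antibodies against surface proteins. Choose the format that matches the library chemistry (TotalSeq-B for 10x 3' Feature Barcoding kits, TotalSeq-C for 10x 5' kits).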

- Chromium Next GEM Chip G (10x Genomics): Part of the 5' Gene Expression with Feature Barcoding kit.

- Dual Index Kit TT Set A (10x Genomics).

- SPRIselect Reagent Kit (Beckman Coulter): For post-library clean-up.

- Bioanalyzer High Sensitivity DNA Kit (Agilent) or TapeStation.

- Cell Ranger Feature Barcoding pipeline (10x Genomics).

Procedure:

- Cell Preparation & Antibody Staining: Count and assess viability. Incubate 1x10^6 cells with the TotalSeq-B antibody cocktail (titrated, 1:100 dilution in Cell Staining Buffer) for 30 minutes on ice. Wash cells 3x with cold buffer.

- 10x Genomics Library Preparation: Follow the manufacturer’s protocol for "5' Gene Expression with Feature Barcoding." Load the stained cells onto the Chromium Chip to generate single-cell Gel Bead-In-Emulsions (GEMs). The GEMs contain primers for cDNA synthesis from poly-adenylated mRNA and from the antibody-derived tags (ADTs).

- cDNA Amplification & Library Construction: Perform GEM incubation and cleanup. Amplify cDNA. Then, split the amplified product for the generation of two separate libraries:

- Gene Expression Library: Fragmentation, end-repair, A-tailing, and adapter ligation using sample index primers.

- Antibody-Derived Tag (ADT) Library: A separate PCR is performed using a primer set specific to the constant region of the TotalSeq-B antibodies.

- Library QC & Sequencing: Quantify libraries using Qubit. Assess size distribution (~180 bp for ADT, broad peak ~2000 bp for cDNA). Pool libraries at an optimized ratio (typically 10:1 cDNA:ADT reads) and sequence on an Illumina NovaSeq (28-10-10-90 read configuration for 5' kit).

- Data Processing: Run `cellranger multi` (Cell Ranger v7+) with the gene expression and feature barcode reference files. This generates a feature-barcode matrix containing two "modalities" (RNA and ADT counts) for each cell barcode.

ML Application: The resulting feature-barcode matrix (an HDF5 file) can be imported into Python (Scanpy, scvi-tools) and saved as an H5AD (AnnData) file. A multi-modal variational autoencoder (MMVAE) can be trained to learn a joint latent representation, enabling tasks like predicting protein expression from RNA data alone or denoising both data modalities.

Protocol 2: TCRβ Sequencing and Clonotype Tracking in a Longitudinal Study

Purpose: To generate quantitative data on T-cell clonal expansion and contraction over time or in response to therapy, providing dynamic, sequence-based features for time-series or graph-based ML models.

Materials:

- Serial PBMC samples (e.g., pre-treatment, on-treatment, relapse).

- SMARTer Human TCR a/b Profiling Kit (Takara Bio) or equivalent.

- Illumina TCR Solution (Illumina) for library prep.

- MiSeq or iSeq 100 System (Illumina) with appropriate v2/v3 kits.

- MiXCR or immunoSEQ analysis software.

Procedure:

- Nucleic Acid Extraction: Isolate total RNA or gDNA from each PBMC sample (~1x10^6 cells) using a column-based kit. Quantify.

- TCRβ CDR3 Amplification:

- For RNA: Use the SMARTer kit for 5' RACE-based amplification of rearranged TCRβ transcripts.

- For gDNA: Use multiplex PCR with V-region and J-region primers.

- Library Preparation for NGS: Add Illumina sequencing adapters and sample-specific dual indices via a secondary PCR (8 cycles). Clean up with SPRI beads.

- Pooling & Sequencing: Quantify libraries, normalize, and pool. Sequence on a MiSeq (2x300 bp) to a depth of at least 100,000 reads per sample for adequate clonotype coverage.

- Clonotype Calling: Process FASTQ files with MiXCR (`mixcr analyze shotgun`). The output is a tab-separated clonotype table listing each unique CDR3 nucleotide/amino acid sequence, its frequency, and V/D/J gene assignments per sample.

- Data Integration for ML: Create a clonal abundance matrix (samples x clonotypes). Use this to calculate:

- Clonal Shannon entropy.

- Top 10 clone frequency.

- Longitudinal tracking of specific clones.
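The three repertoire summaries listed above can be computed from per-sample clonotype counts as in the following sketch. The CDR3 sequences and read counts are invented for illustration, and entropy is reported in bits (base-2 logarithm), which is one common convention.

```python
import math

def clone_frequencies(clone_counts):
    """Convert clonotype read counts into frequencies that sum to 1."""
    total = sum(clone_counts.values())
    return {cdr3: n / total for cdr3, n in clone_counts.items()}

def shannon_entropy(freqs):
    """Shannon entropy of the clonotype distribution, in bits."""
    return -sum(p * math.log2(p) for p in freqs.values() if p > 0)

def top_n_frequency(freqs, n=10):
    """Cumulative frequency of the n most abundant clones."""
    return sum(sorted(freqs.values(), reverse=True)[:n])

def track_clone(samples, cdr3):
    """Frequency of one clonotype across ordered time points (0 if absent)."""
    return [clone_frequencies(s).get(cdr3, 0.0) for s in samples]

# Hypothetical clonotype tables for two time points
pre = {"CASSLGQAYEQYF": 50, "CASSPDRGNTEAFF": 25, "CASSIRSSYEQYF": 25}
post = {"CASSLGQAYEQYF": 90, "CASSPDRGNTEAFF": 10}

pre_freqs = clone_frequencies(pre)
pre_entropy = shannon_entropy(pre_freqs)
post_entropy = shannon_entropy(clone_frequencies(post))
pre_top2 = top_n_frequency(pre_freqs, n=2)
trajectory = track_clone([pre, post], "CASSLGQAYEQYF")
```

A drop in entropy between time points, as in this toy example, reflects a repertoire that has become dominated by fewer clones.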

ML Application: This matrix can be used as input for:

- Survival models: Using baseline clonality metrics as features.

- Clustering algorithms: To identify patients with similar dynamic clonal responses.

- Graph Neural Networks: Where nodes are clonotypes (with sequence features) and edges are co-occurrence across samples or shared specificity predictions.

Diagrams

CITE-seq Multi-Modal Data Generation Workflow

Core T-Cell Activation Signaling Network

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for High-Dimensional Immune Data Generation

| Item (Example Supplier) | Function in Experiment | Key Property for Data Quality |
|---|---|---|
| TotalSeq Antibodies (BioLegend) | Oligo-tagged antibodies for CITE-seq. | Allows simultaneous protein & RNA measurement in single cells. |
| Cell-ID Intercalator-Ir (Fluidigm) | DNA intercalator for CyTOF. | Distinguishes intact, nucleated cells from debris. |
| Chromium Next GEM Chip (10x Genomics) | Microfluidic device for single-cell partitioning. | Determines cell throughput and multiplet rate. |
| SMARTer TCR a/b Profiling Kit (Takara) | Amplifies full-length TCR transcripts. | Preserves paired V-J information for clonotype definition. |
| TruStain FcX (BioLegend) | Fc receptor blocking reagent. | Reduces non-specific antibody binding, lowers noise. |
| LIVE/DEAD Fixable Viability Dyes (Thermo Fisher) | Covalently labels dead cells. | Critical for excluding apoptotic cells from analysis. |
| BD Horizon Brilliant Polymer Dyes (BD Biosciences) | Flow cytometry dyes with minimal spillover. | Enables high-parameter panel design (30+ colors). |
| Cell Stimulation Cocktail (PMA/Ionomycin) (BioLegend) | Polyclonal T-cell activator. | Positive control for cytokine detection assays. |
| Human TruStain FcX (BioLegend) | Human Fc block. | Essential for human PBMC/mouse xenograft experiments. |
| Single-Cell Multiplexing Kit (Sample Tags) (BioLegend) | Labels cells from different samples with unique barcodes. | Enables sample multiplexing, reduces batch effects. |

Application Notes

The integration of multimodal immunology data provides a systems-level view of immune responses. These key data types, when combined with AI and machine learning, enable the deconvolution of cellular heterogeneity, lineage relationships, and antigen-specific immune responses critical for biomarker discovery and therapeutic development.

- Single-Cell RNA Sequencing (scRNA-seq): Enables unbiased transcriptomic profiling of individual cells, defining cell states, types, and potential functions. AI models (e.g., graph neural networks) cluster cells, identify rare populations, and infer gene regulatory networks.

- Cytometry by Time-of-Flight (CyTOF): Utilizes metal-tagged antibodies to measure >40 proteins simultaneously at single-cell resolution, providing deep immunophenotyping. Dimensionality reduction algorithms (e.g., t-SNE, UMAP) and automated cell-type classification are standard analytical steps.

- TCR/BCR Repertoire Sequencing: Profiles the complementarity-determining region 3 (CDR3) of T- and B-cell receptors, quantifying clonal diversity, expansion, and sequence similarity. Machine learning is applied to predict antigen specificity from sequence and to track clonal dynamics across conditions.

Table 1: Comparative Overview of Key Immunological Data Types

| Feature | scRNA-seq | CyTOF | TCR/BCR Rep-Seq |
|---|---|---|---|
| Primary Measured Molecule | mRNA (whole transcriptome or targeted) | Proteins (pre-defined panel) | gDNA or mRNA (rearranged TCR/BCR gene loci) |
| Throughput (cells/run) | 1,000 - 20,000 (plate-based); 10,000 - 1M+ (droplet-based) | 1,000 - 10 million+ | 1,000 - 10 million+ |
| Key Readouts | Cell type identification, differential gene expression, developmental trajectories | Cell surface & intracellular protein expression, phospho-signaling states | Clonal abundance, diversity metrics (Shannon entropy), sequence convergence |
| Primary AI/ML Applications | Cell type annotation, trajectory inference, gene imputation | Automated population identification, biomarker discovery | Clonotype clustering, specificity prediction, minimal residual disease detection |
| Lateral Integration Potential | High (CITE-seq, ATAC-seq) | High (CODEX, sequencing conjugates) | Essential for pairing with scRNA-seq (immune repertoire + transcriptome) |

Protocol 1: Integrated scRNA-seq with V(D)J Enrichment for Paired Transcriptome and Repertoire Analysis (10x Genomics Platform)

Objective: To simultaneously capture the gene expression profile and paired full-length TCR/BCR sequences from single lymphocytes.

Materials: Fresh or cryopreserved PBMCs/single-cell suspension, Chromium Next GEM Chip K, Single Cell 5’ Library & V(D)J Enrichment Kit, Dual Index Kit TT Set A, SPRIselect Reagent Kit.

Procedure:

- Cell Preparation: Assess viability (>90%) and concentration. Prepare a single-cell suspension at 700-1,200 cells/μL in PBS + 0.04% BSA.

- Gel Bead-in-Emulsion (GEM) Generation: Combine cells, Master Mix, and Gel Beads with Partitioning Oil on a Chromium Chip K. The controller generates GEMs where single cells are lysed, and mRNAs/barcoded V(D)J transcripts are reverse-transcribed with unique Cell Barcodes and Unique Molecular Identifiers (UMIs).

- Post GEM-RT Cleanup & cDNA Amplification: Break emulsions, purify cDNA with Dynabeads MyOne SILANE, and amplify via PCR.

- Library Construction: The amplified cDNA is split for two separate libraries:

- 5’ Gene Expression Library: Fragmentation, End-Repair, A-tailing, and adapter ligation are performed on a portion of cDNA, followed by sample index PCR.

- 5’ V(D)J Enriched Library: A second portion is enriched for TCR/BCR transcripts via targeted PCR, followed by fragmentation, adapter ligation, and sample index PCR.

- Library QC & Sequencing: Assess libraries on a Bioanalyzer (Agilent). Pool libraries and sequence on an Illumina platform (e.g., NovaSeq). Recommended sequencing depth: ~20,000 read pairs/cell for gene expression; ~5,000 read pairs/cell for V(D)J.

Protocol 2: High-Parameter CyTOF Panel Design and Staining

Objective: To stain and acquire data from a single-cell suspension using a >40-marker metal-conjugated antibody panel.

Materials: Single-cell suspension, MaxPar Metal-Labeled Antibodies, Cell-ID Intercalator-Ir (191/193Ir), Cell-ID 20-Plex Pd Barcoding Kit, Fix and Perm Buffer, MaxPar Water & Cell Acquisition Solution.

Procedure:

- Cell Barcoding (Optional): Resuspend cell pellets in unique combinations of 6 Pd barcoding channels. Pool samples, wash, and stain with a surface antibody cocktail for 30 mins at RT.

- Fixation and Permeabilization: Fix cells with 1.6% formaldehyde for 10 mins. Permeabilize cells with ice-cold methanol and store at -80°C or proceed.

- Intracellular Staining: Resuspend fixed cells in Perm Buffer. Stain with intracellular antibody cocktail (e.g., transcription factors, cytokines) for 30 mins at RT.

- DNA Labeling and Acquisition: Resuspend cells in 1:4000 Cell-ID Intercalator-Ir in Fix and Perm Buffer overnight at 4°C. Wash cells thoroughly with MaxPar Water and Cell Acquisition Solution. Filter cells through a 35-μm nylon mesh. Dilute to ~1M cells/mL in Cell Acquisition Solution spiked with 1:10 EQ Four Element Calibration Beads. Acquire on a Helios or CyTOF series instrument at ~300-500 events/second.

- Data Pre-processing: Use the CyTOF software for normalization using bead signals, debarcoding (if pooled), and file export (e.g., .fcs format).

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function & Relevance to AI/ML Analysis |
|---|---|
| Chromium Next GEM Chip K (10x Genomics) | Microfluidic device for partitioning single cells into Gel Bead-in-Emulsions (GEMs). The resulting cell barcode is the fundamental unit for all downstream single-cell AI analysis. |
| Cell-ID 20-Plex Pd Barcoding Kit (Fluidigm) | Enables sample multiplexing in CyTOF, reducing batch effects and acquisition time. Critical for generating robust, high-quality training data for ML classifiers. |
| Feature Barcoding Oligos (for CITE-seq/REAP-seq) | Antibody-derived tags (ADTs) allow simultaneous protein detection in scRNA-seq. Provides a ground-truth protein correlate to train multimodal data integration models. |
| SPRIselect Beads (Beckman Coulter) | For size-selective purification of cDNA and libraries. High-quality, adapter-free libraries reduce sequencing noise, improving the signal for feature extraction algorithms. |
| MaxPar Metal-Labeled Antibodies | Antibodies conjugated to rare-earth metals, free of spectral overlap. The clean, high-dimensional data is ideal for automated, high-resolution cell-type discovery via clustering algorithms. |
| Cell-ID Intercalator-Ir | Stains DNA uniformly, allowing event detection (cell identification) and viability gating. Provides the primary "cell" label for all subsequent single-cell statistical learning. |

Integrated scRNA-seq with V(D)J Workflow

CyTOF Staining and Acquisition Workflow

AI-Driven Immunology Research Cycle

This application note details the integration of core machine learning (ML) paradigms—supervised, unsupervised, and deep learning—into immunological research. Framed within a broader thesis on AI for immunology, this document provides actionable protocols, data summaries, and visualization tools to accelerate discovery in immunophenotyping, epitope prediction, and therapeutic design for researchers and drug development professionals.

Supervised Learning for Immune Cell Classification

Application Note

Supervised learning models are trained on labeled datasets to predict discrete (classification) or continuous (regression) outcomes. In immunology, this is pivotal for classifying cell types from flow/mass cytometry data, predicting antigen immunogenicity, or forecasting patient response to immunotherapy.

Recent Data Summary (2023-2024): Table 1: Performance of Supervised Models on Immune Cell Classification (Mass Cytometry Data)

| Model | Accuracy (%) | F1-Score | Dataset Size (Cells) | Reference |
|---|---|---|---|---|
| Random Forest | 94.2 | 0.93 | 500,000 | Shaul et al., 2023 |
| XGBoost | 96.7 | 0.96 | 450,000 | ImmunAI Benchmark |
| LightGBM | 97.1 | 0.97 | 450,000 | ImmunAI Benchmark |
| SVM (Linear) | 89.5 | 0.88 | 500,000 | Shaul et al., 2023 |

Experimental Protocol: Cell Population Classification with CyTOF Data

Objective: To train a supervised classifier to annotate major immune cell populations (e.g., CD4+ T cells, B cells, Monocytes) from high-dimensional mass cytometry (CyTOF) data.

Materials: See "Scientist's Toolkit" (Section 5).

Procedure:

- Data Preprocessing:

- Load FCS files from a public repository (e.g., FlowRepository FR-FCM-ZYBR).

- Apply arcsinh transformation with a cofactor of 5 for all marker channels.

- Perform bead-based normalization if using multiple batches.

- Use manual gating by an expert immunologist to generate ground truth labels for 10 major cell populations.

- Feature Engineering & Splitting:

- Use all transformed marker intensities (e.g., 30-40 features) as input.

- Split data at the donor level into 70% training, 15% validation, and 15% test sets to prevent data leakage.

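- Model Training (XGBoost Example): Fit a gradient-boosted tree classifier (XGBoost) on the training set, using the validation set for hyperparameter tuning and early stopping.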

- Evaluation:

- Predict on the held-out test set.

- Generate a confusion matrix and report per-class F1-score and overall accuracy.
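The donor-level 70/15/15 split in this procedure can be sketched as below. The donor identifiers and cell counts are hypothetical; the point of the example is that whole donors, not individual cells, are assigned to each partition.

```python
import random

def split_by_donor(donor_ids, fractions=(0.70, 0.15, 0.15), seed=0):
    """Assign whole donors to train/val/test so that no donor spans two sets.

    donor_ids holds one donor label per cell; the result maps each
    partition name to the list of cell indices it contains.
    """
    donors = sorted(set(donor_ids))
    random.Random(seed).shuffle(donors)
    n_train = round(len(donors) * fractions[0])
    n_val = round(len(donors) * fractions[1])
    groups = {
        "train": set(donors[:n_train]),
        "val": set(donors[n_train:n_train + n_val]),
        "test": set(donors[n_train + n_val:]),
    }
    return {
        name: [i for i, d in enumerate(donor_ids) if d in members]
        for name, members in groups.items()
    }

# Hypothetical dataset: 20 donors with 5 cells each
cell_donors = [f"donor_{k:02d}" for k in range(20) for _ in range(5)]
splits = split_by_donor(cell_donors)

train_donors = {cell_donors[i] for i in splits["train"]}
val_donors = {cell_donors[i] for i in splits["val"]}
test_donors = {cell_donors[i] for i in splits["test"]}
```

Splitting cells at random would place cells from the same donor in both training and test sets, which tends to inflate test accuracy because donor-specific staining and batch effects are shared across the two sets.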

Unsupervised Learning for Novel Phenotype Discovery

Application Note

Unsupervised learning identifies hidden patterns in unlabeled data. Techniques like clustering and dimensionality reduction are used to discover novel immune cell subsets, patient stratifications, or disease endotypes from omics data.

Recent Data Summary (2023-2024): Table 2: Unsupervised Analysis of Single-Cell RNA-Seq from Tumor-Infiltrating Lymphocytes

| Method | Primary Use | Key Finding (Study) | Cells Analyzed |
|---|---|---|---|
| UMAP + Leiden | Visualization & Clustering | Identified 3 novel exhausted CD8+ T cell states | 65,000 |
| SCANPY Pipeline | End-to-end scRNA-seq analysis | Revealed plasticity between Tr1 and Treg cells | 100,000 |
| PhenoGraph | Graph-based Clustering | Discovered a macrophage subset linked to immunotherapy resistance | 45,000 |

Experimental Protocol: Discovering Cellular States with scRNA-seq

Objective: To apply unsupervised clustering on single-cell RNA sequencing data from tumor microenvironments to identify novel immune cell states.

Procedure:

- Data Acquisition & QC:

- Obtain a count matrix (genes x cells) from a platform like 10x Genomics.

- Filter cells with < 200 genes or > 20% mitochondrial reads. Filter genes detected in < 3 cells.

- Normalization & Feature Selection:

- Normalize total counts per cell to 10,000 (CP10k). Log-transform.

- Identify 2000-3000 highly variable genes (HVGs).

- Dimensionality Reduction & Clustering:

- Scale data to zero mean and unit variance.

- Perform PCA (50 components).

- Construct a neighborhood graph (k=20 neighbors) on PCA space.

- Cluster cells using the Leiden algorithm (resolution=0.6).

- Generate a 2D visualization using UMAP based on the PCA embedding.

- Marker Identification & Annotation:

- For each cluster, perform differential expression analysis (Wilcoxon rank-sum test) against all other cells.

- Identify top 5 marker genes per cluster.

- Annotate clusters using known marker genes (e.g., CD3E for T cells, CD19 for B cells); clusters defined by novel markers may represent new cell states.
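The cell-level QC thresholds and the CP10k normalization used in this procedure can be illustrated with a small pure-Python sketch. The gene names, counts, and the relaxed `min_genes=3` used for the toy cells are assumptions for illustration; a real analysis would typically rely on a dedicated package such as Scanpy.

```python
import math

def passes_qc(cell, mito_genes, min_genes=200, max_mito_fraction=0.20):
    """cell maps gene -> raw count for one cell."""
    total = sum(cell.values())
    n_detected = sum(1 for c in cell.values() if c > 0)
    mito = sum(c for g, c in cell.items() if g in mito_genes)
    return (
        n_detected >= min_genes
        and total > 0
        and mito / total <= max_mito_fraction
    )

def normalize_log1p(cell, target_sum=10_000):
    """Scale counts so they sum to target_sum (CP10k), then apply log(1 + x)."""
    total = sum(cell.values())
    return {g: math.log1p(c / total * target_sum) for g, c in cell.items()}

# Hypothetical cells with a handful of genes each
mito_genes = {"MT-CO1"}
cell_ok = {"CD3E": 6, "CD19": 0, "MT-CO1": 1, "ACTB": 13}
cell_high_mito = {"CD3E": 1, "ACTB": 1, "CD19": 1, "MT-CO1": 7}

keep_ok = passes_qc(cell_ok, mito_genes, min_genes=3)
keep_high_mito = passes_qc(cell_high_mito, mito_genes, min_genes=3)

normalized = normalize_log1p(cell_ok)
# Undo the log to confirm the scaled counts sum to the target
recovered_total = sum(math.expm1(v) for v in normalized.values())
```

The gene-level filter (genes detected in fewer than 3 cells) is omitted here for brevity; it follows the same pattern applied across cells instead of within a cell.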

Deep Learning for Antigen-Antibody Interaction Prediction

Application Note

Deep learning (DL), particularly deep neural networks (DNNs) and convolutional neural networks (CNNs), models complex, non-linear relationships. In immunology, DL is used to predict peptide-MHC binding, model antibody affinity maturation, and support the design of bispecific antibodies.

Recent Data Summary (2023-2024): Table 3: Deep Learning Models for pMHC-II Binding Prediction

| Model | Architecture | AUC-ROC | Data Source (Peptides) |
|---|---|---|---|
| NetMHCIIpan-4.2 | CNN + Ensemble | 0.920 | IEDB (>200,000) |
| MixMHCpred2.2 | Motif Deconvolution + NN | 0.905 | In-house MS data |
| DeepLigand | Multi-layer Perceptron | 0.890 | IEDB & Benchmark |

Experimental Protocol: Predicting TCR-Peptide Binding with a CNN

Objective: To train a convolutional neural network to predict whether a given T-cell receptor (TCR) beta chain CDR3 sequence binds to a specific peptide-MHC complex.

Procedure:

- Data Preparation:

- Obtain paired TCR-peptide data from databases like VDJdb or McPAS-TCR.

- Include negative samples (non-binders) from validated negative sets or by careful shuffling.

- Encode amino acid sequences using one-hot encoding (20 letters) or biochemical property vectors.

- Pad or truncate CDR3 sequences to a fixed length (e.g., 20 aa).

- Model Architecture (Simplified CNN):

- Input Layer: Sequence matrix (20 positions x 20 amino acids for one-hot encoding).

- Conv Layers: Two 1D convolutional layers (filters=64, kernel=3, ReLU activation).

- Pooling: Global max pooling.

- Dense Layers: Two fully connected layers (128 units, ReLU) with 50% Dropout.

- Output Layer: Single unit with sigmoid activation for binary classification.

- Training:

- Use binary cross-entropy loss and Adam optimizer (lr=0.001).

- Train with batch size=64, validating on a 20% hold-out set.

- Implement early stopping based on validation AUC.

- Validation:

- Evaluate on an independent test set from a different study.

- Report precision, recall, AUC-ROC, and AUC-PR.
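Two parts of this procedure lend themselves to a short worked example: the one-hot encoding of padded CDR3 sequences and the size of the simplified CNN. The sketch below is framework-free; the amino acid ordering, the padding character, the example CDR3 sequence, and the assumption that the 20 one-hot columns act as the input channels of the first convolution are all illustrative choices, not details given in the protocol.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}

def pad_or_truncate(seq, length=20, pad_char="-"):
    """Right-pad short sequences and cut long ones to a fixed length."""
    return seq[:length].ljust(length, pad_char)

def one_hot_encode(seq, length=20):
    """Return a length x 20 matrix; padding positions stay all-zero."""
    matrix = []
    for aa in pad_or_truncate(seq, length):
        row = [0] * len(AMINO_ACIDS)
        if aa in AA_INDEX:
            row[AA_INDEX[aa]] = 1
        matrix.append(row)
    return matrix

def conv1d_params(in_channels, filters, kernel_size):
    """Weights plus one bias per filter."""
    return in_channels * kernel_size * filters + filters

def dense_params(in_features, units):
    """Weights plus one bias per unit."""
    return in_features * units + units

encoded = one_hot_encode("CASSLGQAYEQYF")  # hypothetical CDR3 (13 residues)

# Trainable parameters per layer; pooling and dropout add none
layer_params = {
    "conv1": conv1d_params(20, 64, 3),
    "conv2": conv1d_params(64, 64, 3),
    "dense1": dense_params(64, 128),   # 64 features after global max pooling
    "dense2": dense_params(128, 128),
    "output": dense_params(128, 1),
}
total_params = sum(layer_params.values())
```

Under these assumptions the network has about 41,000 trainable parameters, which is small compared with the size of public TCR-peptide datasets, though the 50% dropout and early stopping still matter given how redundant such datasets tend to be.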

Visualizations

Diagram: ML Workflow in Immunology Research

Title: Core ML Workflow for Immunology Data Analysis

Diagram: Neural Network for pMHC Binding Prediction

Title: CNN Architecture for Peptide-MHC Binding Prediction

The Scientist's Toolkit

Table 4: Essential Research Reagent Solutions for Featured Experiments

| Item | Function/Application | Example Vendor/Product |
|---|---|---|
| Mass Cytometry Antibody Panel | Simultaneous detection of 30+ surface/intracellular markers for deep immunophenotyping. | Fluidigm MaxPar Direct Immune Profiling Assay |
| Single-Cell RNA-seq Kit | Generation of barcoded libraries from individual cells for transcriptomic analysis. | 10x Genomics Chromium Next GEM Single Cell 5' Kit v3 |
| pMHC Tetramers | Fluorescently labeled multimeric complexes for identifying antigen-specific T cells via flow cytometry. | MBL International Tetramer Factory |
| Recombinant Cytokines & Antibodies | For functional validation assays (e.g., T cell activation, suppression, proliferation). | BioLegend, PeproTech |
| AI/ML Software Platform | Integrated environment for implementing protocols in Sections 1-3. | Python (Scanpy, scikit-learn, TensorFlow/PyTorch) |
| High-Performance Computing (HPC) or Cloud Credits | Essential for training deep learning models on large immunological datasets. | AWS, Google Cloud, Azure |

Application Notes

This application note details the integration of unsupervised machine learning (ML) with high-dimensional single-cell technologies to deconvolve immune heterogeneity. Within the broader thesis of advancing AI for immunology, this approach moves beyond manual gating, enabling data-driven, hypothesis-free discovery of previously obscured cell states. The protocols herein are critical for researchers and drug development professionals aiming to identify novel cellular targets, understand disease mechanisms, and develop predictive biomarkers.

Core Workflow & Data Interpretation:

- High-Dimensional Data Generation: Mass cytometry (CyTOF) or single-cell RNA sequencing (scRNA-seq) generates data matrices with 30-50 protein markers or 20,000+ genes per cell.

- Preprocessing & Dimensionality Reduction: Data is normalized, transformed, and scaled. Principal Component Analysis (PCA) reduces noise, retaining the top components (typically 10-30) that capture the majority of variance.

- Unsupervised Clustering: Algorithms partition cells into distinct groups. Key metrics for evaluation include:

- Silhouette Score: Measures how similar a cell is to its own cluster versus others (range: -1 to 1).

- Calinski-Harabasz Index: Ratio of between-cluster dispersion to within-cluster dispersion.

- Cluster Annotation & Validation: Differentially expressed genes/proteins (DEGs) for each cluster are calculated. Putative identities are assigned via reference databases (e.g., ImmGen). Functional validation requires in vitro or ex vivo assays (see Protocols).
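
To make the two clustering metrics concrete, the sketch below computes both from their definitions on a toy dataset. It is a plain-Python illustration using Euclidean distance, not the implementation used by any particular package; the four 2-D points and their labels are invented for the example.

```python
import math


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def mean_silhouette(points, labels):
    """Mean of (b - a) / max(a, b) over all points; requires at least two clusters."""
    clusters = {}
    for idx, lab in enumerate(labels):
        clusters.setdefault(lab, []).append(idx)
    scores = []
    for i, lab in enumerate(labels):
        own = [j for j in clusters[lab] if j != i]
        if not own:  # singleton clusters score 0 by convention
            scores.append(0.0)
            continue
        # a: mean distance to the point's own cluster
        a = sum(euclidean(points[i], points[j]) for j in own) / len(own)
        # b: mean distance to the nearest other cluster
        b = min(
            sum(euclidean(points[i], points[j]) for j in members) / len(members)
            for other, members in clusters.items() if other != lab
        )
        denom = max(a, b)
        scores.append((b - a) / denom if denom > 0 else 0.0)
    return sum(scores) / len(scores)


def calinski_harabasz(points, labels):
    """Between-cluster dispersion / (k - 1) divided by within-cluster dispersion / (n - k)."""
    n, dim = len(points), len(points[0])
    overall = [sum(p[d] for p in points) / n for d in range(dim)]
    clusters = {}
    for p, lab in zip(points, labels):
        clusters.setdefault(lab, []).append(p)
    k = len(clusters)
    between = within = 0.0
    for members in clusters.values():
        centroid = [sum(p[d] for p in members) / len(members) for d in range(dim)]
        between += len(members) * euclidean(centroid, overall) ** 2
        within += sum(euclidean(p, centroid) ** 2 for p in members)
    return (between / (k - 1)) / (within / (n - k))


# Toy data: two tight, well-separated groups
points = [(0, 0), (0, 1), (10, 0), (10, 1)]
labels = [0, 0, 1, 1]
sil = mean_silhouette(points, labels)
ch = calinski_harabasz(points, labels)
```

On real single-cell data these metrics are normally computed in PCA space, and both scale poorly in pure Python; the sketch is meant only to show what the numbers measure.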

Quantitative Data Summary from a Representative Analysis:

Table 1: Clustering Algorithm Performance on a Healthy Donor PBMC scRNA-seq Dataset (n=10,000 cells)

| Clustering Algorithm | Number of Clusters Identified | Mean Silhouette Score | Calinski-Harabasz Index |

|---|---|---|---|

| Louvain (Graph-based) | 12 | 0.42 | 1250 |

| Leiden (Graph-based) | 11 | 0.45 | 1310 |

| k-Means (Partitional) | 10 (pre-set) | 0.38 | 1150 |

| DBSCAN (Density-based) | 9 | 0.51 | 1050 |

Table 2: Characterization of a Novel Candidate Cluster (Cluster 7)

| Metric | Value | Interpretation |

|---|---|---|

| % of Total Cells | 1.8% | Rare immune subset |

| Top 5 DEGs (vs. All CD8+ T Cells) | TCF7, IL7R, GZMK, CXCR3, ZNF683 | Memory-like, tissue-resident phenotype |

| Key Protein Markers (CyTOF) | CD8+, CD45RO+, CD62L-, CD103+, PD-1+ | Effector memory/ Tissue-resident phenotype |

| Enriched Pathways (GO Analysis) | T cell activation, Apoptotic process, Response to interferon-gamma | Activated, pro-inflammatory state |

Experimental Protocols

Protocol 1: Single-Cell RNA Sequencing Data Processing & Clustering

Objective: To generate and analyze scRNA-seq data for unsupervised cell type discovery.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Cell Preparation & Sequencing: Isolate PBMCs using Ficoll density gradient. Prepare single-cell suspensions with >90% viability. Process through 10x Genomics Chromium Controller using the 3' v3.1 gene expression kit. Sequence on an Illumina NovaSeq to a target depth of 50,000 reads per cell.

- Raw Data Processing: Use Cell Ranger (10x Genomics) to demultiplex, align reads to the GRCh38 reference genome, and generate a feature-barcode matrix.

- Quality Control & Filtering (in R/Python):

- Load data using Seurat (R) or Scanpy (Python).

- Filter out cells with <200 or >6000 detected genes, or with >15% mitochondrial reads.

- Filter out genes detected in <3 cells.

- Normalization & Scaling: Normalize total expression per cell to 10,000 reads (LogNormalize in Seurat). Scale data, regressing out variation from mitochondrial percentage.

- Dimensionality Reduction & Clustering:

- Identify 2000 highly variable genes.

- Perform PCA. Select the top 15 principal components (PCs) based on the elbow plot.

- Construct a K-nearest neighbor (KNN) graph (k=20) in PC space.

- Apply the Leiden algorithm (resolution parameter=0.8) to partition the graph into clusters.

- Visualize using UMAP (Uniform Manifold Approximation and Projection) on the same PCs.

- Differential Expression & Annotation: Use the Wilcoxon rank-sum test to find DEGs for each cluster. Annotate clusters by cross-referencing DEGs with the SingleR package (using the Human Primary Cell Atlas reference).
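
The cell-level QC thresholds in this protocol amount to a simple predicate applied to each barcode. The sketch below shows that logic in plain Python, independent of Seurat or Scanpy; the barcodes and metric values are hypothetical, and `passes_qc` is an illustrative helper rather than a function from either package.

```python
def passes_qc(n_genes, pct_mito, min_genes=200, max_genes=6000, max_pct_mito=15.0):
    """True if a cell satisfies all three thresholds from the protocol."""
    return min_genes <= n_genes <= max_genes and pct_mito <= max_pct_mito


# Hypothetical per-cell metrics: barcode -> (detected genes, % mitochondrial reads)
cells = {
    "AAAC-1": (1500, 4.2),   # typical healthy cell
    "AAAG-1": (150, 3.0),    # too few genes: likely empty droplet or debris
    "AACT-1": (7200, 5.1),   # too many genes: possible doublet
    "AAGG-1": (2100, 22.5),  # high mitochondrial fraction: likely stressed or dying cell
}

kept = [barcode for barcode, (n_genes, pct_mito) in cells.items()
        if passes_qc(n_genes, pct_mito)]
```

A cell is removed if it fails any single criterion, which is why the thresholds are combined with a logical AND in the keep condition.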

Protocol 2: Functional Validation of a Novel Cluster by Cytokine Secretion Assay

Objective: To functionally validate the unique phenotype of a novel cluster identified in silico.

Materials: FACS sorter, cell culture plates, PMA/Ionomycin, Brefeldin A, intracellular cytokine staining kit, flow cytometer.

Procedure:

- Cell Sorting Based on Cluster Signature: From a fresh PBMC sample, stain cells with antibodies corresponding to the top protein markers of the novel cluster (e.g., for Cluster 7 from Table 2: CD8, CD45RO, CD103, PD-1). Include a dump channel (CD4, CD14, CD19, CD56) for exclusion. Use FACS to sort the putative novel population (CD8+ CD45RO+ CD103+ PD-1+) and a conventional memory CD8+ T cell control (CD8+ CD45RO+ CD103- PD-1-).

- Stimulation & Culture: Seed 10,000 sorted cells per well in a 96-well plate. Stimulate with PMA (50 ng/mL) and Ionomycin (1 µg/mL) in the presence of Brefeldin A (10 µg/mL) for 5 hours at 37°C, 5% CO₂.

- Intracellular Staining: After stimulation, fix and permeabilize cells using a commercial kit. Stain intracellularly for IFN-γ, TNF-α, and IL-2.

- Flow Cytometry Analysis: Acquire data on a flow cytometer. Compare the cytokine production profile (frequency and polyfunctionality) of the novel cluster to the conventional control. A statistically significant difference (p<0.05, unpaired t-test) supports a functionally distinct state.

Visualizations

AI-Driven Immune Discovery Workflow

Signaling in Novel CD8+ T Cell Subset

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AI-Driven Immune Cell Discovery

| Item | Function & Application |

|---|---|

| 10x Genomics Chromium Single Cell 3' Kit | Integrated solution for barcoding, reverse transcription, and library preparation of thousands of single cells for scRNA-seq. |

| Maxpar Antibody Labeling Kits (Fluidigm) | Enables conjugation of pure metal isotopes to antibodies for high-parameter (40+) CyTOF panels with minimal signal overlap. |

| Human Leukocyte Differentiation Antigen (HLDA) Panel | Validated antibody clones targeting CD markers, essential for designing phenotyping panels for both flow cytometry and CyTOF. |

| Ficoll-Paque PLUS (Cytiva) | Density gradient medium for the isolation of high-viability PBMCs from human blood samples. |

| Recombinant Human IL-2 (PeproTech) | Critical cytokine for the in vitro expansion and maintenance of functionally viable T cell subsets post-sorting. |

| Cell Stimulation Cocktail (PMA/Ionomycin) + Protein Transport Inhibitors (eBioscience) | Standardized kit for the activation of T cells and inhibition of cytokine secretion, enabling intracellular cytokine staining assays. |

| Seurat R Toolkit / Scanpy Python Package | Open-source software environments providing comprehensive pipelines for single-cell data QC, analysis, and visualization. |

| ImmGen & Human Cell Atlas References | Publicly available, curated databases of gene expression profiles from purified immune cells, crucial for automated cluster annotation. |

AI in Action: Methodological Breakthroughs and Cutting-Edge Applications

Within the broader thesis on artificial intelligence (AI) and machine learning (ML) for immunology research, the development of predictive models for antigen recognition and epitope prediction represents a transformative frontier. This Application Note details the current landscape of AI/ML models, their performance benchmarks, and provides actionable protocols for their application in therapeutic and diagnostic development.

Current State of AI Models: Performance Benchmarks

Recent advancements have yielded numerous models with distinct architectures and training datasets. The table below summarizes key quantitative performance metrics for leading models as of recent evaluations.

Table 1: Performance Comparison of Recent AI/ML Models for Epitope Prediction

| Model Name | Core Architecture | Key Training Dataset(s) | Predicted Target(s) | Reported AUC (Range) | Key Strength |

|---|---|---|---|---|---|

| NetMHCpan 4.1 | Artificial Neural Network (ANN) | MHC-peptide binding data (IEDB) | MHC-I & MHC-II binding | 0.90 - 0.95 (MHC-I) | Pan-specificity, broad allele coverage |

| MHCflurry 2.0 | Ensemble of ANNs | Curated mass spectrometry & binding data | MHC-I binding & antigen processing | 0.93 - 0.97 | Integrated antigen processing prediction |

| AlphaFold2 (adapted) | Transformer-based (Evoformer) | Protein Data Bank, structural data | Protein-antigen structure | (Docking Score > 0.8)* | High-resolution structural prediction |

| BepiPred-3.0 | Transformer & LSTM | Structural epitope data (IEDB, DiscoTope) | Linear & Conformational B-cell epitopes | 0.78 (Acc.) | Combined sequence & structure features |

| ElliPro | Thornton's method (geometric) | Protein structures (PDB) | Conformational B-cell epitopes | 0.73 (AUC) | No training required, residue clustering |

| DeepSCAb | Convolutional Neural Network (CNN) | Structural antibody-antigen complexes | Discontinuous epitope paratopes | 0.85 (AUC) | Direct paratope-epitope contact prediction |

| TITAN (TCR Specificity) | Attention-based Deep Learning | VDJdb, MIRA, 10x Genomics data | TCR-pMHC recognition | 0.89 (AUC) | Predicts specificity from TCR sequence |

*Not a traditional AUC; reported as high prediction accuracy for complex formation.

Experimental Protocols

Protocol 3.1: In Silico Prediction of MHC-I Binding Peptides Using AI Tools

Objective: To predict high-affinity candidate neoantigens from tumor somatic mutation data for vaccine design. Materials: Tumor sequencing data (VCF file), reference proteome, high-performance computing (HPC) or cloud environment. Procedure:

- Data Preprocessing: Use a variant calling pipeline (e.g., GATK) to identify somatic missense mutations. Translate mutated sequences using bcftools csq or similar.

- Peptide Extraction: For each mutated protein sequence, generate all possible 8-11mer peptides spanning the mutation site using netMHCpan-4.1's peptide2score or a custom Python script.

- AI Model Prediction:

a. Install netMHCpan-4.1 and/or MHCflurry 2.0 (pip install mhcflurry).

b. Prepare an input file in CSV format listing peptide sequences and the patient's relevant HLA alleles (e.g., HLA-A*02:01, HLA-B*07:02).

c. Run binding prediction with the installed tool(s).

- Ranking & Validation: Rank peptides by predicted binding affinity (typically %Rank < 0.5% or IC50 < 50 nM). Top candidates should be selected for in vitro validation (see Protocol 3.3).
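
The peptide extraction step is a sliding-window enumeration, and a custom script for it can be quite short. The sketch below is one possible version, assuming a 0-based mutation index into the mutated protein sequence; the function name is hypothetical and the example sequence is an illustrative fragment with a substitution at index 11.

```python
def peptides_spanning_mutation(protein, mut_pos, min_len=8, max_len=11):
    """List every k-mer (min_len..max_len) of `protein` that covers the 0-based index mut_pos."""
    peptides = []
    for k in range(min_len, max_len + 1):
        first_start = max(0, mut_pos - k + 1)            # earliest window still covering mut_pos
        last_start = min(mut_pos, len(protein) - k)      # latest window that fits in the protein
        for start in range(first_start, last_start + 1):
            peptides.append(protein[start:start + k])
    return peptides


# Illustrative mutated fragment; index 11 is the substituted residue
mutant_fragment = "MTEYKLVVVGAVGVGKSALTIQLI"
candidates = peptides_spanning_mutation(mutant_fragment, mut_pos=11)
```

When the mutation sits well inside the protein, each length k contributes exactly k windows, so an 8-11mer scan yields 8 + 9 + 10 + 11 = 38 candidates per mutation; mutations near a terminus yield fewer.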

Protocol 3.2: Prediction of B-Cell Conformational Epitopes

Objective: To map potential antibody binding sites on a target viral surface protein.

Materials: Resolved or predicted 3D structure of the target antigen (PDB file or AlphaFold2 model).

Procedure:

- Structure Preparation: If using an AlphaFold2 model, ensure the predicted local distance difference test (pLDDT) score is >70 for regions of interest. Clean the PDB file using pdb-tools or Schrödinger's Protein Preparation Wizard.

- Run ElliPro Analysis:

a. Access the IEDB ElliPro tool online or run the standalone version.

b. Upload the prepared PDB file.

c. Set parameters: Minimum Score = 0.5, Maximum Distance (Å) = 6.0.

d. Submit the job and retrieve results, which include epitope residue clusters and a protrusion index (PI) score.

- Run DeepSCAb or BepiPred-3.0 (Structure-based):

a. For DeepSCAb, submit the antigen structure to the web server or run the model container locally if available.

b. The output will provide a probability score per residue for being part of a conformational epitope.

- Consensus Mapping: Overlay results from ElliPro and DeepSCAb to identify high-confidence consensus regions for downstream monoclonal antibody (mAb) development.
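
Consensus mapping can be as simple as intersecting the residues flagged by each tool. The sketch below assumes the ElliPro output has already been parsed into a list of residue numbers and the per-residue predictor output into a dictionary of probabilities; the 0.5 probability cutoff, the function name, and all residue numbers are assumptions chosen for illustration.

```python
def consensus_epitope_residues(ellipro_residues, per_residue_prob, prob_cutoff=0.5):
    """Residues that are in an ElliPro cluster AND at or above the probability cutoff."""
    high_prob = {res for res, p in per_residue_prob.items() if p >= prob_cutoff}
    return sorted(set(ellipro_residues) & high_prob)


# Hypothetical parsed outputs (residue numbers on the antigen chain)
ellipro_hits = [45, 46, 47, 48, 102, 103]
residue_probabilities = {45: 0.81, 46: 0.77, 47: 0.32, 101: 0.90, 102: 0.66, 103: 0.12}

consensus = consensus_epitope_residues(ellipro_hits, residue_probabilities)
```

A stricter or looser cutoff trades specificity against coverage, so the threshold is best tuned against known epitopes for a related antigen where such data exist.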

Protocol 3.3: In Vitro Validation of AI-Predicted T-Cell Epitopes

Objective: To experimentally validate the immunogenicity of AI-predicted neoantigen candidates.

Materials: Synthetic predicted peptides, donor PBMCs, ELISpot or flow cytometry kits.

Procedure:

- Peptide Synthesis & Preparation: Synthesize top 10-20 predicted peptides (>90% purity). Prepare 1mg/mL stock solutions in DMSO or sterile PBS.

- Donor Cell Isolation: Isolate PBMCs from healthy donor buffy coats (with known HLA matching) or patient samples using Ficoll-Paque density gradient centrifugation.

- T-Cell Stimulation: Seed PBMCs in a 96-well U-bottom plate at 2x10^5 cells/well. Add individual peptides at a final concentration of 1-10 µg/mL. Include positive (PHA) and negative (DMSO/PBS) controls. Culture for 10-14 days, with IL-2 supplementation every 2-3 days.

- Immunogenicity Assay (IFN-γ ELISpot):

a. On day 10-14, harvest cells and re-stimulate with the same peptides for 24-48 hours in an IFN-γ pre-coated ELISpot plate.

b. Develop the plate according to manufacturer's instructions.

c. Count spots using an automated ELISpot reader. A response is typically considered positive if the peptide-stimulated well has at least 2x the spot count of the negative control and >10 spots per well.

- Data Correlation: Correlate the frequency of immunogenic peptides with the AI model's predicted rank/affinity score to iteratively refine the prediction algorithm.
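
The ELISpot positivity rule described in this protocol can be written as a small predicate and applied across all tested peptides. The sketch below encodes the two criteria as stated (at least 2x the negative control and more than 10 spots per well); the peptide names and spot counts are hypothetical.

```python
def is_positive_response(peptide_spots, negative_spots, fold=2.0, min_spots=10):
    """Positive if the well has >= fold x the negative control AND more than min_spots spots."""
    return peptide_spots >= fold * negative_spots and peptide_spots > min_spots


# Hypothetical spot counts per peptide-stimulated well, with a shared negative control
negative_control = 8
spot_counts = {"PEP01": 45, "PEP02": 12, "PEP03": 9, "PEP04": 16}

responders = [pep for pep, spots in spot_counts.items()
              if is_positive_response(spots, negative_control)]
hit_rate = len(responders) / len(spot_counts)
```

The resulting hit rate, computed per bin of predicted rank or affinity, is the quantity that would feed the correlation step.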

Visualizations

AI-Driven Epitope Discovery Workflow

AI Model Architectures for Immunology

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Materials for AI-Prediction Validation

| Item | Function in Validation | Example Product/Supplier |

|---|---|---|

| HLA Typing Kit | Determines patient/donor HLA allelic profile for accurate, personalized AI prediction. | SeCore HLA Sequencing Kits (Thermo Fisher) |

| ELISpot Kit (IFN-γ/IL-2) | Gold-standard for quantifying antigen-specific T-cell responses in PBMCs. | Human IFN-γ ELISpotPRO (Mabtech) |

| pMHC Multimers (Tetramers/Dextramers) | Direct ex vivo staining and isolation of epitope-specific T-cells via flow cytometry. | PE-conjugated pMHC Tetramers (Immudex) |

| Peptide Pools & Libraries | Synthetic peptides for high-throughput screening of AI-predicted epitopes. | PepMix Peptide Pools (JPT Peptide Technologies) |

| Recombinant MHC Molecules | For in vitro binding assays (e.g., ELISA) to confirm AI-predicted affinity. | Recombinant HLA-A*02:01 (Bio-Techne) |

| Cell Line: T2 (TAP-deficient) | Presents exogenous peptides on MHC-I; used in binding/stabilization assays. | ATCC CRL-1992 |

| Flow Cytometry Panel Antibodies | Phenotyping and functional analysis of activated T-cells (CD3, CD8, CD137, etc.). | Anti-human CD3/CD8/CD137 (BioLegend) |

| Cytokine Bead Array (CBA) | Multiplex quantification of cytokines released by activated immune cells. | LEGENDplex Human CD8/NK Panel (BioLegend) |

Within the broader thesis on AI and machine learning for immunology research, this document details the application of computational pipelines to discover robust, biologically relevant signatures from multi-omics data. The integration of genomics, transcriptomics, proteomics, and metabolomics, powered by machine learning, is revolutionizing the identification of diagnostic and prognostic biomarkers in complex immunological diseases, enabling precision medicine and accelerating therapeutic development.

Table 1: Comparative Overview of Primary Omics Technologies for Biomarker Discovery

| Omics Layer | Typical Assay | Key Readout | Throughput | Approx. Cost per Sample | Primary Biomarker Class |

|---|---|---|---|---|---|

| Genomics | Whole Genome Sequencing (WGS) | DNA Sequence Variants | High | $600 - $1,000 | Germline/Somatic Mutations |

| Transcriptomics | RNA-Seq / Single-Cell RNA-Seq | Gene Expression Levels | High | $500 - $3,000 | mRNA, lncRNA, Gene Signatures |

| Proteomics | LC-MS/MS / Olink / SomaScan | Protein Abundance | Medium-High | $200 - $800 | Proteins, PTMs |

| Metabolomics | LC-MS / GC-MS | Metabolite Abundance | Medium | $300 - $600 | Small Molecules |

Table 2: Performance Metrics of Representative ML Models in Multi-Omics Integration

| Study Focus (Disease) | ML Model Used | Data Types Integrated | Reported AUC | Key Biomarkers Identified |

|---|---|---|---|---|

| Rheumatoid Arthritis Prognosis | Random Forest + Cox PH | RNA-Seq, Cytokine Proteomics | 0.89 | MMP3, CXCL13, S100A12 |

| Sepsis Outcome Prediction | Deep Neural Network (DNN) | WGS, Plasma Metabolomics, Clinical Labs | 0.91 | Lactate, ARG1 expression |

| IBD Subtyping (Crohn's vs UC) | Multi-kernel Learning | Microbiome, Serology, Transcriptomics | 0.94 | Anti-GP2, *Faecalibacterium* abundance |

Application Notes & Detailed Protocols

Protocol: An Integrated Pipeline for Multi-Omics Biomarker Discovery Using AI

Objective: To identify a prognostic protein signature for survival prediction in diffuse large B-cell lymphoma (DLBCL) by integrating transcriptomic and proteomic data.

3.1.1. Pre-processing and Quality Control (QC)

- RNA-Seq Data: Use FastQC for raw read QC. Trim adapters with Trim Galore. Align to GRCh38 with STAR. Generate gene counts using featureCounts. Normalize using TPM and correct for batch effects with ComBat from the sva R package.

- Proteomics Data (LC-MS/MS): Process .raw files with MaxQuant (v2.0). Use the UniProt human database. Filter for 1% FDR at peptide and protein levels. Normalize using median scaling and log2 transformation. Impute missing values using the missForest R package; because missForest assumes values are missing at random, left-censored (MNAR) values are usually better handled with a dedicated left-censored method such as QRILC or MinProb.

3.1.2. Dimensionality Reduction and Feature Selection

- Concatenation-Based Integration: Merge normalized RNA and protein data (for common genes/proteins) into a single matrix.

- Unsupervised Feature Filtering: Remove features with near-zero variance using the caret R package.

- Supervised Feature Selection: Apply LASSO (Least Absolute Shrinkage and Selection Operator) regression with a Cox proportional hazards loss function using the glmnet R package. Perform 10-fold cross-validation to select the optimal lambda (λ) value minimizing partial likelihood deviance.

3.1.3. Model Building and Validation

- Prognostic Model Construction: Build a multivariate Cox Proportional Hazards model using the top 15 features selected by LASSO.

- Risk Score Calculation: For each patient, compute a risk score as the linear combination of selected feature expressions weighted by their Cox regression coefficients.

- Validation: Split data into 70% training and 30% validation cohorts before feature selection and model fitting, so that the validation cohort remains independent. Assess model performance using:

- Kaplan-Meier Analysis: Stratify patients into high/low-risk groups by median risk score. Log-rank test for significance.

- Time-dependent ROC Analysis: Calculate the area under the curve (AUC) for 1-, 3-, and 5-year overall survival using the timeROC R package.
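
The risk score and median stratification described above reduce to a weighted sum followed by a threshold. The sketch below illustrates this with two placeholder features; the feature names, coefficients, and patient values are hypothetical and stand in for the LASSO-selected features and fitted Cox coefficients.

```python
import statistics


def risk_score(expression, coefficients):
    """Linear combination of feature values weighted by Cox regression coefficients."""
    return sum(coefficients[f] * expression[f] for f in coefficients)


def stratify_by_median(scores):
    """Label each patient 'high' if above the cohort median risk score, else 'low'."""
    cutoff = statistics.median(scores.values())
    return {pid: ("high" if s > cutoff else "low") for pid, s in scores.items()}


# Hypothetical coefficients (log hazard ratios) and normalized expression values
coefficients = {"FEATURE_A": 0.5, "FEATURE_B": -0.2}
patients = {
    "P1": {"FEATURE_A": 2.0, "FEATURE_B": 1.0},
    "P2": {"FEATURE_A": 1.0, "FEATURE_B": 3.0},
    "P3": {"FEATURE_A": 4.0, "FEATURE_B": 0.0},
    "P4": {"FEATURE_A": 0.0, "FEATURE_B": 1.0},
}

scores = {pid: risk_score(expr, coefficients) for pid, expr in patients.items()}
groups = stratify_by_median(scores)
```

For an unbiased estimate, the median cutoff would be taken from the training cohort and then applied unchanged to the validation cohort.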

3.1.4. Biological Interpretation

- Pathway Enrichment: Perform Gene Set Enrichment Analysis (GSEA) on the genes corresponding to selected protein biomarkers using the fgsea R package against the Hallmark and KEGG collections.

- Network Analysis: Construct a protein-protein interaction (PPI) network using the STRING database and visualize in Cytoscape to identify hub genes.

Protocol: Single-Cell Multi-Omics Workflow for Immune Cell Biomarker Discovery

Objective: To identify rare, disease-associated immune cell populations and their marker genes from CITE-seq (Cellular Indexing of Transcriptomes and Epitopes by Sequencing) data.

3.2.1. Data Processing

- Cell Ranger: Process raw CITE-seq FASTQ files using Cell Ranger (v7.0) with the count function, specifying the feature barcode kit.

- Quality Control in R/Seurat: Load the matrix into Seurat. Retain cells with:

- Unique feature counts (nFeature_RNA) between 200 and 6000.

- Total RNA counts (nCount_RNA) < 40,000.

- Mitochondrial gene percentage < 15%.

- ADT (Antibody-Derived Tag) Normalization: Normalize protein (ADT) data using centered log ratio (CLR) transformation.
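
As a rough illustration of what a centered log ratio does to ADT counts, the sketch below implements a common pseudocount variant: take the log of each count plus one, then subtract the mean of those logs. This is an assumption-labeled teaching version; Seurat's own CLR implementation differs in detail, and the counts shown are invented.

```python
import math


def clr_transform(counts, pseudocount=1.0):
    """Centered log ratio: log(count + pseudocount) minus the mean of those log values."""
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)
    return [v - mean_log for v in logs]


# Hypothetical ADT counts for three antibodies in one cell
adt_counts = [0, 9, 99]
clr_values = clr_transform(adt_counts)
```

Because the values are centered, they sum to zero within each vector, which removes differences in overall antibody capture and makes the remaining variation compositional.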

3.2.2. Integrated Analysis

- Dimensionality Reduction: For RNA data, perform PCA on variable features. For ADT data, run PCA directly on CLR-transformed counts.

- Weighted Nearest Neighbors (WNN) Integration: Use the FindMultiModalNeighbors function in Seurat to construct a WNN graph integrating RNA and protein modalities.

- Clustering and UMAP: Generate a shared UMAP visualization based on the WNN graph. Perform graph-based clustering (FindClusters, resolution=0.5).

3.2.3. Differential Biomarker Identification

- Use the FindAllMarkers function to find genes and surface proteins significantly enriched (avg_log2FC > 0.5, p_val_adj < 0.01) in each cluster compared to all others. This yields a combined gene-protein signature for each immune cell population.

Visualization Diagrams

Workflow for AI-Powered Multi-Omics Biomarker Discovery

Immune Signaling Pathway Yielding Soluble Biomarkers

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Multi-Omics Biomarker Discovery

| Category | Product/Kit Name | Provider | Key Function in Workflow |

|---|---|---|---|

| Sample Prep (Proteomics) | S-Trap Micro Columns | ProtiFi | Efficient digestion and cleanup of complex protein samples for LC-MS/MS, ideal for challenging lysates. |

| Sample Prep (Transcriptomics) | SMART-Seq v4 Ultra Low Input RNA Kit | Takara Bio | Highly sensitive cDNA synthesis and amplification for RNA-seq from low-input or single-cell samples. |

| Multiplex Immunoassay | Olink Target 96 or Explore | Olink | Proximity Extension Assay (PEA) technology for highly specific, multiplex quantification of 92-3000+ proteins in minute sample volumes. |

| Spatial Multi-omics | Visium Spatial Gene Expression | 10x Genomics | Enables whole transcriptome analysis while retaining tissue architecture context, crucial for tumor microenvironment studies. |

| Data Analysis Suite | Partek Flow | Partek | GUI-based bioinformatics software with built-in, optimized pipelines for end-to-end statistical analysis of multi-omics data. |

| AI/ML Platform | DriverMap Immune Profiling | Cellecta | Combinatorial barcoding and NGS for highly multiplexed immune cell profiling, with integrated ML analysis tools for biomarker detection. |

Introduction

Within the broader thesis on AI and machine learning for immunology research, digital twins represent a paradigm shift. These are dynamic, multi-scale computational models of individual biological systems, continuously updated with experimental and clinical data. This application note details protocols and frameworks for developing immune system digital twins to simulate response dynamics and predict disease trajectories, accelerating therapeutic discovery.

Core Data and Modeling Approaches

Table 1: Quantitative Data for Immune Digital Twin Calibration

| Data Type | Exemplary Source/Assay | Typical Scale/Resolution | Primary Use in Model |

|---|---|---|---|

| Single-Cell RNA Sequencing | 10x Genomics, Smart-seq2 | 1,000 - 100,000 cells; 1,000-20,000 genes/cell | Define cell states & heterogeneity; infer signaling activity |

| Cytokine/Chemokine Profiling | Luminex/MSD Assay | 30-100 analytes; pg/mL sensitivity | Validate & calibrate intercellular communication |

| Immune Cell Phenotyping | Mass Cytometry (CyTOF) | 40-50 protein markers/cell | Quantify cell population frequencies & activation states |

| T-Cell Receptor Repertoire | Adaptive Biotechnologies | 1e6 - 1e8 unique sequences | Model antigen-specific clonal expansion & diversity |

| Longitudinal Clinical Labs | CBC with Differential, CRP | Daily to monthly time series | Track systemic immune status & disease flares |

Protocol 1: Developing a Multi-Scale Agent-Based Model (ABM) of Acute Inflammation

Objective: To construct a spatially-resolved digital twin of innate immune response to pathogen challenge.

Materials & Workflow:

- Define Computational Environment: Use modeling platforms like PhysiCell or CompuCell3D.

- Agent Specifications: Program agents (e.g., macrophages, neutrophils, epithelial cells) with rules for:

- Chemotaxis (following [IL-8], [MCP-1] gradients).

- Phagocytosis (probability based on pathogen opsonization state).

- Cytokine Secretion (state-dependent rates).

- Apoptosis/Necrosis (stochastic or signal-driven).

- Parameterization: Import kinetic rates (e.g., cytokine diffusion, decay) from databases like BioNumbers.

- Calibration: Use high-content microscopy data of in vitro immune cell trafficking to fit motility parameters.

- Validation: Challenge the simulation with a virtual pathogen load and compare the emergent cytokine dynamics (e.g., TNF-α, IL-6 time-course) to in vivo murine data.
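
The interplay between a diffusing chemokine field and a chemotactic agent can be illustrated on a one-dimensional grid. The sketch below is a deliberately tiny toy model in plain Python, not PhysiCell or CompuCell3D code; the diffusion and decay constants, the secretion rate, and the grid size are arbitrary assumptions.

```python
def diffuse_and_decay(field, diffusion=0.2, decay=0.05):
    """One explicit finite-difference step with reflecting (no-flux) boundaries."""
    n = len(field)
    new = []
    for i in range(n):
        left = field[i - 1] if i > 0 else field[i]
        right = field[i + 1] if i < n - 1 else field[i]
        laplacian = left - 2 * field[i] + right
        new.append((field[i] + diffusion * laplacian) * (1 - decay))
    return new


def chemotaxis_step(position, field):
    """Move one grid cell toward the highest nearby concentration; stay put on ties."""
    candidates = [p for p in (position - 1, position, position + 1) if 0 <= p < len(field)]
    # The second key element prefers the current position when concentrations are equal
    return max(candidates, key=lambda p: (field[p], p == position))


field = [0.0] * 11      # chemokine concentration (e.g., an IL-8-like signal) per grid cell
source, agent = 8, 2    # position of the secreting cell and of one neutrophil-like agent
for _ in range(40):
    field[source] += 10.0                     # constant secretion at the source
    field = diffuse_and_decay(field)
    agent = chemotaxis_step(agent, field)     # agent climbs the local gradient
```

The agent stays still until the gradient reaches it, then walks up the gradient and settles at the source; full ABM platforms add stochastic motility, multiple cell types, and three-dimensional substrates on top of this basic loop.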

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Digital Twin Validation

| Reagent/Kit | Provider Examples | Function in Context |

|---|---|---|

| Phenotyping Antibody Panels | BioLegend, BD Biosciences | High-parameter cell state definition for model ontology. |

| Recombinant Cytokines & Inhibitors | R&D Systems, PeproTech | Perturb signaling networks in vitro to test model predictions. |

| Organ-on-a-Chip Platforms | Emulate, MIMETAS | Generate controlled, multimodal time-series data for calibration. |

| LIVE/DEAD Cell Viability Assays | Thermo Fisher Scientific | Quantify agent death rules in the simulation (apoptosis/necrosis). |

| Multiplex Immunoassay Panels | Meso Scale Discovery (MSD) | Measure cytokine network outputs for model validation. |

Protocol 2: Integrating Machine Learning for Parameter Inference and Model Personalization

Objective: To calibrate a patient-specific digital twin from sparse, longitudinal omics data.

Methodology:

- Build a Prior Model: Use ordinary differential equations (ODEs) representing core pathways (e.g., IFN signaling, T-cell exhaustion).

- Define Likelihood Function: Use a Gaussian process to model how simulation outputs (e.g., predicted CD8+ T cell count) relate to observed clinical data.

- Parameter Inference: Employ Markov Chain Monte Carlo (MCMC) sampling (e.g., PyMC3, Stan) to estimate the posterior distribution of model parameters given the observed patient data, or Bayesian optimization to find the parameter set that maximizes the likelihood.

- Sensitivity Analysis: Use the trained model to perform in-silico knock-outs of key parameters (e.g., PD-1/PD-L1 interaction strength) to identify potential therapeutic targets.
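
The parameter inference step can be illustrated with a hand-written random-walk Metropolis sampler on a one-parameter model. This sketch makes several simplifying assumptions: the "model" is the analytic solution of a single decay ODE rather than a full pathway model, the likelihood is a fixed-variance Gaussian rather than a Gaussian process, the prior is flat on positive values, and the data are synthetic. It does not use PyMC3 or Stan.

```python
import math
import random


def simulate(k, times, y0=10.0):
    """Analytic solution of dy/dt = -k*y, a stand-in for a full ODE immune model."""
    return [y0 * math.exp(-k * t) for t in times]


def log_likelihood(k, times, observed, sigma=0.5):
    """Gaussian log-likelihood (up to an additive constant) of the observations given k."""
    predicted = simulate(k, times)
    return -sum((o - p) ** 2 for o, p in zip(observed, predicted)) / (2 * sigma ** 2)


def metropolis(times, observed, n_steps=5000, start=1.0, step_sd=0.05, seed=0):
    """Random-walk Metropolis sampler with a flat prior on k > 0."""
    rng = random.Random(seed)
    k, ll = start, log_likelihood(start, times, observed)
    samples = []
    for _ in range(n_steps):
        proposal = k + rng.gauss(0.0, step_sd)
        if proposal > 0:  # proposals outside the prior support are rejected
            ll_new = log_likelihood(proposal, times, observed)
            if rng.random() < math.exp(min(0.0, ll_new - ll)):
                k, ll = proposal, ll_new
        samples.append(k)
    return samples


times = list(range(10))
observed = simulate(0.5, times)            # synthetic, noise-free "patient" data (true k = 0.5)
samples = metropolis(times, observed)
posterior = samples[1000:]                 # discard burn-in
posterior_mean = sum(posterior) / len(posterior)
```

The retained samples approximate the posterior over the decay rate, so their spread gives a direct measure of how well the sparse data constrain the parameter; production work would rely on an established sampler with convergence diagnostics.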

Visualization of Key Concepts

(Title: Digital Twin Personalization Workflow)

(Title: IFN-γ JAK-STAT Signaling Pathway)

Application Note: Simulating Checkpoint Inhibitor Therapy in a Tumor Microenvironment (TME) Digital Twin

A calibrated TME digital twin, integrating agents for T-cells, cancer cells, and myeloid-derived suppressor cells (MDSCs), can test combination therapies. In-silico protocol:

1) Initialize model with patient-specific T-cell clonality and tumor antigen data.

2) Simulate anti-PD-1 therapy.

3) Identify non-responders by analyzing simulated MDSC recruitment and adenosine signaling.

4) Propose and test in-silico combination with an A2AR antagonist.

5) Output predicted cytokine shifts (e.g., IFN-γ/IL-10 ratio) for in-vivo validation.

Conclusion

Digital twins, powered by AI-driven calibration and multi-scale modeling, provide a powerful in-silico sandbox for immunology. They enable hypothesis generation, de-risk clinical trials through patient stratification, and offer a foundational tool for the thesis vision of a fully integrated, predictive AI platform for immunology research and therapeutic development.

Application Notes: AI-Driven Target Identification

The integration of AI into immunology research has fundamentally altered the early-stage discovery pipeline for novel drugs and vaccines. Within the broader thesis of applying machine learning to immunology, these tools primarily accelerate the identification and validation of high-potential biological targets—proteins, genes, or pathways involved in disease mechanisms.

1.1. Key Applications & Quantitative Impact

Recent studies and industrial reports quantify the acceleration and increased success rates enabled by AI/ML.

Table 1: Quantitative Impact of AI/ML in Early-Stage Drug Discovery

| Metric | Traditional Approach | AI/ML-Augmented Approach | Data Source (Year) |

|---|---|---|---|

| Target Identification Timeline | 12-24 months | 3-6 months | Industry Benchmarking (2023) |

| Average Cost per Target Identified | $2M - $5M | $200K - $1M | McKinsey Analysis (2024) |

| Predicted Target Success Rate (Phase I Entry) | ~5% | 10-15% | Nature Reviews Drug Discovery (2023) |

| Number of Novel Immune Checkpoints Proposed (2020-2024) | ~5 manually | 50+ via ML mining | Literature & Patent Analysis (2024) |

| Throughput for Compound Screening (Virtual) | 10^3 - 10^5 compounds/week | 10^7 - 10^9 compounds/week | DeepMind/Isomorphic Labs (2023) |

1.2. AI Modalities in Immunology Research

- Natural Language Processing (NLP): Models like BioBERT and PubMedBERT mine millions of scientific publications, clinical trial records, and patents to propose hypothetical target-disease associations.

- Deep Learning on Omics Data: Convolutional Neural Networks (CNNs) and Graph Neural Networks (GNNs) analyze single-cell RNA-seq, proteomics, and spatial transcriptomics data to identify novel cell states, receptor-ligand pairs, and dysregulated pathways in autoimmune diseases or cancer.

- Generative AI for Antigen Design: Diffusion models and variational autoencoders (VAEs) are used to design novel vaccine antigens (e.g., for SARS-CoV-2 variants, influenza) and therapeutic antibodies with optimized binding and developability profiles.

Experimental Protocols

Protocol 1: In Silico Target Prioritization Using Multi-Omics Integration

Objective: To identify and prioritize novel immuno-oncology targets by integrating publicly available transcriptomic, proteomic, and genetic datasets using a supervised ML pipeline.

Materials & Reagents:

- High-performance computing cluster or cloud instance (Google Cloud, AWS).

- Curated disease datasets from TCGA (cancer), GTEx (normal tissue), and GEO repositories.

- Python environment with libraries: Scanpy, PyTorch, scikit-learn, pandas.

Procedure:

- Data Curation: Download RNA-seq and survival data for a cancer cohort (e.g., TCGA-SKCM). Obtain single-cell RNA-seq data of tumor-infiltrating lymphocytes from a related study (e.g., from GEO).

- Feature Engineering: Using the bulk RNA-seq data, calculate differential gene expression between responders and non-responders to immune checkpoint blockade. From scRNA-seq data, use graph-based clustering to identify unique T-cell exhaustion signatures.

- Model Training: Train a gradient-boosted tree model (XGBoost) using gene expression features, mutation status, and pathway activity scores to predict clinical response. Use Shapley Additive Explanations (SHAP) for model interpretability.

- Target Prioritization: Rank genes by their SHAP value importance. Cross-reference top candidates with cell surface protein databases (e.g., The Human Protein Atlas) and CRISPR knockout viability screens (DepMap) to filter for essential, druggable, and immunologically relevant targets.

- Validation: Perform in silico validation by checking target gene expression correlation with CD8+ T-cell infiltration across multiple independent cohorts.
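
The prioritization step can be sketched as ranking features by mean absolute SHAP value and then filtering against an external annotation set. The example below assumes the SHAP values have already been computed elsewhere and are available as a samples-by-features matrix; the gene names, values, and the surface-protein set are placeholders, not real results.

```python
def rank_by_mean_abs_shap(shap_values, feature_names):
    """Sort features by mean |SHAP| across samples, most important first."""
    n = len(shap_values)
    importance = {
        name: sum(abs(row[j]) for row in shap_values) / n
        for j, name in enumerate(feature_names)
    }
    return sorted(importance.items(), key=lambda item: item[1], reverse=True)


# Hypothetical SHAP matrix: rows are patients, columns are gene features
feature_names = ["GENE_A", "GENE_B", "GENE_C"]
shap_values = [
    [0.30, -0.05, 0.10],
    [-0.20, 0.02, 0.15],
    [0.25, -0.01, -0.05],
]
ranked = rank_by_mean_abs_shap(shap_values, feature_names)

# Hypothetical annotation set standing in for a cell-surface protein database lookup
surface_proteins = {"GENE_B", "GENE_C"}
candidates = [gene for gene, _ in ranked if gene in surface_proteins]
```

Using the absolute value keeps genes whose effect on predicted response is strongly negative as well as strongly positive, since both may be therapeutically relevant.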

Protocol 2: Generative Design of a Therapeutic Antibody Fragment (scFv)

Objective: To use a pre-trained protein language model and a diffusion model to generate novel single-chain variable fragment (scFv) sequences against a specified target antigen epitope.

Materials & Reagents:

- Pre-trained protein model (e.g., ESM-2 from Meta AI).

- Structural data (PDB file) of the target antigen.

- Known antibody-antigen complex structures for conditioning (e.g., from SAbDab database).

- GPU-accelerated computing environment.

Procedure:

- Epitope Definition: Extract the target epitope's amino acid sequence and structural coordinates from the PDB file.

- Conditioning the Model: Encode the epitope sequence using ESM-2 to generate a continuous vector representation ("conditioning vector").

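- Sequence Generation: Input the conditioning vector into a diffusion model specialized for protein design (e.g., RFdiffusion). The model iteratively denoises a random starting point to produce novel scFv complementarity-determining region (CDR) designs predicted to bind the epitope. RFdiffusion generates backbone structures rather than sequences, so a sequence-design step (e.g., ProteinMPNN) is needed to obtain the final amino acid sequences.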

- In Silico Affinity Maturation: Score the generated scFv designs with a trained predictor, for example structure-prediction confidence from AlphaFold2 used as a proxy for binding, or a dedicated affinity predictor. Select the top 100 designs for further analysis.

- Stability & Developability Filtering: Pass the top designs through computational filters (NetCharge, aggregation propensity, instability index) to eliminate non-viable candidates.

- Output: The final output is a list of 10-20 novel scFv amino acid sequences ready for in vitro synthesis and validation.
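
The Stability & Developability Filtering step can be illustrated with two very crude sequence heuristics. This is a sketch under stated assumptions: the charge estimate simply counts K/R against D/E and ignores histidine and termini, the hydrophobic fraction is only a rough proxy for aggregation propensity, the thresholds are arbitrary, and the example sequences are hypothetical. The instability index is omitted because it needs a dipeptide weight table.

```python
def net_charge(seq):
    """Crude net charge near neutral pH: +1 per K/R, -1 per D/E (histidine ignored)."""
    positive = sum(seq.count(aa) for aa in "KR")
    negative = sum(seq.count(aa) for aa in "DE")
    return positive - negative


def hydrophobic_fraction(seq):
    """Fraction of strongly hydrophobic residues, used here as a rough aggregation proxy."""
    return sum(seq.count(aa) for aa in "AILMFVW") / len(seq)


def passes_filters(seq, max_abs_charge=5, max_hydrophobic=0.45):
    """Keep a candidate only if both heuristics fall inside the (assumed) limits."""
    return abs(net_charge(seq)) <= max_abs_charge and hydrophobic_fraction(seq) <= max_hydrophobic


# Hypothetical CDR-like fragments, for illustration only.
candidates = ["ARDYYGSSYFDY", "KKKKKKRRGS", "VVVVLLLLIIGS"]
viable = [seq for seq in candidates if passes_filters(seq)]
print(viable)
```

In practice these checks would be replaced by dedicated developability tools; the point is only that each filter is a per-sequence predicate applied before the final short list is chosen.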

[Figure: AI-Driven Immunology Discovery Pipeline]

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Tools for AI-Guided Immunology Experiments

| Reagent/Tool Category | Specific Example | Function in AI-Integrated Workflow |

|---|---|---|

| High-Plex Protein Profiling | Olink Explore Proximity Extension Assay (PEA) Panels | Validates AI-predicted protein targets quantitatively in patient sera or cell supernatants. Provides high-quality training data for models. |

| Single-Cell Multiomics Kits | 10x Genomics Single Cell Immune Profiling Kit | Generates paired V(D)J and gene expression data from T/B cells. Crucial for training models on immune repertoire and cell state. |

| CRISPR Screening Libraries | Synthego or Horizon Discovery pooled gRNA libraries | Enables functional validation of AI-prioritized gene targets via high-throughput knockout/activation screens. |

| Recombinant Proteins & Antibodies | Sino Biological or ACROBiosystems recombinant viral antigens/immune checkpoint proteins | Used for in vitro binding and functional assays to validate AI-designed antibodies or vaccine candidates. |

| Cell-Based Reporter Assays | Promega Bio-Glo or NFAT/NF-κB Luciferase Reporter Cell Lines | Quantifies functional immune cell activation or inhibition by AI-predicted therapeutic molecules. |

| AI-Ready Data Repositories | ImmuneSpace (NIH), The Cancer Imaging Archive (TCIA) | Curated, standardized datasets (transcriptomic, flow cytometry, imaging) for training and benchmarking ML models. |

Deep learning has become a key tool for neoantigen discovery and prioritization. Neoantigens, tumor-specific peptides arising from somatic mutations, are attractive targets for personalized cancer vaccines. The traditional pipeline for neoantigen identification is slow, expensive, and prone to false positives. Deep learning models are now being integrated into clinical trial protocols to predict which mutations will yield immunogenic peptides capable of eliciting a potent, tumor-specific T-cell response.

Application Notes: The DL-Powered Neoantigen Pipeline

Core Deep Learning Applications

- Neoantigen Prediction: Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) analyze sequencing data (Whole Exome Sequencing and RNA-Seq) to predict Major Histocompatibility Complex (MHC) binding affinity, peptide stability, and likelihood of proteasomal processing.

- Immunogenicity Scoring: Advanced models integrate features beyond binding, such as TCR recognition probability, to rank candidate neoantigens by their predicted ability to activate T-cells.

- Clonal Neoantigen Prioritization: Algorithms assess variant allele frequency and cancer cell fraction to prioritize neoantigens derived from clonal (vs. subclonal) mutations, targeting the core of the tumor and reducing escape.
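
To make the clonal prioritization idea concrete, the sketch below converts a variant allele frequency (VAF) into an estimated cancer cell fraction (CCF) using a commonly used relation: expected VAF = purity × multiplicity × CCF / (purity × tumor copy number + (1 − purity) × normal copy number). The function names, the 0.9 clonality threshold, and the example values are assumptions for illustration; dedicated tools such as PyClone-VI model this far more carefully.

```python
def cancer_cell_fraction(vaf, purity, tumor_cn=2, multiplicity=1, normal_cn=2):
    """Estimate the fraction of cancer cells carrying a mutation, capped at 1.0."""
    if not 0 < purity <= 1:
        raise ValueError("purity must be in (0, 1]")
    # Average number of copies of the locus per cell in the mixed tumor/normal sample.
    total_copies = purity * tumor_cn + (1 - purity) * normal_cn
    ccf = vaf * total_copies / (purity * multiplicity)
    return min(ccf, 1.0)


def is_clonal(ccf, threshold=0.9):
    """Call a mutation clonal if its CCF meets an assumed threshold."""
    return ccf >= threshold


# Hypothetical mutations in a diploid region of a sample with 60% tumor purity.
for name, vaf in [("mut_A", 0.30), ("mut_B", 0.10)]:
    ccf = cancer_cell_fraction(vaf, purity=0.6)
    print(name, round(ccf, 2), "clonal" if is_clonal(ccf) else "subclonal")
```

With 60% purity and no copy-number change, a heterozygous clonal mutation is expected at a VAF of about 0.30, which is why the first example comes out clonal and the second does not.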

Quantitative Impact on Trial Design

Table 1: Performance Comparison of Traditional vs. DL-Enhanced Neoantigen Screening

| Metric | Traditional Pipeline (Mass Spectrometry & Biochemical Assays) | DL-Enhanced Pipeline | Data Source (2023-2024) |

|---|---|---|---|

| Time from Biopsy to Vaccine Design | 3-6 months | 4-6 weeks | Analysis of recent trials (NCT03558958, NCT04263051) |

| Candidate Neoantigens per Patient | 50-100 | 10-20 (high-confidence) | Model validation studies |

| Predicted MHC-I Binding Accuracy (AUC) | ~0.75 (NetMHCpan-4.0) | >0.90 (NetMHCpan-4.1, MHCflurry 2.0) | Benchmark publications |

| Positive Predictive Value for Immunogenicity | <10% | 25-40% | Integrated immunogenicity model reports |

Experimental Protocols

Protocol 3.1: In Silico Neoantigen Prediction & Prioritization Using Deep Learning

Objective: To identify and prioritize patient-specific neoantigen candidates from tumor sequencing data for vaccine design.

Materials (Digital Toolkit):

- Input Data: Matched tumor-normal WES (≥150x coverage) and tumor RNA-Seq (≥50M reads).

- Software: Python/R environment, Docker/Singularity for containerization.

- Key DL Tools: NetMHCpan-4.1 (MHC binding), MHCflurry 2.0 (affinity/stability), DeepImmuno (immunogenicity), pVACseq (pipeline integration).

- Reference Genome: GRCh38/hg38.

Procedure:

- Somatic Variant Calling: Use Mutect2 (GATK) or Strelka2 on aligned WES data. Filter for somatic, non-synonymous, exonic mutations.

- HLA Typing: Execute OptiType on RNA-Seq data, or Polysolver on WES data, to determine patient-specific HLA class I alleles. Neither tool types class II, so MHC-II predictions additionally require a dedicated class II typing tool.

- Neopeptide Generation: For each somatic mutation, generate all possible 8-11mer (MHC-I) and 13-17mer (MHC-II) candidate peptides.

- DL-Based Prediction:

a. MHC Binding Prediction: Run all candidate peptides through NetMHCpan-4.1 (netMHCpan -BA) for each patient HLA allele. Retain peptides with %Rank < 2.0 (weak binders) and flag those with %Rank < 0.5 as strong binders.

b. Peptide Processing & Presentation: Integrate predictors for proteasomal cleavage (NetChop) and peptide-MHC complex stability (MHCflurry).

- Immunogenicity Prioritization: Score filtered peptides using DeepImmuno or analogous CNN models trained on TCR-peptide-MHC interaction data.

- Clonality Filter: Cross-reference selected mutations with copy-number and clonality analysis (e.g., via PyClone-VI) to prioritize clonal neoantigens.

- Final Vaccine Cocktail Selection: Select the top 10-20 ranked neoantigens, ensuring diversity in HLA restriction and source gene expression (from RNA-Seq TPM values).
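
The Neopeptide Generation step amounts to a sliding window over the mutated protein, keeping only windows that span the altered residue. The sketch below shows this for a single missense substitution and MHC-I lengths (8 to 11). The function name, the 0-based indexing convention, and the example protein are illustrative assumptions; insertions, deletions, and frameshifts need additional handling that is not shown.

```python
def mutant_peptides(protein, mut_index, mut_aa, lengths=range(8, 12)):
    """Return all peptides of the given lengths that span a substituted residue.

    mut_index is the 0-based position of the substitution in the protein sequence.
    """
    mutated = protein[:mut_index] + mut_aa + protein[mut_index + 1:]
    peptides = set()
    for k in lengths:
        # A window starting at `start` covers the mutation if start <= mut_index < start + k.
        first_start = max(0, mut_index - k + 1)
        last_start = min(mut_index, len(mutated) - k)
        for start in range(first_start, last_start + 1):
            peptides.add(mutated[start:start + k])
    return sorted(peptides)


# Hypothetical 25-residue protein with an S -> L substitution at index 12.
wild_type = "MKTAYIAKQRQISFVKSHFSRQLEE"
candidates = mutant_peptides(wild_type, 12, "L")
print(len(candidates), candidates[:3])
```

Away from the protein termini, a single substitution yields k windows for each length k, so the 8-11mer set contains 8 + 9 + 10 + 11 = 38 candidates per mutation before any binding filter is applied.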

Protocol 3.2: In Vitro Validation of DL-Predicted Neoantigens

Objective: To experimentally confirm the immunogenicity of computationally prioritized neoantigens.

Materials (Research Reagent Solutions):

- Patient PBMCs: Cryopreserved peripheral blood mononuclear cells from leukapheresis.

- Peptides: Synthetic peptides (≥95% purity, GMP-grade for trials) representing predicted neoantigens and wild-type counterparts.

- Cell Culture Media: X-VIVO 15 serum-free medium, supplemented with IL-2 (for expansion).

- Assay Kits: ELISpot kit (IFN-γ), flow cytometry antibodies (CD3, CD4, CD8, CD137, cytokines), tetramer/multimer staining kits (patient HLA-specific).

Procedure:

- Peptide Pool Stimulation: Isolate CD8+/CD4+ T-cells from PBMCs. Co-culture with autologous antigen-presenting cells (APCs) pulsed with pools of predicted neoantigen peptides.

- T-Cell Expansion: Add low-dose IL-2 (50 IU/mL) on day 3. Re-stimulate weekly with peptide-pulsed APCs.

- Immunogenicity Assay (Day 14): a. IFN-γ ELISpot: Plate expanded T-cells with individual peptide-pulsed APCs. Develop and count spots; a significant increase over wild-type control indicates neoantigen-specific response. b. Activation-Induced Marker (AIM) Assay: Analyze by flow cytometry for co-expression of CD137/CD69 on T-cells after peptide re-stimulation. c. pMHC Multimer Staining: Use commercially synthesized fluorescent multimers for direct detection of antigen-specific T-cells.

- Data Correlation: Compare in vitro response strength with the model-derived immunogenicity score to refine the DL algorithm.
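
One simple way to carry out the Data Correlation step is a rank correlation between model scores and assay readouts, since the two are on different scales and the relationship need not be linear. The sketch below implements Spearman's rho in plain Python. The choice of Spearman correlation is an assumption rather than something the protocol prescribes, and the scores and spot counts are made-up illustrative values.

```python
def ranks(values):
    """Return 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on the ranks."""
    return pearson(ranks(x), ranks(y))


# Hypothetical immunogenicity scores and IFN-gamma ELISpot spot counts per peptide.
model_scores = [0.91, 0.75, 0.62, 0.40, 0.15]
spot_counts = [120, 85, 90, 10, 5]
print(round(spearman(model_scores, spot_counts), 3))
```

Peptides where the model score and the assay disagree most strongly are the natural candidates to feed back as new training examples.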

Visualizations

[Figure: DL-Driven Neoantigen Prediction Workflow]

[Figure: Architecture of a Multi-Feature Neoantigen DL Model]

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for Neoantigen Vaccine Development

| Item | Function & Application | Example Product/Provider |

|---|---|---|

| GMP-Grade Synthetic Peptides | Patient-specific neoantigen payload for vaccine formulation. Must be high-purity, sterile, endotoxin-free. | Bachem, JPT Peptide Technologies, Genscript |

| pMHC Multimers (Tetramers/Dextramers) | Direct ex vivo detection and isolation of neoantigen-specific T-cells for immune monitoring. | Immudex, MBL International |

| IFN-γ ELISpot Kit | Functional assay to quantify neoantigen-reactive T-cell responses (sensitivity: 1 in 100,000 cells). | Mabtech, Cellular Technology Limited (CTL) |

| T-Cell Expansion Media (Serum-Free) | Supports robust in vitro expansion of low-frequency neoantigen-specific T-cell clones. | STEMCELL Technologies (ImmunoCult), Miltenyi (TexMACS) |

| HLA Typing Kit | High-resolution determination of patient HLA alleles, critical for prediction algorithm input. | Omixon (Holotype HLA), Illumina (TruSight HLA) |

| Single-Cell RNA-Seq Kit (5' with V(D)J) | Profiling of TCR repertoire and functional state of vaccine-induced T-cells. | 10x Genomics (Chromium Next GEM) |

| Neoantigen Prediction Software Suite | Integrated platform for running prediction models (NetMHCpan, MHCflurry) within an end-to-end pipeline (pVACseq). | pVACtools (GitHub), ELLA (EpiVax) |

Navigating Challenges: Troubleshooting Data, Models, and Interpretation in AI-Driven Immunology

A central challenge in applying AI and machine learning to immunology is the integration of complex, multi-modal data. Effective data integration is a prerequisite for building predictive models of immune response, vaccine efficacy, and autoimmunity. This section provides application notes and detailed protocols for overcoming the data bottleneck.

Core Strategies & Quantitative Benchmarks

Data Harmonization & Imputation Performance

The following table summarizes the performance of leading methods for handling missing data (sparsity) in cytometry and single-cell RNA sequencing (scRNA-seq) datasets.

Table 1: Benchmarking of Data Imputation & Normalization Methods

| Method Name | Data Type | Core Algorithm | Reported Accuracy (NRMSE)* | Processing Speed (cells/sec) | Best For |

|---|---|---|---|---|---|

| SAUCIE | CyTOF / Flow | Autoencoder | 0.12 (CyTOF) | ~1,000 | Dimensionality reduction, batch correction |

| MAGIC | scRNA-seq | Diffusion-based imputation | 0.18 (scRNA-seq) | ~10,000 | Recovering gene-gene relationships |

| k-NN Impute | General Omics | k-Nearest Neighbors | 0.22 (mixed) | ~5,000 | Small to medium datasets |

| ComBat | General Omics | Empirical Bayes | Batch effect p-value < 0.001 | ~50,000 | Removing technical batch noise |

| scVI | scRNA-seq | Variational Autoencoder | 0.15 (scRNA-seq) | ~8,000 | Integration of large, heterogeneous studies |

*Normalized Root Mean Square Error (lower is better). Compiled from recent literature (2023-2024).
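
For readers who want to reproduce the NRMSE metric on their own held-out values, a minimal sketch follows. The normalization convention is an assumption: here the RMSE is divided by the range of the ground-truth values, whereas some studies divide by the mean or the standard deviation, so figures from different papers are not always directly comparable. The example values are made up.

```python
import math


def nrmse(truth, imputed):
    """Root mean square error divided by the range of the ground-truth values."""
    if len(truth) != len(imputed) or not truth:
        raise ValueError("inputs must be non-empty and of equal length")
    squared_errors = [(t - p) ** 2 for t, p in zip(truth, imputed)]
    rmse = math.sqrt(sum(squared_errors) / len(truth))
    value_range = max(truth) - min(truth)
    if value_range == 0:
        raise ValueError("ground truth has zero range; NRMSE is undefined")
    return rmse / value_range


# Hypothetical masked expression values and their imputed estimates.
held_out = [0.0, 2.0, 4.0, 10.0]
estimated = [1.0, 2.0, 3.0, 10.0]
print(round(nrmse(held_out, estimated), 4))
```

A typical benchmark masks a random subset of observed entries, imputes them, and evaluates this metric only on the masked positions.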

Multi-Omic Integration Tool Landscape

Table 2: Platforms for Heterogeneous Data Integration

| Platform/Tool | Supported Data Types | Integration Method | Output | Key Limitation |

|---|---|---|---|---|

| Multi-Omics Factor Analysis (MOFA+) | RNA-seq, ATAC-seq, Methylation, Proteomics | Statistical factor analysis | Latent factors | Linear model; works best with approximately Gaussian data |

| Cobolt | scRNA-seq, scATAC-seq | Variational Autoencoder (VAE) | Joint latent embedding | Requires paired measurements |

| LIGER | scRNA-seq, Spatial Transcriptomics | Integrative Non-negative Matrix Factorization (iNMF) | Shared and dataset-specific factors | Sensitive to hyperparameters |

| scArches | Single-cell omics | Neural Network, Reference Mapping | Integrated embeddings | Needs a well-defined reference |

| CellCharter | Spatial Proteomics (IMC, CODEX) | Spatial-aware Gaussian Mixture Models | Spatial cell niches | Primarily for imaging data |