Harmonizing Precision: A Comprehensive Guide to IHC Inter-Laboratory Comparison and Standardization for Biomarker Validation

This article provides a detailed roadmap for researchers, scientists, and drug development professionals aiming to achieve robust, reproducible Immunohistochemistry (IHC) results across multiple laboratories.

Abstract

This article provides a detailed roadmap for researchers, scientists, and drug development professionals aiming to achieve robust, reproducible Immunohistochemistry (IHC) results across multiple laboratories. We explore the foundational challenges driving the need for standardization, including assay variability and its impact on clinical decisions. The core of the guide focuses on established and emerging methodological frameworks, such as CAP and ASCO/CAP guidelines, and the implementation of standardized protocols and scoring systems. We address critical troubleshooting strategies for pre-analytical, analytical, and post-analytical variables. Finally, we detail the validation process through inter-laboratory comparison studies (ring trials) and the use of digital pathology and reference standards. By bringing these topics together, the article equips professionals with the knowledge to enhance data reliability, accelerate drug development, and ensure patient safety through consistent IHC biomarker analysis.

The Critical Need for IHC Standardization: Understanding Variability and Its Impact on Biomarker Data

Immunohistochemistry (IHC) is a cornerstone of pathology and translational research, yet its reproducibility across laboratories remains a significant challenge. This variability directly impacts diagnostic concordance, biomarker validation, and drug development. This guide, framed within a broader thesis on IHC standardization, compares critical variables and their solutions through objective data.

Core Variables Driving Inter-Lab IHC Variability

The following table summarizes primary factors contributing to result discrepancies, as established by inter-laboratory comparison studies.

Table 1: Key Sources of IHC Variability and Impact Level

| Variable Category | Specific Factor | Typical Impact on Staining Intensity (Coefficient of Variation) | Standardization Solution |

|---|---|---|---|

| Pre-Analytical | Tissue Fixation Time (Formalin) | 25-40% | Controlled fixation protocol (e.g., 18-24 hrs) |

| Pre-Analytical | Antigen Retrieval Method (pH) | 20-35% | Standardized buffer (e.g., pH 6.0 or pH 9.0) & heating method |

| Analytical | Primary Antibody Clone & Concentration | 30-50% | Use of validated, consistent clones & titration |

| Analytical | Detection System (Polymer vs. APAAP) | 15-30% | Adoption of high-sensitivity, polymer-based systems |

| Post-Analytical | Scoring Method (Manual vs. Digital) | 20-45% | Implementation of digital image analysis with algorithms |

Experimental Comparison: Antibody Clone Performance

A standardized experiment was conducted across three labs using identical tissue microarrays (TMAs) to compare two common ER (Estrogen Receptor) antibody clones.

Experimental Protocol:

- TMA Construction: A single TMA block was created from 10 breast carcinoma cases with known ER status (5 positive, 3 negative, 2 heterogeneous). It was sectioned at 4µm.

- Distributed Materials: Identical TMA sections, protocol sheets, and a shared batch of detection kit (polymer-HRP) were sent to each lab.

- Variable Tested: Each lab performed IHC using two different primary antibody clones: Clone SP1 (rabbit monoclonal) and Clone 1D5 (mouse monoclonal). Antibodies were used at vendor-recommended concentrations.

- Standardized Steps: All labs used identical antigen retrieval (pH 9.0, EDTA buffer, 95°C for 20 min), incubation time (30 min), and chromogen (DAB).

- Analysis: Slides were digitally scanned. Quantitative analysis of % positive nuclei and staining intensity (0-3 scale) was performed using the same image analysis software (QuPath).

Table 2: Inter-Lab Comparison of ER Antibody Clone Performance

| Antibody Clone | Lab A (% Positive) | Lab B (% Positive) | Lab C (% Positive) | Inter-Lab CV | Average H-Score |

|---|---|---|---|---|---|

| Clone SP1 | 78% | 82% | 75% | 4.5% | 245 |

| Clone 1D5 | 65% | 82% | 58% | 18.7% | 195 |

CV = Coefficient of Variation; H-Score = (0 × % negative) + (1 × % weak) + (2 × % moderate) + (3 × % strong). Data are means of the 5 positive cases.
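
As a minimal sketch of the two metrics defined in the footnote, the Python snippet below computes an H-score from the percentage of cells in each intensity bin and an inter-lab CV from per-lab values. The function names and the example bin percentages are illustrative assumptions, not part of the study, and the CV here uses the sample standard deviation (n-1), which is only one common convention. Recomputing a CV from the rounded summary values in Table 2 need not reproduce every reported figure exactly, since the table reports means over five cases.

```python
from statistics import mean, stdev

def h_score(pct_weak, pct_moderate, pct_strong):
    """H-score = 1*%weak + 2*%moderate + 3*%strong (range 0-300)."""
    total = pct_weak + pct_moderate + pct_strong
    if not 0 <= total <= 100:
        raise ValueError("intensity bins must sum to at most 100%")
    return 1 * pct_weak + 2 * pct_moderate + 3 * pct_strong

def inter_lab_cv(values):
    """Coefficient of variation (%) using the sample standard deviation."""
    return 100 * stdev(values) / mean(values)

# Hypothetical intensity distribution for one case (remaining 20% negative).
example_h = h_score(pct_weak=10, pct_moderate=20, pct_strong=50)

# Per-lab % positive values for clone SP1 as listed in Table 2.
sp1_cv = inter_lab_cv([78, 82, 75])
print(example_h, round(sp1_cv, 1))
```

Keeping the H-score and CV calculations in shared code, rather than in per-lab spreadsheets, is one way to make sure every site applies the same formula and the same standard deviation convention.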

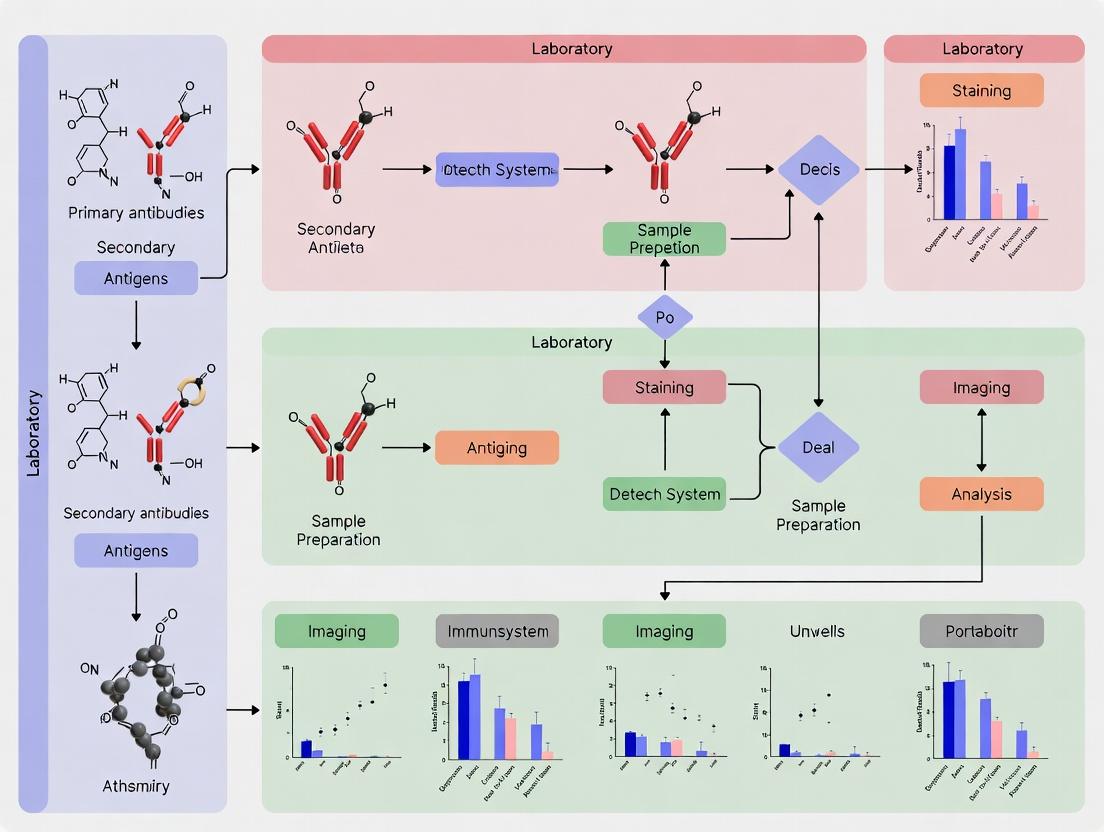

Visualization of IHC Standardization Workflow

A standardized workflow is critical to minimize variability.

Title: IHC Standardization Workflow Across Lab Phases

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents for Standardized IHC Experiments

| Item | Function & Rationale for Standardization |

|---|---|

| Validated Primary Antibody | Core reagent; using the same clone, lot, and optimized concentration is the single most critical factor for reproducibility. |

| Reference Control Tissue | A multitissue block with known positive/negative tissues for the target antigen, used in every run to monitor assay performance. |

| Automated Stainer & Reagents | Using the same model of stainer and identical batches of detection kit (polymer, chromogen) minimizes platform-related variability. |

| Standardized Antigen Retrieval Buffer | pH and buffer composition (Citrate vs. EDTA) dramatically affect epitope availability; must be consistent. |

| Digital Image Analysis Software | Removes subjective manual scoring bias, allowing quantitative, reproducible metrics like H-score or % positivity. |

Visualization of Factors Impacting IHC Signal

The final IHC signal is the product of a complex interplay of variables.

Title: Key Factor Relationships Determining Final IHC Result

Inconsistent immunohistochemistry (IHC) results across laboratories pose a critical challenge to biomedical research and precision medicine. This comparison guide evaluates the performance of standardized versus non-standardized IHC protocols, framed within the essential thesis that inter-laboratory comparison and standardization are non-negotiable for reproducible science.

Comparison of Standardized vs. Non-Standardized IHC Assays

The following table summarizes data from recent ring studies and published comparisons, highlighting key performance metrics.

Table 1: Performance Comparison of IHC Protocols in Inter-Laboratory Studies

| Performance Metric | Standardized Protocol (with validated controls & automated platforms) | Non-Standardized/In-House Protocol | Implications for Research & Diagnostics |

|---|---|---|---|

| Inter-Lab Concordance (PPA)* | 95-99% (for ER, PR, HER2, PD-L1) | 70-85% | High discordance risks patient misclassification in clinical trials. |

| Intra-Lab Reproducibility | Coefficient of Variation (CV) < 10% | CV 15-30%+ | Poor reproducibility undermines longitudinal study data. |

| Assay Sensitivity | Consistent, optimized for clinical cut-offs | Highly variable; often over- or under-stained | False negatives in diagnostics; unreliable biomarker data in trials. |

| Background/Noise | Low, uniform staining | High, uneven staining | Compromises pathologist scoring accuracy and automated image analysis. |

| Data Acceptance by Regulators | High (e.g., for companion diagnostics) | Low; requires extensive validation | Increases risk of trial audit findings and delays drug approval. |

*PPA: Positive Percent Agreement

Experimental Protocols for Key Comparison Studies

The data in Table 1 is derived from studies employing the following core methodologies.

Protocol 1: Inter-Laboratory Ring Study for HER2 IHC

- Objective: To assess the concordance of HER2 scoring across multiple diagnostic laboratories.

- Methodology:

  - Tissue Microarray (TMA) Construction: A TMA with 40 breast cancer cases, pre-validated for HER2 status (0, 1+, 2+, 3+), is created.

  - Participant Labs: 20 laboratories are recruited. Ten use a standardized protocol (defined primary antibody clone, dilution, retrieval method, detection kit, and staining platform). Ten use their institution's legacy protocol.

  - Staining & Analysis: Each lab stains the identical TMA slides. Digital whole-slide images are generated.

  - Blinded Scoring: Three expert pathologists, blinded to the protocol and lab, score each case independently using ASCO/CAP guidelines.

  - Statistical Analysis: Calculate Positive Percent Agreement (PPA) with the pre-validated score and inter-rater reliability (Fleiss' kappa).
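
The statistical step of this ring study can be sketched in a few lines of Python. The snippet below is an illustration under stated assumptions rather than the analysis code of any published study: the function names, the binarization of HER2 status into positive/negative, and all example data are hypothetical. PPA is computed against the pre-validated reference, and Fleiss' kappa follows the standard formula for a fixed number of raters per case.

```python
from collections import Counter

def positive_percent_agreement(reference, test):
    """Share (%) of reference-positive cases that the test also calls positive."""
    pairs = [(r, t) for r, t in zip(reference, test) if r]
    if not pairs:
        raise ValueError("no reference-positive cases")
    return 100 * sum(1 for _, t in pairs if t) / len(pairs)

def fleiss_kappa(ratings):
    """Fleiss' kappa; `ratings` holds one list of category labels per case,
    with the same number of raters for every case."""
    n_cases = len(ratings)
    n_raters = len(ratings[0])
    if any(len(row) != n_raters for row in ratings):
        raise ValueError("every case needs the same number of ratings")
    category_totals = Counter()
    per_case_agreement = []
    for row in ratings:
        counts = Counter(row)
        category_totals.update(counts)
        agree = sum(c * c for c in counts.values()) - n_raters
        per_case_agreement.append(agree / (n_raters * (n_raters - 1)))
    p_bar = sum(per_case_agreement) / n_cases          # observed agreement
    p_e = sum((total / (n_cases * n_raters)) ** 2      # chance agreement
              for total in category_totals.values())
    return (p_bar - p_e) / (1 - p_e)

# Hypothetical data: four cases, reference status vs. one lab's calls.
reference_positive = [True, True, True, False]
lab_positive = [True, True, False, False]
lab_ppa = positive_percent_agreement(reference_positive, lab_positive)

# Hypothetical HER2 scores (0-3) from three pathologists for four cases.
scores = [[0, 0, 0], [1, 1, 2], [2, 2, 2], [3, 3, 3]]
kappa = fleiss_kappa(scores)
print(round(lab_ppa, 1), round(kappa, 3))
```

Note that this sketch does not handle the degenerate case in which every rating falls into a single category, where chance agreement equals 1 and kappa is undefined.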

Protocol 2: Quantitative Analysis of Stain Variability

- Objective: To quantify the staining intensity variability of PD-L1 (22C3) in non-small cell lung carcinoma.

- Methodology:

  - Sample Set: A cohort of 30 NSCLC biopsies is split, with each sample tested across two conditions.

  - Staining Conditions: Condition A: Automated platform with FDA-approved assay kit. Condition B: Manual staining using lab-defined "home-brew" protocol.

  - Image Analysis: Slides are digitized. Using image analysis software, the Tumor Proportion Score (TPS) is calculated algorithmically based on membrane stain intensity and area.

  - Data Comparison: For each case, the TPS from Condition A and B are plotted. The coefficient of variation (CV) for intensity is calculated per case and across the cohort.
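
The paired comparison in this protocol can be illustrated with a short Python sketch. It assumes the usual definition of TPS as the percentage of viable tumor cells with membrane staining, and it bins results at the 1% and 50% cut-offs commonly used with the 22C3 assay. The function names and cell counts are hypothetical, and the per-case CV is applied to TPS here purely for illustration (the protocol applies it to intensity).

```python
from statistics import mean, stdev

def tumor_proportion_score(positive_tumor_cells, total_viable_tumor_cells):
    """TPS (%) = membrane-positive viable tumor cells / all viable tumor cells."""
    if total_viable_tumor_cells <= 0:
        raise ValueError("need at least one viable tumor cell")
    return 100 * positive_tumor_cells / total_viable_tumor_cells

def tps_category(tps):
    """Bin a TPS at the 1% and 50% cut-offs."""
    if tps < 1:
        return "<1%"
    return "1-49%" if tps < 50 else ">=50%"

def paired_cv(value_a, value_b):
    """CV (%) of the two paired measurements for one case (sample SD)."""
    return 100 * stdev([value_a, value_b]) / mean([value_a, value_b])

# Hypothetical cell counts for one biopsy under each staining condition.
tps_a = tumor_proportion_score(220, 400)  # Condition A
tps_b = tumor_proportion_score(180, 400)  # Condition B
print(tps_category(tps_a), tps_category(tps_b),
      round(paired_cv(tps_a, tps_b), 1))
```

In this made-up case the two conditions differ by only ten percentage points, yet they fall on opposite sides of the 50% cut-off, which is the kind of category flip that a per-case comparison is meant to expose.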

Visualization of IHC Standardization Workflow and Impact

Title: IHC Standardization Workflow from Sample to Result

Title: Cascade of Risks from IHC Inconsistency

The Scientist's Toolkit: Research Reagent Solutions for Standardized IHC

Table 2: Essential Components for a Standardized IHC Workflow

| Item | Function in Standardization |

|---|---|

| Validated Primary Antibody Clones | Antibodies with demonstrated specificity and optimal performance for a defined clinical or research application (e.g., ER clone SP1, PD-L1 clone 22C3). Reduces lot-to-lot variability. |

| Isotype & Biological Controls | Tissue controls with known positive/negative expression and isotype-matched negative control antibodies. Essential for distinguishing specific signal from background noise. |

| Automated Staining Platform | Instrument that precisely controls incubation times, temperatures, and reagent volumes. Minimizes technician-induced variability and improves run-to-run consistency. |

| Validated Detection Kit | A complete, optimized detection system (e.g., polymer-based) matched to the automated platform. Ensures uniform amplification and visualization of the antigen-antibody complex. |

| Antigen Retrieval Buffer (pH-specific) | Standardized buffer (e.g., pH 6 citrate or pH 9 EDTA) with defined heating protocol. Critical for consistent epitope exposure across different tissue fixation conditions. |

| Whole Slide Image Scanner & Analysis Software | Enables digital archiving, remote pathologist review, and quantitative, objective analysis of stain intensity and percentage, removing scorer subjectivity. |

| Reference Standard Tissue Microarray (TMA) | A TMA containing cell lines or patient tissues with pre-characterized biomarker expression levels. Serves as a calibrator for inter-laboratory comparison studies. |

Immunohistochemistry (IHC) remains a cornerstone technique in diagnostic pathology and translational research. However, its utility in multi-center trials and companion diagnostics is heavily compromised by inter-laboratory variability. This guide compares key steps in the IHC workflow, identifying sources of variability and evaluating standardization solutions within the context of a broader thesis on IHC inter-laboratory comparison and standardization research.

Pre-Analytical Phase: Tissue Fixation and Processing

The pre-analytical phase introduces significant variability. The choice of fixative and fixation time dramatically impacts antigen preservation and accessibility.

Table 1: Comparison of Tissue Fixation Methods

| Fixative Type | Fixation Time Variability (Impact Score 1-5)* | Antigen Preservation Profile | Compatibility with Common IHC Targets (e.g., ER, HER2) | Key Standardization Challenge |

|---|---|---|---|---|

| 10% Neutral Buffered Formalin (NBF) | High (5) - Over/under-fixation common | Moderate to Poor; requires antigen retrieval | High, but staining intensity variable | Controlling exact time from biopsy to fixation and fixation duration. |

| PAXgene Tissue System | Low (2) - Time-critical fixation | Excellent for nucleic acids & many proteins | Moderate; optimized protocols less ubiquitous | Limited long-term data on biomarker stability. |

| Ethanol-based Fixatives | Moderate (3) - Less sensitive to over-fixation | Good for some phospho-epitopes | Low to Moderate; not standard for clinical IHC | Requires re-validation of all clinical assays. |

| Cold Ischemia Time (All Methods) | Critical (5+) | Degrades rapidly post-excision | Severely impacts all targets | Lack of SOPs for surgical to pathology handoff. |

*Impact Score: 1=Low variability impact, 5=High variability impact.

Experimental Protocol - Fixation Time Impact:

- Sample: Split human tumor xenograft (breast cancer) tissue immediately after resection.

- Groups: Fixed in 10% NBF for 6h, 24h, 48h, 72h.

- Processing: Identical processing, embedding, sectioning.

- Staining: Serial sections stained for Estrogen Receptor (ER, clone SP1) using a standardized automated platform with optimized antigen retrieval (EDTA, pH 9.0).

- Analysis: Quantitative image analysis (H-score) of nuclear staining intensity and percentage positive cells.

- Result: H-score decreased by ~35% between 6h and 72h fixation groups, with increased background in under-fixed (6h) samples.

Analytical Phase: Antibody and Detection System Comparison

The selection of primary antibody clone, dilution, and detection system is a major source of inter-laboratory discrepancy.

Table 2: Comparison of HER2 IHC Antibody Clones and Detection

| Antibody Clone (Vendor) | Recommended Dilution / Platform | Concordance with FISH (Reported Range) | Sensitivity / Background Profile | Key Standardization Solution |

|---|---|---|---|---|

| 4B5 (Ventana) | Prediluted, BenchMark ULTRA | 92-96% | High sensitivity, low background | Integrated, automated platform with locked protocols. |

| CB11 (Leica) | Prediluted, BOND-MAX | 90-95% | Moderate sensitivity | Automated platform-specific protocol. |

| Polyclonal (Dako) | Dilution range, Autostainer | 85-92% | Variable; requires meticulous optimization | Use of validated, commercial kits over "home-brew" methods. |

| SP3 (Rabbit Monoclonal) | Broad dilution range, various platforms | 90-94% (platform dependent) | Very high sensitivity | Requires stringent optimization and validation per lab. |

Experimental Protocol - Detection System Comparison:

- Sample: Tissue microarray (TMA) with cell line controls with known HER2 expression (0 to 3+).

- Primary Antibody: HER2 clone 4B5 applied at identical concentration.

- Detection Systems Compared: (1) Vendor-specific, polymer-based detection (Ventana OptiView), (2) Vendor-specific, polymer-based detection (Dako EnVision FLEX), (3) Streptavidin-Biotin-Complex (SABC) method.

- Staining: Performed on identical automated stainers with optimized but system-specific protocols.

- Analysis: Blinded scoring by two pathologists (ASCO/CAP guidelines) and quantitative image analysis.

- Result: Concordance between pathologists was 98% for OptiView, 95% for EnVision FLEX, and 88% for SABC. SABC showed higher non-specific background in low-expressing samples.
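
Agreement between two pathologists is often reported both as raw percent agreement and as Cohen's kappa, which corrects for agreement expected by chance. The sketch below shows both calculations in Python; the scores are hypothetical and the function names are illustrative, so it should be read as one possible way to compute the concordance figures, not as the method used in the experiment above.

```python
from collections import Counter

def percent_agreement(rater_1, rater_2):
    """Share (%) of cases on which both raters assign the same score."""
    matches = sum(a == b for a, b in zip(rater_1, rater_2))
    return 100 * matches / len(rater_1)

def cohen_kappa(rater_1, rater_2):
    """Unweighted Cohen's kappa for two raters scoring the same cases."""
    n = len(rater_1)
    observed = sum(a == b for a, b in zip(rater_1, rater_2)) / n
    counts_1, counts_2 = Counter(rater_1), Counter(rater_2)
    # Chance agreement from the product of each rater's marginal frequencies.
    expected = sum(counts_1[c] * counts_2[c] for c in counts_1) / (n * n)
    return (observed - expected) / (1 - expected)

# Hypothetical HER2 scores (0-3) for eight TMA cores.
pathologist_1 = [0, 1, 2, 3, 3, 2, 1, 0]
pathologist_2 = [0, 1, 2, 3, 3, 2, 1, 1]
agreement = percent_agreement(pathologist_1, pathologist_2)
kappa_2 = cohen_kappa(pathologist_1, pathologist_2)
print(agreement, round(kappa_2, 3))
```

For ordinal scales such as 0 to 3+, a weighted kappa that penalizes a 0 versus 3+ disagreement more than a 1+ versus 2+ disagreement may be more appropriate; the unweighted form is used here only to keep the example short.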

Post-Analytical Phase: Scoring and Quantification

Subjective manual scoring is the final, and often most variable, step.

Table 3: Comparison of IHC Scoring Methodologies

| Scoring Method | Inter-Observer Concordance (Kappa Score) | Throughput | Required Investment | Suitability for Biomarker Quantification |

|---|---|---|---|---|

| Pathologist Visual (e.g., H-score, Allred) | Moderate (κ = 0.6 - 0.8) | High | Low | Low to Moderate; semi-quantitative. |

| Manual Digital Image Analysis (DIA) | High (κ = 0.85 - 0.95) | Low to Moderate | Moderate | High, but user-dependent thresholding. |

| Fully Automated DIA Algorithm | Very High (κ > 0.95) | Very High | High (software/licensing) | Very High; objective and reproducible. |

| Consensus Review (2+ Pathologists) | High (κ > 0.9) | Very Low | Moderate (time) | Low; improves reproducibility but not quantitation. |

Experimental Protocol - Inter-Observer Variability:

- Sample Set: 100 ER+ breast cancer IHC slides.

- Scorers: Three board-certified pathologists.

- Method: Each pathologist scored all slides using the H-score method (0-300) independently, blinded to others' scores.

- Analysis: Intraclass correlation coefficient (ICC) calculated to assess agreement.

- Result: The overall ICC was 0.72, indicating "good" but not "excellent" agreement. Disagreements were most common in samples with heterogeneous staining or intermediate H-scores (100-200).
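
The protocol does not state which form of the ICC was used. As an assumption for illustration, the Python sketch below implements ICC(2,1), the two-way random-effects, absolute-agreement, single-rater form, from the usual ANOVA mean squares. The H-scores in the example are hypothetical.

```python
def icc_2_1(scores):
    """ICC(2,1) for a cases x raters table of scores (no missing values)."""
    n, k = len(scores), len(scores[0])
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]
    # Sums of squares for cases (rows), raters (columns), and residual error.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )

# Hypothetical H-scores (0-300): four slides scored by three pathologists.
h_scores = [
    [250, 240, 260],
    [120, 150, 100],
    [180, 160, 200],
    [30, 40, 20],
]
example_icc = icc_2_1(h_scores)
print(round(example_icc, 2))
```

Because different ICC forms can give noticeably different values on the same data, a multi-site study would normally fix and report the chosen form in the analysis plan.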

Visualizing the IHC Variability Landscape

Title: IHC Workflow and Key Variability Sources

The Scientist's Toolkit: Key Research Reagent Solutions

| Item & Example Vendor | Function in IHC Standardization | Critical Parameter |

|---|---|---|

| Certified Reference Standards (e.g., Cell Marque, AMSBIO) | Provides biologically relevant tissue controls for run-to-run and lab-to-lab normalization. | Tissue type, biomarker expression level, fixation consistency. |

| Validated Antibody Clones & Kits (e.g., FDA-approved/CE-IVD kits from Ventana, Agilent, Leica) | Reduces variability from lot-to-lot and platform-to-platform differences through locked protocols. | Integrated detection system and defined antigen retrieval. |

| Controlled Antigen Retrieval Buffers (e.g., Tris-EDTA pH 9.0, Citrate pH 6.0) | Unmasks epitopes consistently; buffer pH and composition are critical for reproducibility. | Precise pH, molarity, and heating temperature/time. |

| Automated Staining Platforms (e.g., Ventana BenchMark, Leica BOND, Agilent Autostainer) | Standardizes all liquid handling, incubation times, and temperatures for the analytical phase. | Protocol synchronization and regular maintenance. |

| Quantitative Digital Image Analysis (DIA) Software (e.g., HALO, Visiopharm, QuPath) | Removes subjective bias from scoring, enabling continuous data and high-throughput analysis. | Validated algorithm and consistent thresholding rules. |

Within the critical research domain of immunohistochemistry (IHC) standardization and inter-laboratory comparison, the role of independent consortia and proficiency testing schemes is paramount. This analysis compares two leading initiatives: the Quality in Pathology (QuIP) initiative and the Nordic Immunohistochemical Quality Control (NordiQC). These programs provide essential frameworks for assessing and improving laboratory performance through external quality assessment (EQA).

Comparative Analysis of Major IHC Standardization Consortia

Table 1: Core Comparison of QuIP and NordiQC Initiatives

| Feature | Quality in Pathology (QuIP) | Nordic Immunohistochemical Quality Control (NordiQC) |

|---|---|---|

| Primary Focus & Scope | Broad EQA for anatomical pathology, with significant IHC modules; global participation. | Specialized, in-depth EQA focused exclusively on IHC; originally Nordic, now global. |

| Typical Assessment Cycle | Multiple rounds per year, covering various organ systems and markers per round. | 2-4 main assessment rounds per year, each focusing on a specific set of markers. |

| Core Deliverable | Participant reports with individual scores, peer group comparison, and educational commentary. | Detailed assessment report with performance categorization (Optimal, Good, Borderline, Poor), extensive image galleries, and optimized protocols. |

| Performance Benchmark | Pass/Fail, based on concordance with a reference consensus under pre-defined criteria. | Four-tiered grading system, emphasizing optimal staining pattern and intensity. |

| Key Educational Component | General best practice guidelines and case-specific feedback. | Highly detailed, protocol-centric recommendations, including antibody clone, dilution, and retrieval methods. |

| Data Output for Research | Aggregated data on inter-laboratory variance for specific antibody-antigen combinations. | Publicly available large-scale data on antibody performance and protocol optimization across platforms. |

Experimental Protocols Underpinning EQA

The comparative data generated by these consortia rely on standardized experimental workflows for participant evaluation.

Protocol 1: Core EQA Slide Testing & Assessment

- Tissue Microarray (TMA) Construction: The organizing committee selects well-characterized tissue samples (normal, neoplastic, borderline). These are assembled into TMAs to ensure all participants test the same tissue cores.

- Slide Distribution: Unstained TMA sections are distributed to all registered participating laboratories.

- Local Staining: Participants stain the slides according to their in-house validated clinical protocols for the specified marker(s). Data on antibody clone, dilution, platform, and retrieval method are submitted.

- Centralized Review: All returned slides are assessed by an expert review panel using a multi-headed microscope or digital pathology system.

- Scoring & Categorization: Stains are evaluated against consensus criteria (specificity, sensitivity, staining pattern, intensity). Scoring follows the scheme in Table 1 (e.g., NordiQC's 4-tier system).

- Data Analysis & Reporting: Individual performance reports are generated. Aggregated, anonymized data is analyzed to identify common pitfalls and sources of inter-laboratory variation.
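
The aggregation in the final step can be sketched as a simple group-by over participant submissions. The Python example below is a hypothetical illustration: the record layout, the clone labels, and the choice to count the Optimal and Good tiers together as a "sufficient" result are assumptions made for the example, not a description of any scheme's actual software.

```python
from collections import defaultdict

SUFFICIENT = {"Optimal", "Good"}  # assumed grouping of the four tiers

def sufficient_rate_by(records, key):
    """Percent of submissions graded Optimal or Good, grouped by `key`."""
    totals, passed = defaultdict(int), defaultdict(int)
    for record in records:
        group = record[key]
        totals[group] += 1
        if record["grade"] in SUFFICIENT:
            passed[group] += 1
    return {group: 100 * passed[group] / totals[group] for group in totals}

# Hypothetical anonymized submissions for one marker.
submissions = [
    {"clone": "clone_A", "platform": "stainer_X", "grade": "Optimal"},
    {"clone": "clone_A", "platform": "stainer_Y", "grade": "Good"},
    {"clone": "clone_A", "platform": "stainer_Y", "grade": "Borderline"},
    {"clone": "clone_B", "platform": "stainer_X", "grade": "Poor"},
    {"clone": "clone_B", "platform": "stainer_X", "grade": "Good"},
]
rates = sufficient_rate_by(submissions, "clone")
print({clone: round(rate, 1) for clone, rate in rates.items()})
```

Calling the same function with `"platform"` as the key would break the results down by staining platform instead, which is how common pitfalls tied to a specific clone or instrument can be surfaced from the same data.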

Protocol 2: Reference Protocol Validation (NordiQC Example)

- Pilot Testing: Multiple antibody clones and retrieval conditions are tested on a comprehensive TMA by the NordiQC center.

- Optimal Protocol Identification: The protocol yielding optimal staining (high signal-to-noise, correct localization) across all relevant tissue types is identified.

- Ring Experiment: The proposed optimized protocol is distributed to a subset of reference laboratories for verification.

- Publication & Dissemination: The finalized "optimized protocol" is published in the assessment report and on the NordiQC website for all participants.

Visualizing the EQA Workflow and Impact

The Scientist's Toolkit: Key Reagents & Materials for IHC Standardization

Table 2: Essential Research Reagent Solutions for IHC EQA Studies

| Item | Function in Standardization Research |

|---|---|

| Formalin-Fixed, Paraffin-Embedded (FFPE) TMA | Provides identical tissue specimens for all testing labs, controlling for pre-analytical variables and enabling direct comparison. |

| Validated Primary Antibody Panels | Multiple clones against the same target are tested to identify the most robust and specific reagent for consensus recommendation. |

| Automated IHC Staining Platforms | Standardizes the staining process (incubation times, temperatures, washes) to reduce intra-protocol variability in optimization studies. |

| Antigen Retrieval Solutions (pH 6 & pH 9) | Critical for unmasking epitopes; comparative testing at different pH levels is fundamental to protocol optimization. |

| Reference Control Slides | Slides with known positive and negative expression are used to validate staining run performance and assay sensitivity/specificity. |

| Digital Slide Scanning System | Enables high-throughput, remote expert review of EQA results and creation of permanent digital image libraries for education. |

Building a Standardized IHC Workflow: Protocols, Controls, and Best Practices for Cross-Lab Consistency

This guide compares experimental frameworks for immunohistochemistry (IHC) standardization, a critical component of broader inter-laboratory comparison research. The objective is to identify the most robust master protocol for biomarker quantification, directly impacting drug development and companion diagnostic validation.

Comparison of IHC Standardization Protocol Performance

The following table summarizes key performance metrics of three leading standardization approaches based on recent multi-center ring studies.

| Protocol Feature / Metric | Whole-Slide Digital Reference (WSDR) | Cell Line Microarray (CLMA) | Peptide-Based Multiepitope (PBM) Controls |
| :--- | :--- | :--- | :--- |
| Inter-lab Reproducibility (CV%) | 8-12% | 15-25% | 5-8% |
| Inter-assay Reproducibility (CV%) | 10-15% | 18-30% | 7-10% |
| Target Antigen Stability (months) | 24-36 | 6-12 | 36+ |
| Multiplexing Capacity | Low (sequential) | Moderate | High (simultaneous) |
| Protocol Flexibility | Low | Moderate | High |
| Primary Cost Driver | Digital Infrastructure & Scanning | Cell Culture & Arraying | Synthetic Peptide Production |
| Best Application | Single-analyte, low-plex companion diagnostic validation | Screening antibody specificity | High-plex biomarker panels & quantitative mass spectrometry correlation |

Detailed Experimental Protocols

1. Whole-Slide Digital Reference (WSDR) Protocol

- Objective: To calibrate staining intensity against a digitally archived, centrally stained master slide.

- Methodology:

  - A reference tissue block is selected and centrally stained using a meticulously optimized protocol.

  - The entire slide is digitized at 40x magnification to create a high-resolution Whole Slide Image (WSI).

  - Pathologists annotate specific regions of interest (e.g., tumor, stroma) on the digital image.

  - Participating labs stain their slides from the same block, scan them, and upload the images.

  - Image analysis software (e.g., QuPath, HALO) aligns participant images to the master WSI and extracts intensity values (e.g., H-score, % positivity) from the pre-defined annotations.

  - Results are normalized to the master slide's values.

2. Peptide-Based Multiepitope (PBM) Control Validation Protocol

- Objective: To validate a synthetic control for simultaneous quantification of multiple analytes.

- Methodology:

  - PBM Fabrication: Defined peptide sequences containing epitopes for target antibodies (e.g., HER2, PD-L1, Ki-67) are spotted onto a nylon membrane in a precise serial dilution.

  - Co-staining: The PBM control slide is processed alongside the patient tissue slide in every IHC run.

  - Calibration Curve Generation: The staining intensity of each peptide spot is measured, creating an instrument-specific calibration curve for each analyte.

  - Quantitative Normalization: The intensity values from the patient tissue are adjusted based on the PBM calibration curve, controlling for day-to-day and instrument variation.
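
The calibration and normalization steps can be illustrated with a small Python sketch. It assumes a piecewise-linear calibration curve built from the peptide dilution series and rejects tissue intensities outside the calibrated range; the curve model, units, function names, and all values are hypothetical choices for the example, and a real assay might well use a fitted nonlinear model instead.

```python
from bisect import bisect_right

def build_calibration(concentrations, intensities):
    """Return (intensity, concentration) pairs in ascending order.

    Requires the measured intensity to rise strictly with concentration."""
    pairs = sorted(zip(concentrations, intensities))
    measured = [intensity for _, intensity in pairs]
    if len(pairs) < 2 or any(b <= a for a, b in zip(measured, measured[1:])):
        raise ValueError("need >= 2 spots with strictly increasing intensity")
    return [(intensity, conc) for conc, intensity in pairs]

def intensity_to_concentration(curve, intensity):
    """Piecewise-linear interpolation; out-of-range values are rejected."""
    xs = [point[0] for point in curve]
    if not xs[0] <= intensity <= xs[-1]:
        raise ValueError("intensity outside calibrated range")
    i = min(bisect_right(xs, intensity), len(xs) - 1)
    (x0, y0), (x1, y1) = curve[i - 1], curve[i]
    return y0 + (y1 - y0) * (intensity - x0) / (x1 - x0)

# Hypothetical dilution series for one analyte in one run (arbitrary units).
spot_concentrations = [1, 2, 4, 8]
spot_intensities = [10, 20, 35, 50]
curve = build_calibration(spot_concentrations, spot_intensities)

# A tissue region measured at intensity 27.5 in the same run.
tissue_equivalent = intensity_to_concentration(curve, 27.5)
print(tissue_equivalent)
```

Because the curve is rebuilt from the control slide in every run, two runs with different absolute staining intensity can still map the same tissue to a similar peptide-equivalent value, which is the point of the normalization step.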

Visualization: IHC Master Protocol Validation Workflow

Title: Master Protocol Validation Workflow

The Scientist's Toolkit: Essential Reagents for IHC Standardization

| Item | Function in Standardization |

|---|---|

| Certified Reference Cell Lines | Provide a consistent biological source with known, stable antigen expression levels for assay calibration. |

| Tissue Microarray (TMA) Constructor | Enables high-throughput analysis of hundreds of tissue cores under identical staining conditions. |

| Synthetic Peptide Multiepitope Controls | Offer a non-biological, stable control for multiple analytes, enabling cross-assay normalization. |

| Digital Image Analysis Software (e.g., QuPath, HALO) | Allows objective, quantitative scoring of staining intensity and percentage, removing observer bias. |

| Standardized Antibody Validation Panel | A set of tissues/cells with known positive/negative status to confirm antibody specificity. |

| Automated Staining Platform w/ LIS | Ensures precise reagent dispensing and timing; Laboratory Information Systems track lot variables. |

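| Chromogen with Quantifiable Signal | A precipitating dye (e.g., DAB) whose intensity can be measured for analysis. DAB signal is not strictly proportional to antigen concentration, so quantification relies on calibration within a validated dynamic range. |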

| QR Code-Linked Specimen Tracking | Maintains chain of custody and integrates pre-analytical variables (cold ischemia time, fixation) into metadata. |

In the pursuit of reproducible immunohistochemistry (IHC) results across laboratories, the consistent use of well-characterized reference standards and control tissues is paramount. This guide compares the performance of different types of reference materials and control strategies within the context of inter-laboratory standardization research, providing experimental data to inform selection and application.

Comparison of IHC Reference Standard Types

The selection of reference standards directly impacts assay validation and quality control. The table below compares the core alternatives.

Table 1: Comparison of IHC Reference Standard and Control Tissue Types

| Feature | Cell Line Microarrays (CLMAs) | Tissue Microarrays (TMAs) from Patient Samples | Recombinant Protein Spots | Full Tissue Sections (Conventional) |

|---|---|---|---|---|

| Source & Composition | Pelleted, formalin-fixed cell lines with defined antigen expression levels. | Multiple patient tissue cores embedded in a single paraffin block. | Purified proteins spotted and fixed onto slides. | Standard histological sections from a single donor block. |

| Expression Homogeneity | High. Isogenic cell population ensures uniform expression across the slide. | Low to Moderate. Inherent biological heterogeneity between cores and patients. | Very High. Precise, user-defined amount of target protein. | Variable. Depends on tissue anatomy and pathology. |

| Antigen Quantifiability | High. Amenable to precise titration and generation of calibration curves. | Low. Qualitative or semi-quantitative (positive/negative internal controls). | Highest. Allows for absolute quantification per spot. | Low. Used primarily for presence/absence and localization. |

| Primary Application | Assay calibration, linearity testing, and precision monitoring. Critical for quantitative IHC (qIHC). | Diagnostic reference, biomarker heterogeneity assessment, and external proficiency testing. | Antibody specificity verification, titration optimization. | Diagnostic gold standard and morphology reference. |

| Key Advantage for Standardization | Provides a continuous, homogeneous standard for inter-laboratory calibration, reducing run-to-run and site-to-site variation. | Reflects real-world tissue complexity and is essential for validating assay context. | Unambiguous control for antibody binding, independent of tissue processing variables. | Provides architectural context, essential for initial assay development. |

| Experimental Data (Example: HER2 IHC) | CLMAs with 0, 1+, 2+, 3+ HER2 expression showed <10% CV across 10 laboratories when using standardized protocols. | Concordance for HER2 scoring on patient TMAs improved from 75% to 92% after implementing a standardized CLMA calibration step. | Spots confirmed antibody specificity; non-specific binding was ruled out by negative recombinant protein controls. | Used to establish the expected staining pattern and validate CLMA/TMA results. |

Experimental Protocol for Inter-Laboratory Comparison Using CLMAs

This protocol outlines a method for assessing and harmonizing IHC assay performance across multiple sites.

Title: Multi-Site IHC Assay Harmonization Protocol Using CLMA Reference Standards.

Objective: To evaluate inter-laboratory precision (CV) and align scoring outcomes for a target biomarker using calibrated cell line reference materials.

Materials (The Scientist's Toolkit):

Table 2: Essential Research Reagent Solutions for IHC Standardization

| Item | Function |

|---|---|

| Calibrated Cell Line Microarray (CLMA) | Contains cores of cell lines with pre-quantified, graded levels of target antigen (e.g., 0 to 3+). Serves as the primary calibration standard. |

| Validated Primary Antibody Clone | Key detection reagent. Standardization requires all sites to use the same clone from a common lot or a validated equivalent. |

| Automated IHC Stainer & Linked Reagents | Standardized staining platform with a defined, locked-down protocol (incubation times, temperatures, retrieval conditions) and reagent lots. |

| Digital Slide Scanner | For whole-slide imaging at a standardized magnification (e.g., 20x or 40x) to enable digital analysis. |

| Image Analysis Software | For objective quantification of staining intensity (e.g., H-score, Allred score, or continuous optical density units). |

| Reference TMA (Patient Tissue) | To validate that calibration on CLMAs translates to accurate scoring on real, heterogeneous tissue. |

Methodology:

- Pre-study Alignment: All participating laboratories receive the same study protocol, defined antibody clone, reagent lots, and the identical lot of CLMA slides.

- Staining: Each site stains the CLMA slides alongside a set of patient TMAs using the standardized protocol on their respective automated stainers.

- Digital Analysis: Slides are scanned, and regions of interest (ROIs) for each cell line core on the CLMA are annotated. Image analysis software quantifies the staining intensity within each ROI.

- Data Collection & Calibration: Sites report raw quantitative data (e.g., average optical density) for each CLMA core. A central coordinating lab analyzes the data to calculate inter-site CV for each expression level.

- Harmonization: If the inter-site CV exceeds a pre-defined threshold (e.g., 15%), staining parameters (e.g., primary antibody dilution, detection time) are adjusted iteratively, using the CLMA as a guide, until the target inter-site precision is achieved.

- Validation: The harmonized protocol is then applied to the patient TMAs. Concordance rates (e.g., percentage agreement on clinical score categories) between sites and to a central expert pathology review are calculated to confirm clinical relevance.
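The data collection and harmonization steps above can be sketched in a few lines of Python. This is a minimal illustration, not the coordinating lab's actual tooling: the function names, the four-site optical density readings, and the use of the sample standard deviation are all assumptions made for the example, and the 15% threshold is simply the example value quoted in the protocol.

```python
from statistics import mean, stdev


def inter_site_cv(readings):
    """Percent CV (sample SD / mean * 100) of one CLMA core across sites."""
    avg = mean(readings)
    if avg <= 0:
        # CV is unstable or undefined for near-zero signal (e.g., the 0 core).
        raise ValueError("CV requires a positive mean signal")
    return stdev(readings) / avg * 100.0


def review_levels(site_readings, threshold_pct=15.0):
    """Map each expression level to (CV%, exceeds_threshold)."""
    report = {}
    for level, readings in site_readings.items():
        cv = inter_site_cv(readings)
        report[level] = (cv, cv > threshold_pct)
    return report


# Hypothetical mean optical density per site (four sites) for two cores.
example = {
    "1+": [0.20, 0.22, 0.21, 0.19],
    "3+": [0.80, 1.10, 0.70, 0.95],
}
report = review_levels(example)
for level, (cv, flagged) in report.items():
    print(f"{level}: CV = {cv:.1f}%, adjust protocol: {flagged}")
```

The negative (0) core is deliberately left out of the example: with a mean signal close to zero the CV becomes meaningless, so a study would likely track that core with an absolute background limit instead.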

Visualizing the Standardization Workflow and Pathway Control

Title: IHC Inter-Lab Standardization Workflow

Title: Pathway & IHC Control Relationship

Within the critical context of immunohistochemistry (IHC) inter-laboratory comparison and standardization research, the selection and application of scoring methodologies directly impact the reproducibility and reliability of biomarker data. This guide objectively compares traditional manual scoring systems (H-Score, Allred) with emerging digital image analysis (DIA) platforms, a central consideration for modern researchers and drug development professionals aiming to reduce assay variability in multicenter trials.

Core Characteristics and Performance Metrics

The following table summarizes the fundamental attributes and comparative performance data from recent inter-laboratory studies.

Table 1: Comparison of IHC Scoring Methodologies

| Feature / Metric | Allred Score | H-Score | Digital Image Analysis (DIA) |

|---|---|---|---|

| Scoring Principle | Semi-quantitative; combines proportion (0-5) and intensity (0-3) scores. | Semi-quantitative; sum of (percentage of cells * intensity grade), range 0-300. | Quantitative; pixel-based classification and measurement of stain intensity/area. |

| Output Range | 0-8 (PS + IS; a total of 1 cannot occur). | 0-300. | Continuous variables (e.g., % positivity, average optical density, H-Score equivalent). |

| Inter-Observer Variability (Typical ICC*) | 0.70 - 0.85 | 0.75 - 0.88 | 0.90 - 0.98 |

| Throughput | Low to Moderate | Low to Moderate | High (after initial setup) |

| Key Strengths | Simple, quick, clinically validated for ER/PR in breast cancer. | More granular than Allred, sensitive to heterogeneity. | High reproducibility, objectivity, ability to analyze complex patterns and spatial relationships. |

| Key Limitations | Coarse granularity, limited sensitivity to heterogeneity. | Time-consuming, remains subjective. | High initial cost, requires algorithm training/validation, sensitive to pre-analytical variables. |

| Standardization Potential | Moderate (depends on rigorous observer training). | Moderate (depends on rigorous observer training). | High (algorithm locked once validated). |

| Typical Use Case | High-volume clinical reporting (e.g., hormone receptors). | Clinical research with continuous biomarker data. | Preclinical/clinical research requiring high precision; companion diagnostic development. |

*Intraclass Correlation Coefficient (ICC) values aggregated from recent literature.

Experimental Data from Comparative Studies

Recent studies have directly compared these methods using serial sections of breast cancer tissue microarrays (TMAs) stained for biomarkers like Estrogen Receptor (ER).

Table 2: Representative Data from an Inter-Rater Reproducibility Study (ER Scoring)

| Method | Number of Raters | Number of Cases | Average ICC (95% CI) | Mean Absolute Difference Between Highest/Lowest Score |

|---|---|---|---|---|

| Allred (Manual) | 5 | 50 | 0.79 (0.71-0.86) | 2.4 points |

| H-Score (Manual) | 5 | 50 | 0.83 (0.76-0.89) | 45 points |

| DIA (Single Algorithm) | 5 (re-analyses) | 50 | 0.96 (0.94-0.98) | 8.2 points (H-Score equivalent) |

Detailed Experimental Protocols

Protocol 1: Manual Allred and H-Score Assessment

This protocol is typical for studies comparing manual scoring outcomes.

1. Sample Preparation:

- Tissue: Formalin-fixed, paraffin-embedded (FFPE) tissue sections (4 µm).

- IHC Staining: Performed using a validated clinical-grade assay (e.g., ER SP1 clone) on an automated stainer (e.g., Ventana BenchMark, Leica BOND), with appropriate positive and negative controls included.

- Digitization: All slides scanned at 20x magnification using a whole slide scanner (e.g., Aperio AT2, Hamamatsu NanoZoomer) to facilitate remote scoring.

2. Scoring Methodology:

- Blinding: Cases anonymized and order randomized for each pathologist.

- Allred Score:

- Proportion Score (PS): Estimate the percentage of positive tumor cells: 0 (0%), 1 (<1%), 2 (1-10%), 3 (11-33%), 4 (34-66%), 5 (67-100%).

- Intensity Score (IS): Judge average intensity of positive cells: 0 (negative), 1 (weak), 2 (moderate), 3 (strong).

- Total Score: PS + IS (range 0-8). A score of ≥3 is often considered positive.

- H-Score:

- For each core, estimate the percentage of tumor cells at each intensity (0, 1+, 2+, 3+). The sum of percentages must equal 100%.

- Calculate: H-Score = (1 * %1+) + (2 * %2+) + (3 * %3+). Range 0-300.

- Training: All participating pathologists undergo joint training on 10-15 representative cases (not part of the study set) to align scoring thresholds.
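The two manual scoring rules in Protocol 1 are simple enough to write down as code, which can help when checking pathologist entries for arithmetic slips. The sketch below follows the bins and formula given above; the function names are illustrative, and the handling of non-integer percentages that fall between the listed bins (for example 10.5%) is an assumption, assigning them to the next higher proportion score.

```python
def allred_proportion_score(pct_positive):
    """Proportion Score (0-5) from the percentage of positive tumor cells."""
    if not 0 <= pct_positive <= 100:
        raise ValueError("percentage must be between 0 and 100")
    if pct_positive == 0:
        return 0
    if pct_positive < 1:
        return 1
    # Upper bounds of PS 2, 3, and 4; anything above 66% is PS 5.
    for score, upper in ((2, 10), (3, 33), (4, 66)):
        if pct_positive <= upper:
            return score
    return 5


def allred_total(pct_positive, intensity):
    """Allred Total Score = Proportion Score + Intensity Score."""
    if intensity not in (0, 1, 2, 3):
        raise ValueError("intensity score must be 0, 1, 2, or 3")
    ps = allred_proportion_score(pct_positive)
    if (ps == 0) != (intensity == 0):
        raise ValueError("PS and IS must both be 0 for a negative case")
    return ps + intensity


def h_score(pct_weak, pct_moderate, pct_strong):
    """H-Score = 1*%1+ + 2*%2+ + 3*%3+; unstained cells contribute 0."""
    parts = (pct_weak, pct_moderate, pct_strong)
    if any(p < 0 for p in parts) or sum(parts) > 100:
        raise ValueError("percentages must be non-negative and sum to <= 100")
    return 1 * pct_weak + 2 * pct_moderate + 3 * pct_strong


print(allred_total(50, 2))   # PS 4 + IS 2
print(h_score(20, 30, 40))   # remaining 10% of cells are unstained
```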

Protocol 2: Digital Image Analysis Workflow

This protocol outlines a typical DIA validation study against manual scores.

1. Image Analysis Setup:

- Software: Use a commercial (e.g., Visiopharm, HALO) or open-source (e.g., QuPath) platform.

- Algorithm Development:

- Training: Select 10-15 annotated slides (separate from test set) to train the algorithm.

- Segmentation: Train classifiers to identify tumor epithelium (vs. stroma, necrosis).

- Quantification: Define intensity thresholds for 0, 1+, 2+, 3+ staining based on optical density or color deconvolution (e.g., DAB vector).

- Algorithm Output: Configure to output Allred-like scores (PS, IS), H-Score, and % positive cells.

2. Validation and Comparison:

- Analysis: Run the locked algorithm on the test set of digitized whole slide images (e.g., 50 cases).

- Data Extraction: Export numerical results for each case.

- Statistical Comparison: Calculate correlation (Pearson's r) and concordance (ICC) between DIA-generated scores and the consensus manual scores from expert pathologists.
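The concordance step can be illustrated with a small ICC calculation. The protocol does not say which ICC form is used, so the sketch below assumes ICC(2,1) in the Shrout and Fleiss notation (two-way random effects, absolute agreement, single measurement), computed from the usual ANOVA mean squares. The function name and the paired H-scores are hypothetical.

```python
def icc_2_1(ratings):
    """ICC(2,1): two-way random effects, absolute agreement, single rater.

    `ratings` is a list of cases; each case is a list with one score per
    rater (here: one column for DIA, one for the manual consensus).
    """
    n = len(ratings)       # number of cases
    k = len(ratings[0])    # number of raters or methods
    if n < 2 or k < 2 or any(len(row) != k for row in ratings):
        raise ValueError("need >= 2 cases and the same >= 2 raters per case")

    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    # Sums of squares for cases (rows), raters (columns), and residual error.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Hypothetical H-scores: [DIA, manual consensus] for five cases.
paired_scores = [[210, 200], [35, 50], [120, 110], [280, 290], [0, 5]]
print(f"ICC(2,1) = {icc_2_1(paired_scores):.3f}")
```

Because this form penalizes systematic offsets between methods, it is stricter than Pearson's r, which is why the protocol reports both.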

Visualizations

Diagram 1: IHC Scoring Evolution and Comparison

Diagram 2: Digital Image Analysis Algorithm Workflow

The Scientist's Toolkit: Research Reagent & Solution Essentials

Table 3: Essential Materials for IHC Scoring Comparison Studies

| Item | Function/Description | Example Product/Brand |

|---|---|---|

| FFPE Tissue Microarrays (TMAs) | Provide multiple tissue cores on one slide for high-throughput, controlled comparison. | Pantomics, US Biomax, or custom-built. |

| Validated Primary Antibodies | Specific binders for the target antigen; clone and vendor consistency are critical for standardization. | FDA-cleared/CE-IVD clones (e.g., ER SP1, HER2 4B5) from Roche, Agilent, etc. |

| Automated IHC Stainer | Ensures consistent, reproducible staining protocol application across all slides. | Ventana Benchmark series, Leica BOND series, Dako Omnis. |

| Whole Slide Scanner | Converts physical slides into high-resolution digital images for manual remote scoring and DIA. | Leica Aperio AT2, Hamamatsu NanoZoomer S360, Philips IntelliSite. |

| Digital Image Analysis Software | Platform for developing and running quantitative algorithms for biomarker assessment. | Indica Labs HALO, Visiopharm, Akoya Phenoptics, QuPath (open-source). |

| Statistical Analysis Software | For calculating agreement metrics (ICC, Cohen's Kappa), correlation, and significance. | SPSS, R, MedCalc, GraphPad Prism. |

| Pathologist Annotation Tool | Software allowing expert pathologists to manually delineate regions of interest and score digitally. | Aperio ImageScope, PathXL, digital pen tablets. |

This guide, framed within ongoing research on IHC inter-laboratory comparison and standardization, provides a practical comparison of implementing leading accreditation and standardization guidelines. The goal is to equip researchers and drug development professionals with data to select frameworks that enhance reproducibility and data integrity in biomarker studies.

Comparison of Guideline Scope and Impact on IHC Standardization

The following table compares core requirements and documented impacts of three major guideline families on IHC assay performance in inter-laboratory studies.

Table 1: Key Guideline Characteristics and Documented Performance Outcomes

| Aspect | CAP Laboratory Accreditation | ASCO/CAP Biomarker-Specific Guidelines | ISO 15189 & ISO/IEC 17025 |

|---|---|---|---|

| Primary Focus | Overall laboratory quality and operational consistency. | Clinical validation and reporting of specific biomarkers (e.g., ER, HER2). | Technical competence and quality management systems. |

| Key IHC Requirements | Daily QC, equipment validation, personnel qualifications, procedure manuals. | Pre-analytic variable control, specific assay validation, rigorous scoring criteria, pathologist certification. | Measurement traceability, uncertainty estimation, participation in proficiency testing (PT). |

| Typical PT/ILC Performance Metric* | >95% pass rate for accredited labs in CAP PT programs. | ER/PR IHC: >95% concordance for positive/negative calls in validated labs. HER2 IHC: >90% concordance with FISH when guidelines followed. | Inter-lab CV reduction from >30% to <20% for semi-quantitative scores upon implementation. |

| Strength for Drug Development | Robust general lab foundation, audit readiness. | Unambiguous, clinically-relevant endpoint definitions for companion diagnostics. | International recognition, facilitates multi-country trial data harmonization. |

| Implementation Complexity | Moderate (system-wide changes). | High (assay-specific, rigorous validation). | High (detailed process documentation, uncertainty frameworks). |

*Performance data synthesized from published guideline validation studies and proficiency testing summaries.

Experimental Protocols for Guideline Validation

The comparative data in Table 1 is derived from published inter-laboratory comparison (ILC) studies. A typical protocol for such validation is outlined below.

Protocol: ILC Study to Assess Guideline Implementation Impact

- Sample Set Distribution: A central coordinating laboratory prepares a tissue microarray (TMA) containing 20-40 cores representing a range of biomarker expression (negative, low, high) and fixation times. Aliquots of identical primary antibody clones and detection kits are provided to all participants.

- Participant Labs: Laboratories are grouped: those accredited by CAP, those following specific ASCO/CAP guidelines, those accredited to ISO 15189, and a control group without specific guideline adherence.

- Staining and Analysis: All labs process the TMA using their standard IHC protocols but with the supplied reagents. Each lab scores the assays according to their standard practice (e.g., H-score, Allred score for ER; 0-3+ for HER2).

- Data Collection and Central Review: Digital slides are uploaded. A panel of 3-5 expert pathologists performs a blinded central review, establishing a consensus reference score.

- Metrics Calculated: For each laboratory group, calculate:

- Concordance Rate (%) with central review for positive/negative dichotomization.

- Intraclass Correlation Coefficient (ICC) or Cohen's Kappa for inter-rater reliability.

- Coefficient of Variation (CV%) for continuous scores (e.g., H-score).
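For the dichotomized calls, the first two metrics reduce to a few lines. The sketch below is illustrative only: the function names and the ten example calls are made up, and Cohen's kappa is shown for one laboratory against the central review rather than for a full rater panel.

```python
from collections import Counter


def concordance_rate(lab_calls, reference_calls):
    """Percentage of cases where the lab call matches the central review."""
    if not lab_calls or len(lab_calls) != len(reference_calls):
        raise ValueError("need two non-empty call lists of equal length")
    agree = sum(a == b for a, b in zip(lab_calls, reference_calls))
    return 100.0 * agree / len(lab_calls)


def cohen_kappa(lab_calls, reference_calls):
    """Chance-corrected agreement between one lab and the reference."""
    n = len(lab_calls)
    p_observed = concordance_rate(lab_calls, reference_calls) / 100.0
    lab_freq = Counter(lab_calls)
    ref_freq = Counter(reference_calls)
    # Agreement expected by chance from each side's marginal call rates.
    p_expected = sum(lab_freq[c] * ref_freq[c] for c in lab_freq) / n ** 2
    if p_expected == 1:
        raise ValueError("kappa is undefined when only one category is used")
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical positive/negative calls for ten TMA cores.
lab = ["pos", "pos", "pos", "neg", "neg", "pos", "neg", "pos", "pos", "neg"]
ref = ["pos", "pos", "neg", "neg", "neg", "pos", "neg", "pos", "pos", "pos"]
print(f"concordance = {concordance_rate(lab, ref):.0f}%")
print(f"kappa = {cohen_kappa(lab, ref):.2f}")
```

The example shows why both numbers are worth reporting: raw agreement can look respectable while kappa, which discounts chance agreement, is noticeably lower.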

Visualization of IHC Standardization Workflow

IHC Standardization Phases and Guideline Controls

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for IHC Standardization Research

| Item | Function in Standardization Studies |

|---|---|

| Certified Reference Material (CRM) | Provides a biologically defined, stable control with assigned target values (e.g., specific H-score) for assay calibration and trueness assessment. |

| Tissue Microarray (TMA) | Enables simultaneous analysis of multiple tissue cores under identical staining conditions, crucial for high-throughput ILC studies. |

| Validated Antibody Clones | Use of antibodies with documented sensitivity/specificity profiles (e.g., FDA-approved IVD clones) reduces a major source of inter-lab variability. |

| Automated Staining Platform | Standardizes staining times, temperatures, and reagent application, minimizing procedural variability between labs and runs. |

| Whole Slide Imaging Scanner | Facilitates digital pathology, enabling central blinded review, image analysis algorithms, and remote proficiency testing. |

| Image Analysis Software | Provides quantitative, objective scores (e.g., positive cell percentage, intensity measurement) to complement pathologist assessment. |

| Inter-Laboratory Comparison (ILC) Software | Platforms for secure digital slide exchange, scoring, and statistical analysis of concordance metrics across participating sites. |

Identifying and Resolving Common Pitfalls in Multi-Center IHC Studies

Within the critical context of IHC inter-laboratory comparison and standardization research, pre-analytical variables represent the most significant source of result variability. This guide objectively compares the performance impacts of different fixation protocols, tissue processing methods, and antigen retrieval (AR) techniques, supported by experimental data from recent standardization studies.

Comparative Analysis of Fixation Time on Antigen Integrity

Table 1: Impact of Formalin Fixation Time on IHC Signal Intensity (H-Score)

| Target Antigen | Optimal Fixation Window (Hours) | Signal at 4h (% of Optimal) | Signal at 24h (% of Optimal) | Signal at 72h (% of Optimal) | Key Artifact Observed |

|---|---|---|---|---|---|

| ER (Estrogen Receptor) | 6-18 | 85% | 100% | 65% | False-negative nuclear staining |

| Ki-67 | 8-24 | 95% | 100% | 40% | Loss of nuclear detail, high background |

| p53 | 6-12 | 100% | 90% | 30% | Cytoplasmic mislocalization |

| HER2 | 8-48 | 80% | 100% | 95% | Membrane staining fragmentation |

Experimental Protocol: Fixation Time Series

Method: Identical tissue cores from a breast cancer TMA were subjected to controlled 10% Neutral Buffered Formalin fixation for periods of 1h, 4h, 8h, 12h, 24h, 48h, and 72h. All samples were then processed identically (same processor, reagents) and stained in a single IHC run using validated antibodies (clone IDs: ER-SP1, Ki-67-30-9, p53-DO-7, HER2-4B5). Staining was quantified via digital image analysis (H-Score, 0-300). Controls: A fresh-frozen core fixed for 18h served as the reference control (100%).
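The "% of Optimal" columns in Table 1 can be read as each time point's H-score divided by the reference H-score. A minimal sketch of that normalization follows; the function name is illustrative and the raw H-scores are hypothetical values chosen only so that the output matches the ER row of the table.

```python
def percent_of_reference(h_scores_by_hours, reference_h_score):
    """Express each fixation time point as a percentage of the reference."""
    if reference_h_score <= 0:
        raise ValueError("reference H-score must be positive")
    return {
        hours: round(100.0 * score / reference_h_score, 1)
        for hours, score in h_scores_by_hours.items()
    }


# Hypothetical ER H-scores (0-300) keyed by fixation time in hours.
er_series = {4: 170, 24: 200, 72: 130}
relative = percent_of_reference(er_series, reference_h_score=200)
print(relative)
```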

Comparison of Tissue Processing Methodologies

Table 2: Artifact Frequency Across Different Tissue Processing Platforms

| Processing System / Method | Average Processing Time (Hours) | Vacuum & Temperature Control | Tissue Morphology Score (1-5) | IHC Reproducibility (CV%) | Common Artifacts |

|---|---|---|---|---|---|

| Manual (Bench-top) | 14-16 | No / Variable | 3.2 | 25-35% | Incomplete dehydration, uneven infiltration |

| Closed Rotary Processor A | 12 | Yes / Fixed | 4.5 | 15% | Rare edge effect, occasional over-processing |

| Closed Rotary Processor B | 6 (Rapid) | Yes / Gradients | 4.0 | 18% | Bubble artifacts under capsule if protocol too fast |

| Microwave-Assisted Processor | 2 | Yes / Active monitoring | 4.2 | 12% | Heat-related shrinkage if uncalibrated |

Experimental Protocol: Processing Comparison

Method: Matched liver and spleen tissue biopsies were divided into four equal parts. Each part was processed through one of the four systems listed above, using manufacturer-recommended reagent schedules (ethanol/xylene/paraffin). All resulting blocks were sectioned at 4 µm. H&E staining was graded by three pathologists for morphology (5=excellent). Consecutive sections were stained for CD31 and Vimentin. Inter-slide staining intensity variability was calculated as the coefficient of variation (CV%) across 10 high-power fields per slide.

Antigen Retrieval Method Efficacy & Artifacts

Table 3: Performance of Antigen Retrieval Methods on Masked Epitopes

| AR Method & Buffer pH | Optimal For Epitope Class | Retrieval Efficiency* | Artifact Risk | Background Staining |

|---|---|---|---|---|

| Heat-Induced (HIER) - Citrate pH 6.0 | Phosphoproteins, Nuclear antigens | High | Medium (Tissue detachment) | Low |

| HIER - Tris-EDTA pH 9.0 | Membrane proteins, Some cross-linked nuclear | Very High | High (Bubbling, Over-retrieval) | Medium |

| Enzymatic (Proteinase K) | Highly cross-linked, extracellular matrix | Moderate | High (Tissue digestion, holes) | High |

| Combined (Enzyme + HIER) | Formalin over-fixed (>48h) tissues | High | Very High | High |

*Efficiency measured as % recovery of staining intensity vs. unfixed frozen control.

Experimental Protocol: Antigen Retrieval Comparison

Method: A single TMA containing tissues fixed for 6h, 24h, and 72h was sectioned. Consecutive slides were subjected to the four AR conditions: Citrate pH 6.0 (20 min, 97°C), Tris-EDTA pH 9.0 (20 min, 97°C), Proteinase K (10 min, 37°C), or Proteinase K (5 min) followed by Citrate pH 6.0 (15 min, 97°C). All slides were then stained for a panel of challenging antigens (Beta-catenin, Cytokeratin 7, S100). Staining intensity and localization accuracy were scored against a validated reference standard. Artifacts were catalogued by a trained histotechnologist.

Signaling Pathway: Impact of Over-Fixation on Antigen Availability

Title: Pathway from Over-Fixation to IHC False-Negative Result

IHC Pre-Analytical Workflow Comparison

Title: IHC Workflow with Major Pre-Analytical Variable Points

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Pre-Analytical Standardization |

|---|---|

| 10% Neutral Buffered Formalin (NBF) | Gold-standard fixative; buffers pH to prevent acid-induced artifact. |

| Pre-fabricated Tissue Microarrays (TMAs) | Contain multiple tissue cores on one slide, enabling simultaneous staining under identical conditions for comparison. |

| pH-calibrated AR Buffers (pH 6.0 & 9.0) | Essential for HIER; buffer pH strongly affects which epitopes are unmasked, so it must be controlled precisely. |

| Automated Stainers with Protocol Memory | Ensure identical staining conditions (times, temperatures, reagent volumes) across runs and labs. |

| Digital Image Analysis Software | Objectively quantifies staining intensity (H-score, % positivity), removing subjective scorer bias. |

| Multi-tissue Control Slides | Slides containing known positive/negative tissues for multiple antigens, run with every batch to monitor protocol performance. |

| Barcode Tracking System | Links patient sample to every pre-analytical step (fixation time, processor ID, AR batch), enabling audit trails. |

Within the context of IHC inter-laboratory standardization research, variability in antibody performance is a critical challenge. This guide objectively compares core variables using published experimental data.

Antibody Clone Specificity Comparison

Different clones for the same target antigen can yield divergent staining patterns and intensities.

Table 1: PD-L1 Clone Performance Comparison in Non-Small Cell Lung Carcinoma

| Clone | Assay Platform | Scoring Method | Reported Sensitivity | Reported Specificity | Concordance with 22C3 Reference |

|---|---|---|---|---|---|

| 22C3 (Reference) | Dako Autostainer Link 48 | TPS | 100% (Ref) | 100% (Ref) | 100% |

| SP263 | Ventana BenchMark ULTRA | TPS/IC | 97.5% | 93.2% | 95.3% |

| SP142 | Ventana BenchMark ULTRA | IC only | 50-60% (variable) | >99% | Low (40-50%) |

| 73-10 | Dako Autostainer Link 48 | TPS | Higher sensitivity | Lower specificity | 85% |

Experimental Protocol (CAP/IASLC Study Summary):

- Tissue: Formalin-fixed, paraffin-embedded (FFPE) NSCLC tissue microarrays.

- Staining Platforms: Dako Autostainer Link 48 (for 22C3, 73-10) and Ventana BenchMark ULTRA (for SP263, SP142).

- Protocol: Manufacturer-defined, optimized protocols for each clone-platform pair were used. Includes heat-induced epitope retrieval (HIER) with specified pH buffers, primary antibody incubation (duration and concentration per protocol), and detection via polymer-based HRP systems.

- Analysis: Staining assessed by expert pathologists using Tumor Proportion Score (TPS) and Immune Cell (IC) scoring. Concordance analysis performed.

Antibody Titration Optimization

Optimal dilution is platform- and clone-dependent. An overly concentrated antibody raises background, while an overly dilute one weakens specific signal.

Table 2: ER (Clone EP1) Titration Effects on Different Platforms

| Dilution | Dako Link 48 (Score 0-3) | Ventana ULTRA (Score 0-3) | Leica Bond III (Score 0-3) | Background (All Platforms) |

|---|---|---|---|---|

| 1:100 | 3.0 (Saturated) | 3.0 | 2.5 | High |

| 1:500 | 3.0 (Optimal) | 2.5 | 2.0 | Low |

| 1:1000 | 2.0 | 2.0 (Optimal) | 2.0 (Optimal) | Negligible |

| 1:2000 | 1.0 | 1.5 | 1.5 | Negligible |

Experimental Protocol (In-house Validation):

- Antibody: ER (Clone EP1) from a major vendor.

- Tissue: FFPE breast carcinoma with known ER positivity.

- Titration: Serial dilutions (1:100 to 1:2000) prepared in antibody diluent.

- Staining: Parallel staining on three automated IHC platforms using identical retrieval (pH9, 20min) and detection (polymer-HRP) methods where possible.

- Quantification: H-score (0-300) or semi-quantitative intensity (0-3) assessed by two observers.

Platform-Dependent Performance Differences

Automated stainers differ in retrieval chemistry, incubation parameters, and detection systems.

Table 3: Key Platform Variables Impacting Staining

| Variable | Dako/Agilent Link 48 | Ventana BenchMark ULTRA | Leica Bond III |

|---|---|---|---|

| Epitope Retrieval | PT Link (Tris/EDTA, pH9) | Cell Conditioning (CC1, pH8.5) | ER1 (pH6) or ER2 (pH9) |

| Incubation Temp | Ambient (Room Temp) | 36°C - 40°C | Ambient |

| Detection Chemistry | EnVision FLEX+ | OptiView / UltraView | BOND Polymer Refine |

| Reaction Volume | ~100-200 µl (coverslip) | Liquid coverslip | ~150 µl |

| Reported Impact | Sensitive to retrieval time | Sensitive to incubation temp | Sensitive to retrieval pH choice |

Diagram 1: IHC Workflow with Platform Divergence

Diagram 2: Troubleshooting IHC Variable Source

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for IHC Standardization Studies

| Item | Function in Experiment |

|---|---|

| Validated Positive Control Tissue | Provides consistent biological reference for staining intensity and specificity across runs. |

| Isotype Control Antibody | Distinguishes specific binding from non-specific background or Fc-receptor interactions. |

| Cell Line Microarray (CLMA) | Contains engineered cells with known antigen expression levels for quantitative calibration. |

| Phosphate-Buffered Saline (PBS) | Universal wash buffer to remove unbound reagents without disrupting antibody-antigen bonds. |

| Antibody Diluent with Protein | Stabilizes antibody concentration and reduces non-specific binding to tissue or slide. |

| Polymer-Based Detection System | Amplifies signal while minimizing endogenous biotin interference compared to avidin-biotin (ABC). |

| Digital Pathology / Image Analysis Software | Enables objective, quantitative scoring of staining intensity (H-score, % positivity) to reduce observer bias. |

Within the critical thesis of IHC inter-laboratory comparison and standardization, the post-analytical phase—specifically pathologist scoring and reporting—remains a significant source of variability. This comparison guide objectively evaluates the performance of digital pathology-assisted quantification tools against traditional manual microscopy, presenting experimental data on their impact on inter-observer agreement.

Experimental Comparison of Scoring Modalities

The following table summarizes data from recent multi-institutional ring studies evaluating scoring consistency for breast cancer biomarkers (ER, PR, HER2, Ki-67).

Table 1: Inter-Observer Concordance (Fleiss' Kappa) Across Scoring Methods

| Biomarker | Manual Microscopy (Light) | Digital Pathology w/ Visual Scoring | Digital Pathology w/ AI-Assisted Scoring | Key Study (Year) |

|---|---|---|---|---|

| ER (H-Score) | 0.65 (Substantial) | 0.72 (Substantial) | 0.89 (Almost Perfect) | NordiQC ILC (2023) |

| PR (Allred) | 0.58 (Moderate) | 0.64 (Substantial) | 0.82 (Almost Perfect) | CAP ASPIRE (2024) |

| HER2 (IHC 0-3+) | 0.71 (Substantial) | 0.75 (Substantial) | 0.91 (Almost Perfect) | Gerring et al. (2024) |

| Ki-67 (% Index) | 0.51 (Moderate) | 0.55 (Moderate) | 0.85 (Almost Perfect) | International Ki-67 Consortium |

Table 2: Quantitative Reporting Variability (Coefficient of Variation %)

| Reporting Metric | Manual Method CV% | Digital/AI-Assisted CV% | Reduction in Variability |

|---|---|---|---|

| Tumor Cell Percentage | 18.5% | 6.2% | 66.5% |

| H-Score (0-300) | 22.1% | 8.7% | 60.6% |

| Ki-67 Labeling Index | 31.4% | 9.8% | 68.8% |

| Immune Cell Density (cells/mm²) | 41.2% | 12.3% | 70.1% |
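The "Reduction in Variability" column is consistent with a relative reduction in CV, although the source does not state the formula explicitly. Under that assumption, the calculation and a worked check against the first row are:

```latex
\[
\text{Reduction (\%)} =
\frac{\mathrm{CV}_{\text{manual}} - \mathrm{CV}_{\text{digital}}}{\mathrm{CV}_{\text{manual}}} \times 100
\]
\[
\text{Tumor cell percentage:}\quad
\frac{18.5 - 6.2}{18.5} \times 100 \approx 66.5\%
\]
```

The same arithmetic reproduces the remaining rows (60.6%, 68.8%, and 70.1%).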

Detailed Experimental Protocols

Protocol 1: Multi-Observer Ring Study for HER2 IHC Scoring

- Objective: To quantify inter-observer agreement for HER2 IHC (0-3+) using three methods.

- Sample Set: 60 breast carcinoma cases (pre-stained slides), 15 in each HER2 IHC category (0, 1+, 2+, and 3+), with FISH confirmation.

- Observers: 15 board-certified pathologists with varying subspecialty experience.

- Methodology:

- Phase 1 (Manual): Each pathologist scored all 60 cases via conventional light microscopy.

- Phase 2 (Digital Visual): After a 4-week washout, the same pathologists scored digitized whole-slide images (WSIs) of the cases on approved viewers.

- Phase 3 (AI-Assisted): After another washout, scores were generated using an FDA-cleared AI algorithm that provided pre-calculated membrane completeness and intensity. Pathologists could adopt or override the score.

- Analysis: Fleiss' Kappa (κ) for agreement across all observers.
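Fleiss' kappa generalizes chance-corrected agreement to more than two raters, which is what a 15-pathologist ring study needs. The sketch below implements the standard formula from a table of per-case category counts; the function names, the helper that tallies raw scores, and the three example cases are illustrative assumptions rather than the study's own code.

```python
def fleiss_kappa(counts):
    """Fleiss' kappa; counts[i][j] = raters placing case i in category j."""
    n_cases = len(counts)
    n_raters = sum(counts[0])
    if n_raters < 2 or any(sum(row) != n_raters for row in counts):
        raise ValueError("every case needs the same number (>= 2) of ratings")

    # Observed agreement: share of agreeing rater pairs, averaged over cases.
    pairs = n_raters * (n_raters - 1)
    p_cases = [(sum(c * c for c in row) - n_raters) / pairs for row in counts]
    p_observed = sum(p_cases) / n_cases

    # Chance agreement from the overall share of each category.
    total = n_cases * n_raters
    shares = [sum(row[j] for row in counts) / total
              for j in range(len(counts[0]))]
    p_expected = sum(s * s for s in shares)
    if p_expected == 1:
        raise ValueError("kappa is undefined when only one category is used")
    return (p_observed - p_expected) / (1 - p_expected)


def tally(scores_per_case, categories=("0", "1+", "2+", "3+")):
    """Convert raw HER2 scores per case into per-category counts."""
    return [[case.count(c) for c in categories] for case in scores_per_case]


# Hypothetical scores from five pathologists on three cases.
raw = [
    ["3+", "3+", "3+", "3+", "3+"],
    ["1+", "2+", "2+", "1+", "2+"],
    ["0", "0", "1+", "0", "0"],
]
print(f"Fleiss' kappa = {fleiss_kappa(tally(raw)):.2f}")
```

Running the same function separately on the Phase 1, Phase 2, and Phase 3 score tables gives the three kappa values that are compared across methods.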

Protocol 2: Quantitative H-Score Variability Analysis

- Objective: Measure variability in continuous H-score reporting for ER.

- Sample Set: 30 ER-positive cases with a wide dynamic range.

- Observers: 10 pathologists.

- Methodology: Each pathologist provided an H-Score (0-300) for each case via manual estimation and, separately, using a digital image analysis toolkit that quantified the percentage of cells at each intensity level (0, 1+, 2+, 3+). The tool calculated the final H-Score [(%3+ x 3) + (%2+ x 2) + (%1+ x 1)].

- Analysis: Coefficient of Variation (CV%) across observers for each case and method.

Visualizations

Title: IHC Scoring Workflow Variability

Title: Post-Analytical Phase in IHC Diagnostic Pathway

The Scientist's Toolkit: Research Reagent & Solution Guide

Table 3: Essential Materials for Inter-Observer Variability Studies

| Item | Function & Relevance to Standardization |

|---|---|

| Validated IHC Antibody Clones & Kits | Ensures analytical phase consistency, removing one major variable to isolate post-analytical error. Use FDA-cleared/CE-IVD kits for clinical comparisons. |

| Tissue Microarrays (TMAs) | Contain multiple patient samples on one slide, enabling high-throughput, controlled comparison of scoring across many cases under identical staining conditions. |

| Whole Slide Scanners | Digitizes slides for remote, standardized viewing. Essential for digital pathology studies. Key specs: 40x resolution, fluorescence capability for multiplex IF/IHC. |

| Digital Pathology Viewers | Software platforms (e.g., QuPath, HALO, Phenoptics) enable visual scoring on WSIs, annotation, and often integrate image analysis algorithms. |

| FDA-Cleared AI/Image Analysis Algorithms | Provide objective, quantitative metrics (e.g., % positivity, H-score, cell density) to serve as an adjunct or reference standard for pathologists. |

| Ring Study Reference Sets | Curated sets of pre-stained slides or WSIs with expert consensus or orthogonal confirmation (e.g., FISH) scores. The gold standard for conducting variability studies. |

| Statistical Analysis Software | For calculating agreement metrics (Kappa, ICC, CV%) and visualizing data (R, Python with scikit-learn, or specialized tools like MedCalc). |

Within IHC inter-laboratory comparison research, discrepant results are a significant hurdle for biomarker validation and companion diagnostic development. This guide presents real-world case studies, objectively comparing reagent and platform performance with supporting experimental data, to illuminate pathways to robust, reproducible outcomes.

Case Study 1: ER IHC - Clone Concordance

Discrepancy: Two labs reported conflicting Estrogen Receptor (ER) statuses on the same breast carcinoma tissue microarray (TMA) set, impacting patient eligibility for endocrine therapy.

Hypothesis: The primary discrepancy stemmed from the use of different anti-ER primary antibody clones (SP1 vs. 1D5) with varying sensitivities and epitope specificities, compounded by differences in retrieval conditions.

Experimental Protocol for Resolution:

- TMA: 50 formalin-fixed, paraffin-embedded (FFPE) breast cancer cases with known ER status by quantitative PCR.

- Platforms: Automated stainers (Ventana BenchMark ULTRA vs. Agilent Dako Omnis).

- Reagents: Clones SP1 (Rabbit monoclonal) and 1D5 (Mouse monoclonal). Each clone tested under two retrieval conditions: low-pH (citrate) and high-pH (EDTA/TRIS) buffers.

- Detection: Manufacturer-specific HRP polymer detection kits.

- Scoring: Digital image analysis (H-score) and pathologist review (Allred score). Discordance defined as a difference in positive/negative call.

Comparative Data:

Table 1: ER Clone and Retrieval Comparison

| Clone | Retrieval pH | Concordance with qPCR | Average H-Score | Inter-Lab CV (Score) |

|---|---|---|---|---|

| SP1 | High (9.0) | 98% (49/50) | 245 | 12% |

| SP1 | Low (6.0) | 90% (45/50) | 198 | 25% |

| 1D5 | High (9.0) | 94% (47/50) | 215 | 18% |

| 1D5 | Low (6.0) | 84% (42/50) | 165 | 32% |

Conclusion: Clone SP1 with high-pH retrieval demonstrated superior concordance and inter-laboratory reproducibility. Standardization on this protocol resolved the clinical discrepancy.

Pathway & Workflow:

Title: ER Discrepancy Troubleshooting Workflow

Case Study 2: PD-L1 (22C3) Platform Performance

Discrepancy: A drug development trial observed variable PD-L1 Tumor Proportion Scores (TPS) across testing sites, all using the FDA-approved 22C3 pharmDx kit but on different automated platforms.

Hypothesis: The "linkage" between the proprietary antibody clone (22C3), its detection system, and the platform-specific epitope retrieval (ER2) workflow is critical. Platform-induced variability in heating during retrieval or reagent dispensing was the suspected root cause.

Experimental Protocol for Resolution:

- Samples: A calibrated FFPE cell line TMA with low (1-10%), moderate (20-30%), and high (>50%) PD-L1 expression.

- Platforms: Agilent Dako Autostainer Link 48 (approved) vs. Ventana Benchmark Ultra (off-label).

- Protocol: The 22C3 antibody was run per its approved protocol on the Dako platform. On the Ventana platform, the antibody was applied with the OptiView detection kit, with careful titration of retrieval time.

- Quantification: Digital pathology analysis for TPS. Inter-platform comparability assessed via linear regression (R²) and Bland-Altman analysis.
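The two comparability measures named in the quantification step answer different questions: R² describes how tightly the platforms track each other, while Bland-Altman analysis describes how far apart their readings actually are. The sketch below is a standard-library illustration under assumed, made-up TPS values; the function names are hypothetical, and the 1.96 multiplier assumes approximately normally distributed differences.

```python
from statistics import mean, stdev


def r_squared(x, y):
    """Squared Pearson correlation, equal to R^2 of a simple linear fit of y on x."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy ** 2 / (sxx * syy)


def bland_altman(reference, test):
    """Return (bias, lower limit, upper limit) of agreement for test - reference."""
    if len(reference) != len(test) or len(reference) < 2:
        raise ValueError("reference and test must have the same length (at least 2)")
    diffs = [t - r for r, t in zip(reference, test)]
    bias = mean(diffs)
    sd = stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Made-up TPS readings (%) for the same cores on two platforms.
tps_reference = [5.0, 25.0, 60.0]
tps_test = [4.0, 22.0, 58.0]

fit = r_squared(tps_reference, tps_test)
bias, lower, upper = bland_altman(tps_reference, tps_test)
```

A platform that reads consistently low can still show an R² close to 1, so the bias and limits of agreement are the values to check against clinically relevant TPS cut-offs.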

Comparative Data:

Table 2: PD-L1 22C3 Assay Platform Comparison

| Platform | Detection System | Average TPS (Low Sample) | Average TPS (High Sample) | R² vs. Reference | Inter-Run CV |
|---|---|---|---|---|---|
| Dako Link 48 | EnVision FLEX | 5.2% | 67.5% | 0.99 (Reference) | 8% |
| Ventana Ultra | OptiView | 2.1% | 45.8% | 0.85 | 22% |
| Ventana Ultra* | OptiView (Optimized) | 4.8% | 63.2% | 0.96 | 11% |

\* Optimization involved extended retrieval (64 min) and antibody dilution adjustment.

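Conclusion: The approved linkage between platform and detection system is essential. Deviations introduce significant analytical variability: before optimization, the off-label platform showed an R² of 0.85 against the reference and an inter-run CV of 22%. Successful off-label use requires extensive validation and protocol optimization.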

Figure: PD-L1 IHC Detection Pathway & Variables

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents for IHC Troubleshooting & Standardization

| Item | Function & Role in Standardization |
|---|---|
| Calibrated FFPE Reference Materials (Cell lines, TMA) | Provide a constant biological control across runs and labs for assay performance tracking and quantitative calibration. |
| Validated Primary Antibody Clones (e.g., ER-SP1, PD-L1-22C3) | Ensure specificity and sensitivity to the target epitope. Clone selection is often the first variable to control. |
| Controlled Epitope Retrieval Buffers (Citrate pH 6.0, EDTA/TRIS pH 9.0) | Unmask target epitopes consistently. Buffer pH and heating profile are major sources of analytical variance. |
| Polymer-Based Detection Systems (HRP/AP w/ polymer) | Amplify signal with high sensitivity and low background. Must be optimized and validated for the primary antibody. |
| Chromogens (DAB, Fast Red) | Generate visible precipitate at the antigen site. Stability and lot-to-lot consistency are crucial for quantitative IHC. |
| Automated IHC Staining Platform | Standardizes the entire assay timeline (retrieval, antibody incubation, washing) to minimize operator-induced variability. |
| Digital Image Analysis Software | Enables objective, quantitative scoring (H-score, TPS, % positivity), reducing inter-observer subjectivity. |

Designing and Executing Robust Inter-Laboratory Comparison Studies (Ring Trials)

Within IHC inter-laboratory comparison and standardization research, ring trials (proficiency testing) are the cornerstone for assessing reproducibility and driving harmonization. A well-designed trial provides objective, data-driven insights into assay performance across diverse laboratory settings. This guide compares the outcomes of a structured ring trial approach against informal inter-laboratory comparisons, framing the discussion within the broader thesis that systematic design is critical for actionable standardization.

Comparative Performance: Structured vs. Informal Ring Trials

The following table summarizes key performance metrics from published ring trial literature, comparing trials with robust design elements to those with less formalized approaches.

| Performance Metric | Structured Ring Trial (with design/logistics/selection) | Informal Inter-Lab Comparison |
|---|---|---|
| Inter-lab Concordance Rate | 85-95% (with clear scoring criteria) | 60-75% (subjective assessment) |
| Data Completeness | >98% of expected datasets returned | ~70-80% of expected data |
| Outlier Identification | Clear, statistically defined outliers; root-cause analysis possible | Ambiguous, often unresolved discrepancies |
| Impact on Standardization | Leads to revised SOPs, validated antibody lots, and training modules | Results are anecdotal; limited systemic change |
| Participant Feedback Utility | Structured feedback drives protocol optimization | Unstructured feedback with low actionability |

Experimental Protocols for Key Ring Trial Components

1. Protocol for Pre-Trial Material Homogeneity and Stability Testing

- Objective: Ensure the test samples (e.g., tissue microarray sections) are uniform and stable for the trial duration.

- Methodology:

  - Homogeneity: Randomly select 10% of prepared sample units. From each, score 3 pre-defined tissue cores by 2 independent pathologists using the trial scoring scheme. Analyze variance using ANOVA; variance between units should not significantly exceed variance within units.

  - Stability: Store samples under simulated shipping (stress) and long-term (real-time) conditions. At defined intervals (e.g., 1, 3, 6 months), perform IHC staining and quantitative analysis (e.g., H-score). Demonstrate <10% deviation from baseline scores.
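The homogeneity and stability checks described above reduce to two small calculations. The following standard-library sketch is illustrative only: it assumes each sample unit contributes repeated scores of the same core, uses a plain one-way ANOVA F ratio, and treats the 10% limit as a relative deviation from baseline. All names and values are hypothetical. Converting the F ratio to a p-value needs the F distribution (for example via SciPy), which is left out to keep the example dependency-free.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA.

    groups: one list of scores per sample unit.
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    if k < 2 or n_total <= k:
        raise ValueError("need at least 2 units and more scores than units")
    grand = mean(v for g in groups for v in g)
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    ss_within = sum((v - mean(g)) ** 2 for g in groups for v in g)
    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (n_total - k)
    if ms_within == 0:
        raise ValueError("within-unit variance is zero; F ratio is undefined")
    return ms_between / ms_within, k - 1, n_total - k


def percent_deviation(baseline, value):
    """Absolute deviation from the baseline score, as a percentage of baseline."""
    return abs(value - baseline) / baseline * 100


def is_stable(baseline, scores_by_month, limit=10.0):
    """True if every time point stays within the deviation limit."""
    return all(percent_deviation(baseline, s) < limit for s in scores_by_month.values())


# Hypothetical H-scores: three sample units, three readings each.
f_ratio, df_between, df_within = one_way_anova_f([[1, 2, 3], [2, 3, 4], [3, 4, 5]])

# Hypothetical stability series: baseline H-score 200, re-stained at 1, 3, 6 months.
stable = is_stable(200, {1: 195, 3: 190, 6: 185})
```

An F ratio close to 1 is what a homogeneous batch would be expected to produce; a large ratio indicates that units differ by more than the reading noise within a unit.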

2. Protocol for Centralized vs. Decentralized Staining Comparison

- Objective: Determine if variability stems from pre-analytical/analytical factors or participant-specific interpretation.

- Methodology:

  - Arm A (Decentralized): Participants receive unstained sections and a detailed SOP. They perform staining locally using their own platforms and reagents.

  - Arm B (Centralized): Participants receive slides stained at a central lab from the same sample block.

  - All participants score their slides using the provided digital images and scoring guide. Compare inter-laboratory concordance within Arm A against concordance within Arm B. Low concordance in Arm A only implicates staining variability; low concordance in both arms points to interpretive variability.

3. Protocol for Digital Image Analysis (DIA) vs. Manual Scoring Assessment

- Objective: Quantify the impact of automated analysis on result reproducibility.

- Methodology:

  - All participants submit digital whole-slide images of their stained sections.

  - Manual Scoring: A minimum of three trained pathologists score each image independently using the trial rubric.

  - DIA Scoring: A validated algorithm, calibrated on a separate image set, analyzes all images for the same parameters (e.g., percentage positivity, intensity).

  - Compare the coefficient of variation (CV) for the manual scores and the DIA results across all laboratories.
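The final comparison step can be sketched as a per-case CV across laboratories, averaged over cases for each scoring method. This is a minimal illustration with hypothetical H-scores and function names; it assumes the sample standard deviation and that each case has scores from at least two laboratories with a non-zero mean.

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation: sample SD divided by the mean, in percent."""
    if len(values) < 2:
        raise ValueError("need at least two values")
    m = mean(values)
    if m == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return stdev(values) / m * 100


def mean_cv_across_cases(scores_by_case):
    """Average the inter-laboratory CV over all cases.

    scores_by_case maps a case ID to the list of scores, one per laboratory.
    """
    return mean(cv_percent(scores) for scores in scores_by_case.values())


# Hypothetical H-scores for two cases read by three laboratories.
manual = {"case_A": [80, 100, 120], "case_B": [160, 200, 240]}
dia = {"case_A": [95, 100, 105], "case_B": [190, 200, 210]}

manual_cv = mean_cv_across_cases(manual)
dia_cv = mean_cv_across_cases(dia)
```

Because CV is unstable for cases with means near zero, negative or very weakly stained cases are usually better reported separately than averaged into a single figure.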

Visualizing the Ring Trial Workflow and Analysis

Figure: Ring Trial Phased Workflow Diagram

Figure: Ring Trial Data Analysis and Root-Cause Pathway

The Scientist's Toolkit: Key Research Reagent Solutions for IHC Ring Trials

| Item | Function in Ring Trial |
|---|---|
| Validated, Batch-Controlled Primary Antibodies | Ensures all participants use an identical, characterized reagent, removing a major variable in staining. |
| Multi-tissue Microarray (TMA) Blocks | Yields many serial sections carrying the same set of tissue cores from a single block, enabling homogeneity testing and internal controls. |
| Reference Standard Slides (IHC-RSS) | Pre-stained, characterized slides with defined target expression levels for participant calibration and education. |
| Automated Staining Platform Reagents | Buffer, detection kit, and substrate solutions optimized for specific platforms to minimize analytical variance. |
| Digital Pathology Image Analysis Software | Provides objective, quantitative scoring to decouple analytical variability from interpretative variability. |
| Stabilized, HRP/AP-conjugated Polymer Detection Systems | High-sensitivity, low-background detection systems that are robust across a range of antigen expression levels. |
| Antigen Retrieval Buffer Standardization Kits | Pre-formulated, pH-calibrated buffers (e.g., citrate, EDTA) to control epitope exposure across labs. |

Within the broader thesis on immunohistochemistry (IHC) inter-laboratory comparison and standardization research, selecting appropriate statistical tools is critical for assessing agreement and reliability. Concordance rates, Kappa statistics, and Intraclass Correlation Coefficients (ICC) serve distinct but complementary purposes in evaluating the consistency of IHC scoring across laboratories, observers, and assay runs. This guide objectively compares their performance, supported by experimental data from standardization studies.

Direct Comparison of Statistical Tools

The following table summarizes the core characteristics, applications, and performance of the three tools in the context of IHC inter-laboratory comparisons.

Table 1: Comparison of Agreement and Reliability Statistics for IHC Analysis

| Feature | Concordance Rate | Cohen's / Fleiss' Kappa | Intraclass Correlation Coefficient (ICC) |
|---|---|---|---|
| Primary Purpose | Measures simple proportional agreement. | Measures chance-corrected agreement for categorical data. | Measures reliability/agreement for continuous or ordinal data. |
| Data Type | Categorical (Positive/Negative). | Categorical (Nominal or Ordinal). | Continuous, Ordinal (interval-scaled assumption). |
| Handles Multiple Raters? | Yes (Overall % agreement). | Cohen's: 2 raters. Fleiss': >2 raters. | Yes, various models (1-way, 2-way, mixed). |
| Accounts for Chance Agreement? | No. | Yes. | Yes (partitions variance components). |
| Typical IHC Application | Initial screening of assay reproducibility. | Agreement on binary (positive/negative) or categorical (0, 1+, 2+, 3+) scores. | Agreement on continuous scores (e.g., H-scores, percentage positivity) or ordinal scores treated as interval. |
| Key Limitation | Not corrected for chance, so it can be high even when most of the agreement is expected by chance alone. | Can be low despite high agreement if prevalence is very high or low (Kappa paradox). | Model selection is critical; sensitive to range of true values in sample. |
| Performance in Recent IHC Ring Trials [1,2] | Raw concordance: 85-95% common. | Moderate Kappa (0.4-0.6) common due to prevalence effects. | Often the preferred measure for H-scores; ICC >0.9 for optimized protocols. |

Supporting Experimental Data from IHC Standardization Studies

Table 2: Example Data from a Multicenter IHC HER2 Scoring Study (n=50 cases, 5 laboratories)

| Statistical Tool | Calculated Value | Interpretation in IHC Context |
|---|---|---|
| Overall Concordance Rate (Positive vs. Negative) | 92% | High raw agreement on call. |
| Cohen's Kappa (Pairwise, Lab A vs. B) | 0.78 | Substantial agreement beyond chance. |
| Fleiss' Kappa (All 5 labs, binary score) | 0.65 | Substantial agreement across all centers, though lower than the pairwise value. |
| ICC (2,1) - Two-way random, single rater for H-score (0-300) | 0.89 (95% CI: 0.82-0.94) | Excellent reliability across labs for continuous measure. |
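To make the difference between raw concordance and chance-corrected agreement concrete, the sketch below implements Cohen's Kappa (two raters) and Fleiss' Kappa (several raters) from their textbook definitions using only the standard library. The function names and the example calls are hypothetical and are not the study data behind Table 2. Both functions are undefined when chance agreement equals 1, for example when every rater assigns a single category throughout.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Cohen's Kappa for two raters scoring the same cases."""
    n = len(rater_a)
    if n == 0 or n != len(rater_b):
        raise ValueError("both raters must score the same non-empty case list")
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of the two raters' marginal proportions per category.
    p_expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / n ** 2
    return (p_observed - p_expected) / (1 - p_expected)


def fleiss_kappa(ratings):
    """Fleiss' Kappa; ratings holds one list of labels per case, one label per rater."""
    n_cases = len(ratings)
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(case) != n_raters for case in ratings):
        raise ValueError("every case needs the same number (>= 2) of ratings")
    counts = [Counter(case) for case in ratings]
    # Per-case agreement: share of rater pairs that chose the same category.
    per_case = [
        (sum(c * c for c in cnt.values()) - n_raters) / (n_raters * (n_raters - 1))
        for cnt in counts
    ]
    p_observed = sum(per_case) / n_cases
    totals = Counter()
    for cnt in counts:
        totals.update(cnt)
    p_expected = sum((t / (n_cases * n_raters)) ** 2 for t in totals.values())
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical high-prevalence set: 90 cases positive in both labs,
# 5 positive only in lab A, 5 positive only in lab B, none negative in both.
lab_a = ["pos"] * 95 + ["neg"] * 5
lab_b = ["pos"] * 90 + ["neg"] * 5 + ["pos"] * 5

raw_agreement = sum(a == b for a, b in zip(lab_a, lab_b)) / len(lab_a)
kappa_ab = cohen_kappa(lab_a, lab_b)
```

In this constructed example the two labs agree on 90% of cases, yet Kappa is slightly negative because almost all of that agreement is expected by chance when nearly every case is positive. This is the prevalence effect listed as Kappa's key limitation.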

Experimental Protocols for Cited Data

Protocol 1: IHC Inter-Laboratory Ring Trial for HER2 (ASCO/CAP Guideline Validation)

- Sample Selection: A tissue microarray (TMA) of 50 invasive breast carcinoma cases with pre-defined HER2 status (0, 1+, 2+, 3+) is constructed.

- Participant Labs: Five certified pathology laboratories are recruited. Each receives the same TMA block, standard operating procedure (SOP), and antibody clone (e.g., 4B5).

- Staining & Scoring: Labs perform IHC per SOP. Two pathologists at each lab score all cases independently as 0, 1+, 2+, 3+ and derive a binary clinical call (Positive/Negative).

- Data Collection: Scores (ordinal and binary calls) and continuous H-scores are collected centrally.

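- Statistical Analysis: Calculate: a) Overall concordance rate for the binary call. b) Cohen's Kappa for pairwise lab comparisons and Fleiss' Kappa across all labs for the binary call; for the ordinal 0 to 3+ scores, a weighted Kappa is the better fit because unweighted Kappa treats every disagreement as equally severe. c) ICC (two-way random, absolute agreement, single rater) for H-scores to assess lab-to-lab reliability.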
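The ICC named in step c) can be computed directly from a two-way ANOVA decomposition. The sketch below follows the standard ICC(2,1) formula (two-way random effects, absolute agreement, single rater), using only the standard library. The function name and the example H-scores are hypothetical, and confidence intervals are omitted.

```python
def icc_2_1(scores):
    """ICC(2,1) for a complete cases x laboratories table of scores.

    scores: list of rows, one per case, each holding one score per laboratory.
    """
    n = len(scores)      # number of cases
    k = len(scores[0])   # number of laboratories
    if n < 2 or k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need a complete table with at least 2 cases and 2 labs")

    grand = sum(sum(row) for row in scores) / (n * k)
    case_means = [sum(row) / k for row in scores]
    lab_means = [sum(row[j] for row in scores) / n for j in range(k)]

    ss_cases = k * sum((m - grand) ** 2 for m in case_means)
    ss_labs = n * sum((m - grand) ** 2 for m in lab_means)
    ss_total = sum((v - grand) ** 2 for row in scores for v in row)
    ss_error = ss_total - ss_cases - ss_labs

    ms_cases = ss_cases / (n - 1)
    ms_labs = ss_labs / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    # Absolute agreement: systematic lab offsets (ms_labs) lower the coefficient.
    return (ms_cases - ms_error) / (
        ms_cases + (k - 1) * ms_error + k * (ms_labs - ms_error) / n
    )


# Hypothetical H-scores: lab 2 reads every case exactly 10 points higher than lab 1.
offset_scores = [[10, 20], [20, 30], [30, 40]]
icc_offset = icc_2_1(offset_scores)
```

In this example the two labs rank the cases identically, but the constant 10-point offset pulls the absolute-agreement ICC well below 1. That behaviour is the reason to choose absolute agreement over consistency when labs must reach the same numeric score rather than the same ordering.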

Protocol 2: Intra-Observer Agreement Study for PD-L1 Combined Positive Score (CPS)

- Design: A reproducibility study within a single lab.

- Scoring Process: One senior pathologist scores 100 PD-L1 stained slides for CPS (a continuous value). The same slides are re-randomized and re-scored by the same pathologist 4 weeks later, blinded to initial scores.

- Statistical Analysis: ICC (two-way mixed, consistency) is calculated to assess intra-observer reliability of the continuous CPS metric. Concordance rates and Kappa are less appropriate for this continuous measure.

Visualization of Statistical Tool Selection Workflow

Figure: Decision Workflow for Selecting IHC Agreement Statistics

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for IHC Standardization and Agreement Studies

| Item | Function in IHC Comparison Research |

|---|---|